Hi everyone! 👋

The AI conversation has moved beyond model size to architectural fit. As agentic systems enter production, treating LLMs as interchangeable is no longer viable.

LLMs are dividing into specialized architectures, each optimized for reasoning, perception, action, or efficiency. Agent performance now depends on matching the right architecture to the right role.

My aim with this newsletter is to break down the 10 core LLM architectures shaping modern AI agents and how to think about choosing the right one.

GPT (Generative Pretrained Transformer)

Let's start with the OG. GPT-style models are decoder-only transformers that revolutionized natural language processing. They work by predicting the next token given all previous tokens: simple in concept, powerful in execution.

How It Works:

Tokenization: Break text into manageable chunks

Massive pretraining: Learn patterns from billions of documents

Autoregressive generation: Predict one token at a time, using each prediction to inform the next
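To make the three steps concrete, here is a minimal sketch using a toy bigram model. It is a deliberate simplification: a real GPT conditions on all previous tokens through a transformer and uses a subword tokenizer, while this stand-in only looks at the last token. The function names (`tokenize`, `train_bigram`, `generate`) are made up for illustration.

```python
import random
from collections import Counter, defaultdict

def tokenize(text):
    # Toy tokenizer: lowercase whitespace split (real GPTs use subword tokenizers).
    return text.lower().split()

def train_bigram(corpus):
    # Stand-in for pretraining: count which token follows which.
    counts = defaultdict(Counter)
    for doc in corpus:
        tokens = tokenize(doc)
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def generate(counts, prompt, max_new_tokens=5, seed=0):
    # Autoregressive loop: sample one token, append it, repeat.
    rng = random.Random(seed)
    tokens = tokenize(prompt)
    for _ in range(max_new_tokens):
        followers = counts.get(tokens[-1])
        if not followers:
            break  # nothing ever followed this token in the corpus
        choices, weights = zip(*followers.items())
        tokens.append(rng.choices(choices, weights=weights)[0])
    return " ".join(tokens)

counts = train_bigram(["the cat sat on the mat"])
print(generate(counts, "the cat", max_new_tokens=3))  # the cat sat on the
```

Each new token is appended to the sequence and becomes part of the context for the next prediction, which is the whole idea behind autoregressive generation.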

GPT architectures shine as generalist language models, developing an emergent understanding of context, intent, and language patterns through sheer exposure to human text. This makes them highly effective for natural conversations, ambiguous queries, coherent response generation, and code synthesis.

However, GPTs are fundamentally pattern predictors rather than reasoners. They struggle with multi-step logical thinking and can produce confident but incorrect outputs. They work well for conversational and content-focused agents, but once an agent needs to reason, plan, or verify decisions, GPT alone is no longer sufficient.

Best For: Conversational AI, content generation, code assistants, summarization, general-purpose language tasks

MoE (Mixture of Experts)

Here's where things get interesting. MoE architectures introduce sparse computation: instead of running all parameters for every token, a gating network routes each token to a small subset of "expert" networks.

How It Works:

Gating Network: Routes tokens to specific experts

Expert Networks: Specialized feed-forward networks

Sparse Activation: Only top-k experts activated per token

Efficiency: Scale to billions of parameters without proportional compute

What makes MoE brilliant for agents? Cost efficiency at scale. You can build models with 100B+ parameters that only activate 10-20B per forward pass. This matters enormously when you're running thousands of agent interactions per hour. The trade-off? Training complexity and ensuring balanced expert utilization—but when done right, MoE delivers frontier performance at a fraction of the cost.
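A rough sketch of top-k routing, assuming the gate scores are already computed. In a real MoE layer a learned gating network produces them from the token representation and the experts are feed-forward networks; here the experts are plain functions and `top_k_route` and `moe_layer` are illustrative names only.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_logits, k=2):
    # Keep the k highest-scoring experts and renormalize their weights.
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    weights = softmax([gate_logits[i] for i in top])
    return list(zip(top, weights))

def moe_layer(x, gate_logits, experts, k=2):
    # Only the selected experts run; the rest cost nothing for this token.
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))

# Four toy "experts"; the gate strongly prefers experts 1 and 2 for this token.
experts = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x, lambda x: 4 * x]
print(moe_layer(1.0, [0.0, 5.0, 5.0, -1.0], experts, k=2))  # 2.5
```

With 4 experts and k=2, half of the expert parameters stay idle per token. Scale the same idea up and you get the "100B+ total, 10-20B active" pattern described above.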

Best For: High-throughput production systems, multilingual models, cost-sensitive applications at scale

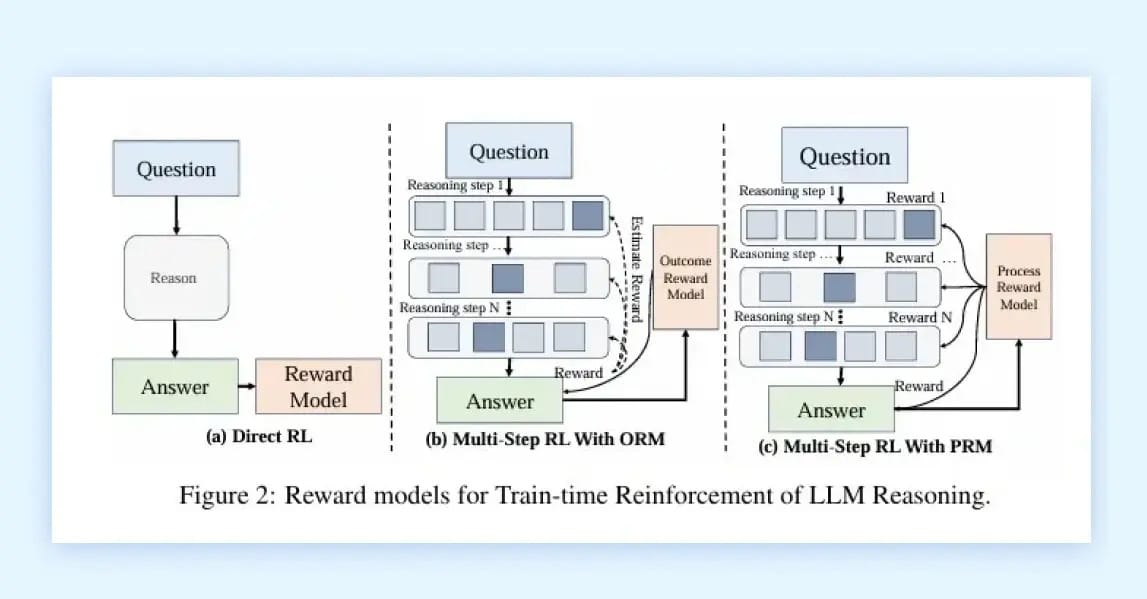

LRM (Large Reasoning Models)

LRMs represent a fundamental shift: these aren't just bigger language models, they're models explicitly trained for multi-step reasoning. Think of them as the difference between pattern matching and actual problem-solving.

How It Works:

Chain-of-Thought: Internal reasoning steps before final answer

Decomposition: Break complex problems into manageable sub-problems

Self-Verification: Models check their own reasoning

Training: Reinforcement learning on reasoning traces

The breakthrough here is training methodology. LRMs use reinforcement learning to reward correct reasoning paths, not just correct answers. This produces models that can tackle novel problems through systematic decomposition rather than memorization. For AI agents handling complex decision-making, financial analysis, medical diagnosis, and strategic planning, LRMs are becoming essential.
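Here is a toy illustration of the difference between rewarding the answer and rewarding the reasoning path. It is only a sketch: real systems rely on learned reward models or automated verifiers, whereas this one checks arithmetic steps exactly. The trace format `(a, op, b, claimed_result)` and both function names are assumptions made for the example.

```python
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

def step_is_valid(step):
    # A step is (a, op, b, claimed_result); verify the claim by recomputing it.
    a, op, b, claimed = step
    return OPS[op](a, b) == claimed

def outcome_reward(trace, expected_answer):
    # Rewards only the final answer, however it was reached.
    return 1.0 if trace[-1][3] == expected_answer else 0.0

def process_reward(trace):
    # Rewards the fraction of reasoning steps that actually check out.
    return sum(step_is_valid(s) for s in trace) / len(trace)

# Problem: (2 + 3) * 4 = 20
sound_trace = [(2, "+", 3, 5), (5, "*", 4, 20)]
lucky_trace = [(2, "+", 3, 6), (6, "*", 4, 20)]  # right answer, broken steps

print(outcome_reward(sound_trace, 20), process_reward(sound_trace))  # 1.0 1.0
print(outcome_reward(lucky_trace, 20), process_reward(lucky_trace))  # 1.0 0.0
```

An outcome-only reward cannot tell the two traces apart; a step-level check can, which is the intuition behind rewarding reasoning paths.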

The latest models like OpenAI's o1 and DeepSeek-R1 showcase this paradigm: they spend more "time" thinking before responding, producing dramatically better results on tasks requiring genuine reasoning.

Best For: Complex problem-solving, mathematical reasoning, strategic planning, multi-step decision-making

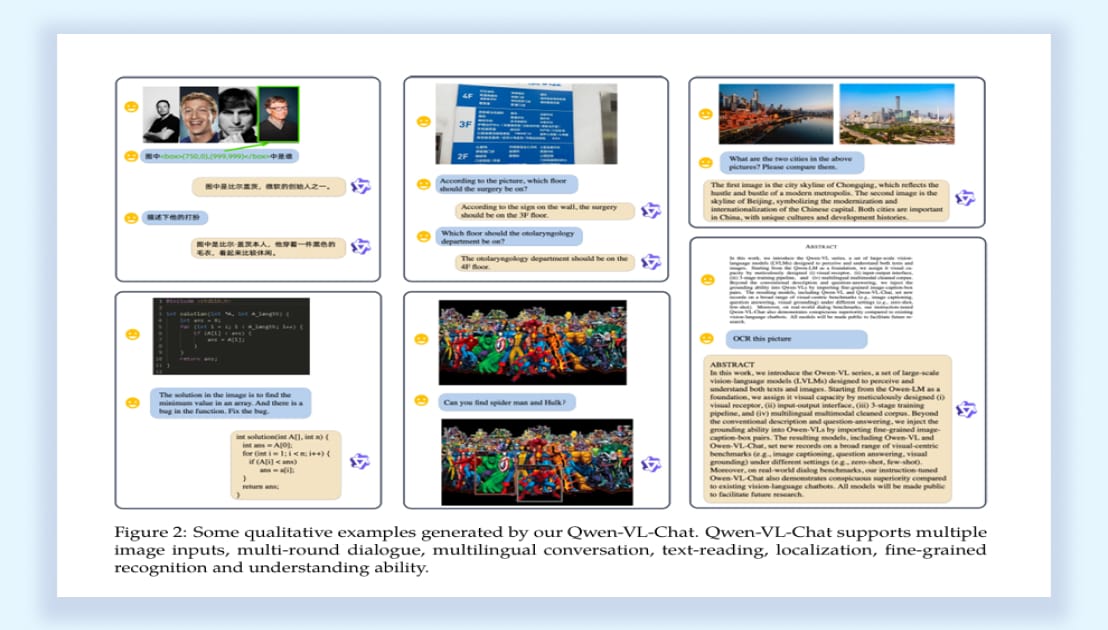

VLM (Vision-Language Models)

VLMs bridge the gap between visual perception and language understanding. For agents operating in the real world or even just navigating GUIs, this multimodal capability is non-negotiable.

How It Works:

Dual Encoders: Separate image and text encoding pipelines

Multimodal Fusion: Joint attention mechanisms across modalities

Unified Output: Text generation conditioned on visual inputs

Training: Contrastive learning + caption generation

The power of VLMs becomes obvious when you need agents to understand screenshots, interpret diagrams, or navigate visual interfaces. Models like GPT-4V, Gemini, and Claude can now "see" and reason about images with remarkable accuracy. For building GUI automation agents or robots that need to understand their environment, VLMs are the foundation.
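The contrastive part of training can be sketched in a few lines. This is a simplified, CLIP-style symmetric loss written in plain Python, assuming the image and text encoders have already produced one embedding per item; the hand-written 2-D vectors and the `temperature` default are placeholders, not values from any particular model.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

def cross_entropy_diagonal(logits):
    # Average loss when the correct match for row i is column i.
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)
        log_z = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_z - row[i]
    return total / len(logits)

def contrastive_loss(image_embs, text_embs, temperature=0.1):
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    # Similarity of every image to every caption in the batch.
    logits = [[dot(i, t) / temperature for t in txts] for i in imgs]
    transposed = [list(col) for col in zip(*logits)]
    # Symmetric: image-to-text and text-to-image directions.
    return 0.5 * (cross_entropy_diagonal(logits) + cross_entropy_diagonal(transposed))

images = [[1.0, 0.0], [0.0, 1.0]]
matched = [[1.0, 0.0], [0.0, 1.0]]   # caption i describes image i
shuffled = [[0.0, 1.0], [1.0, 0.0]]  # captions swapped
print(contrastive_loss(images, matched) < contrastive_loss(images, shuffled))  # True
```

Training pushes matching image-caption pairs together and mismatched pairs apart, which is what gives the two encoders a shared embedding space to fuse over.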

Best For: Visual question answering, GUI automation, accessibility tools, multimodal agents, document understanding

SLM (Small Language Models)

Not every problem needs a 70B parameter model. SLMs optimize for speed, cost, and deployability, which is crucial when you're running agents on edge devices or need millisecond latency.

How It Works:

Distillation: Learning from larger teacher models

Architecture Optimization: Grouped-query attention, reduced dimensions

Quantization: Lower precision inference

Pruning: Remove redundant parameters

The real magic of SLMs isn't what they sacrifice, it's what they preserve. Through clever distillation and training techniques, models like Phi-3, Gemma, and Mistral 7B deliver surprisingly capable performance at 3-7B parameters. For agents that need to run locally, on mobile devices, or in resource-constrained environments, SLMs are the only viable option.
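Of the four techniques, quantization is the easiest to show in code. Below is a minimal sketch of symmetric int8 quantization for a single list of weights, assuming one scale per tensor. Production toolchains typically use per-channel or per-group scales and calibration data, so treat this as the idea rather than a recipe.

```python
def quantize_int8(weights):
    # One scale for the whole tensor, chosen so the largest weight maps to 127.
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    # Approximate reconstruction of the original float weights.
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error <= scale / 2 + 1e-9)
```

Each weight now fits in 1 byte instead of the 4 bytes of a float32, at the price of a rounding error of at most half the scale per weight.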

Best For: Edge deployment, mobile applications, real-time systems, cost-sensitive applications, on-device inference

LAM (Large Action Models)

LAMs represent the evolution from "understanding" to "doing." These models don't just generate text; they generate structured actions that get executed in the real world.

How It Works:

Action-Oriented Training: Fine-tuned on action trajectories

Structured Outputs: JSON, API calls, function parameters

Tool Integration: Native understanding of available tools

Feedback Loops: Learn from action outcomes

This is where things get exciting for practical AI agents. LAMs are trained specifically to understand the tools at their disposal and generate valid, executable actions. Rather than generating natural language that needs parsing, they produce structured outputs ready for execution. Think web navigation, API orchestration, and robotic control: LAMs are purpose-built for agents that need to do things, not just talk about them.
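On the receiving end, "structured outputs ready for execution" usually means the agent runtime validates the model's JSON against a tool registry before running anything. A minimal sketch of that step follows; the tool names, the `{"tool": ..., "args": ...}` shape, and `execute_action` are all hypothetical and not tied to any specific framework.

```python
import json

# Hypothetical tool registry: expected argument types plus the function to call.
TOOLS = {
    "search_web": {
        "params": {"query": str},
        "fn": lambda query: f"results for {query!r}",
    },
    "send_email": {
        "params": {"to": str, "body": str},
        "fn": lambda to, body: f"sent to {to}",
    },
}

def execute_action(raw_output):
    # Parse the model's structured output and validate it before executing.
    action = json.loads(raw_output)
    tool = TOOLS.get(action.get("tool"))
    if tool is None:
        raise ValueError(f"unknown tool: {action.get('tool')!r}")
    args = action.get("args", {})
    if set(args) != set(tool["params"]):
        raise ValueError(f"expected arguments {sorted(tool['params'])}, got {sorted(args)}")
    for name, expected_type in tool["params"].items():
        if not isinstance(args[name], expected_type):
            raise TypeError(f"argument {name!r} must be {expected_type.__name__}")
    return tool["fn"](**args)

model_output = '{"tool": "search_web", "args": {"query": "mixture of experts"}}'
print(execute_action(model_output))  # results for 'mixture of experts'
```

Validation matters because the action has side effects: rejecting an unknown tool or a malformed argument is far cheaper than executing it.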

Best For: Web automation, API orchestration, task execution agents, robotic control, workflow automation

HRM (Hierarchical Reasoning Models)

HRMs solve a critical problem in agent architecture: how do you plan both strategically (high-level goals) and tactically (immediate actions) simultaneously?

How It Works:

Dual-Level Architecture: High-level planning + low-level execution

Hierarchical State Encoding: Abstract and concrete representations

Iterative Refinement: High-level plan guides low-level actions

Dynamic Adaptation: Adjust strategy based on execution feedback

The beauty of HRMs is their ability to maintain long-term coherence while remaining adaptive to immediate changes. The high-level planner thinks in terms of goals and strategies, while the low-level executor handles the nitty-gritty details. This hierarchical decomposition mirrors how humans actually plan complex tasks, and it's proving essential for agents tackling long-horizon problems.
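The two-level loop can be sketched with a toy task: reaching a target position on a number line. This only illustrates the control flow (a slow planner setting subgoals, a fast executor taking small steps), not how an actual HRM is built or trained; every name here is made up for the example.

```python
def high_level_plan(position, goal, stride=5):
    # Slow loop: pick the next subgoal, at most `stride` units toward the goal.
    delta = goal - position
    return position + max(-stride, min(stride, delta))

def low_level_step(position, subgoal):
    # Fast loop: one small move toward the current subgoal.
    if position < subgoal:
        return position + 1
    if position > subgoal:
        return position - 1
    return position

def run(start, goal, stride=5, max_steps=100):
    position, steps, subgoals = start, 0, []
    while position != goal and steps < max_steps:
        # Re-plan from wherever execution actually ended up.
        subgoal = high_level_plan(position, goal, stride)
        subgoals.append(subgoal)
        while position != subgoal and steps < max_steps:
            position = low_level_step(position, subgoal)
            steps += 1
    return position, subgoals

print(run(0, 12))  # (12, [5, 10, 12])
```

The planner is consulted three times while the executor takes twelve steps. Because each new subgoal is computed from the position actually reached, execution feedback flows back into the plan.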

Best For: Complex multi-step planning, long-horizon tasks, strategic decision-making, hierarchical workflows

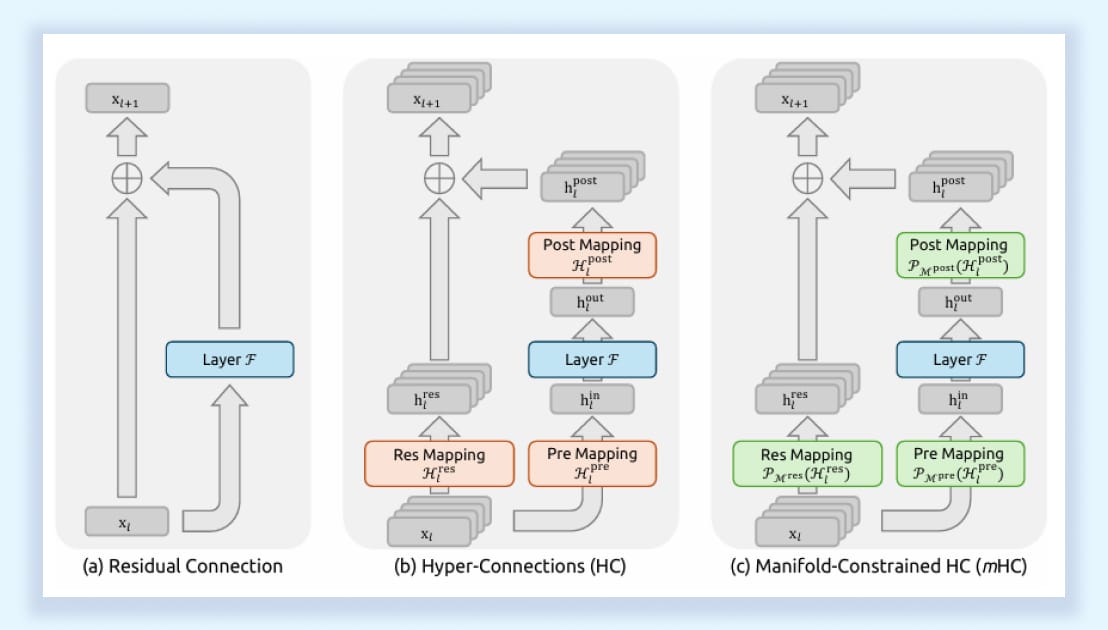

mHC (Manifold-Constrained Hyper-Connections)

Now this is where architectural innovation gets really interesting. mHC isn't about what the model does; it's about how information flows through the model during training and inference.

How It Works:

Enhanced Connectivity: Multiple parallel residual streams instead of one

Manifold Constraints: Geometric constraints ensure training stability

Identity Mapping: Preserves gradient flow in very deep networks

Efficient Implementation: Only 6-7% training overhead

DeepSeek's mHC architecture solves a fundamental problem: as models get deeper, training becomes unstable. Traditional residual connections create a bottleneck; naive widening causes instability. mHC threads the needle by using manifold geometry to maintain stability while increasing the information capacity of inter-layer connections.
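One way to picture the constraint, assuming it amounts to keeping the matrix that mixes the parallel residual streams doubly stochastic (every row and every column sums to 1) through Sinkhorn-style normalization. That is my reading of the idea, not DeepSeek's implementation; the function names and the two-stream setup are hypothetical.

```python
import math

def sinkhorn(logits, iters=50):
    # Alternately normalize rows and columns of a positive matrix until
    # it is (approximately) doubly stochastic.
    m = [[math.exp(x) for x in row] for row in logits]
    for _ in range(iters):
        m = [[x / sum(row) for x in row] for row in m]
        col_sums = [sum(col) for col in zip(*m)]
        m = [[x / col_sums[j] for j, x in enumerate(row)] for row in m]
    return m

def mix_streams(streams, mixing):
    # New stream i is a weighted blend of all old streams.
    n, width = len(streams), len(streams[0])
    return [
        [sum(mixing[i][j] * streams[j][d] for j in range(n)) for d in range(width)]
        for i in range(n)
    ]

mixing = sinkhorn([[0.0, 1.0], [2.0, 0.5]])  # unconstrained scores for 2 streams
streams = [[1.0, 2.0], [3.0, 4.0]]
mixed = mix_streams(streams, mixing)
print([round(sum(row), 6) for row in mixing])  # [1.0, 1.0]
print(round(sum(map(sum, mixed)), 6))          # 10.0
```

Because the columns sum to 1, mixing redistributes information between streams without amplifying or shrinking the total signal, which is the stability property that naive widening lacks.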

Why does this matter for agents? Because it enables more stable training of deeper, more capable models without the typical scaling headaches. DeepSeek demonstrated 2.1-2.3% performance improvements on reasoning tasks with their 27B parameter model—and crucially, the benefits scale with model size.

Best For: Very large models requiring training stability, long-context reasoning, scaling to frontier model sizes

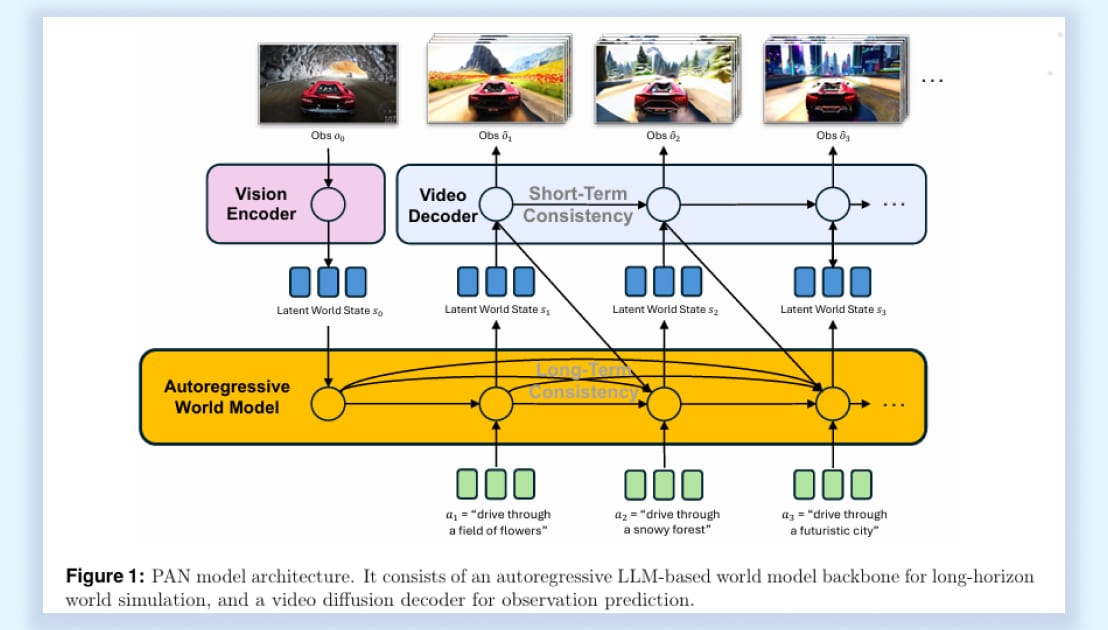

World Models

Here's the paradigm shift that's been brewing: what if AI agents could mentally simulate the consequences of their actions before taking them? That's exactly what World Models enable.

How It Works:

Environment Modeling: Learn physics, causality, and dynamics

Predictive Simulation: Imagine future states given actions

Latent Representations: Compact encoding of world state

Action-Conditioned Prediction: Forecast outcomes of potential actions

World Models mark a fundamental shift away from pure language prediction. Instead of generating the next token, they model how the world itself evolves, making them essential for embodied agents, robotics, autonomous vehicles, and digital twins that must understand dynamics, causality, and physical constraints, not just labels and descriptions.

By enabling simulation, World Models allow agents to reason about “what if” scenarios, plan multi-step actions, and learn from imagined experience. As Yann LeCun notes, LLMs lack grounded world simulation and persistent internal state; World Models directly address this gap by providing the spatial and temporal reasoning modern autonomous systems require.
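Here is what "imagine, then act" looks like in its simplest form: try every short action sequence inside the model and keep the one whose imagined outcome is best. In a real world model the dynamics are learned from data and the search is much smarter; in this sketch the `dynamics` function is hand-written and all names are illustrative.

```python
from itertools import product

def dynamics(state, action):
    # Stand-in for a learned model: state is (position, velocity),
    # and the action is an acceleration applied for one time step.
    position, velocity = state
    velocity += action
    position += velocity
    return (position, velocity)

def plan(state, target, horizon=3, actions=(-1, 0, 1)):
    # Simulate every action sequence and keep the best imagined outcome.
    best_seq, best_cost = None, float("inf")
    for seq in product(actions, repeat=horizon):
        imagined = state
        for action in seq:
            imagined = dynamics(imagined, action)
        # Cost: distance from the target plus leftover speed (we want to stop there).
        cost = abs(imagined[0] - target) + abs(imagined[1])
        if cost < best_cost:
            best_seq, best_cost = seq, cost
    return best_seq, best_cost

print(plan((0, 0), target=2))  # ((1, 0, -1), 0)
```

The agent "discovers" accelerate, coast, brake without ever moving in the real environment. That is the payoff of planning inside a model.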

Best For: Robotics, autonomous vehicles, embodied AI, digital twins, simulation-based planning, physical task execution

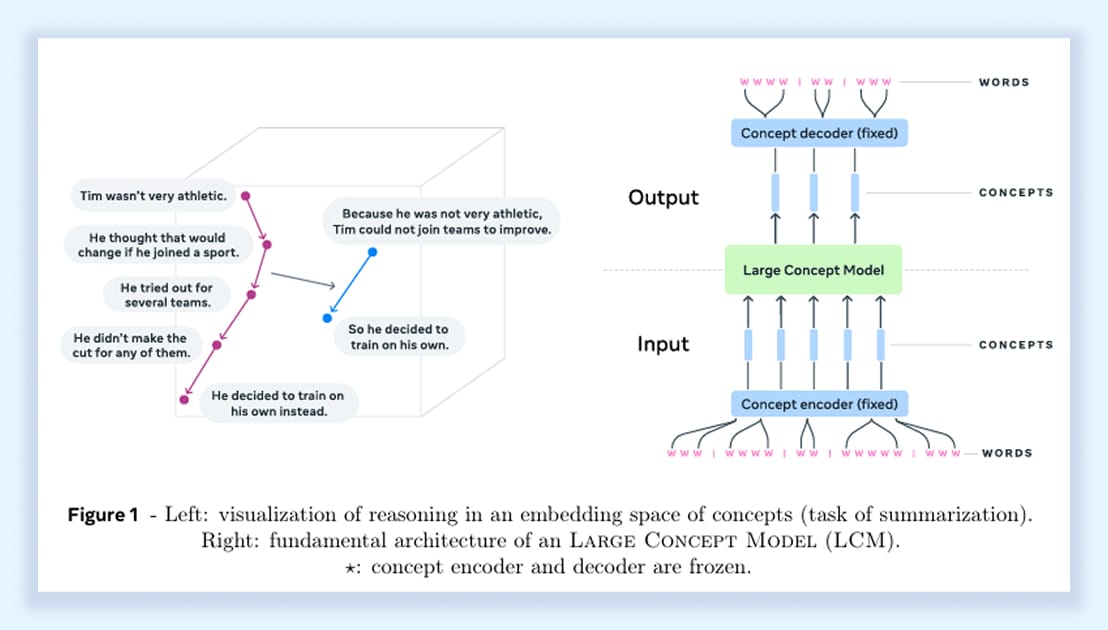

LCM (Large Concept Models)

LCMs represent perhaps the most ambitious architectural direction: moving beyond token-by-token generation to reasoning over abstract concepts and high-level representations.

How It Works:

Concept Embeddings: Representations of abstract ideas beyond tokens

Hierarchical Reasoning: Operate at multiple levels of abstraction

Symbolic Integration: Bridge neural and symbolic reasoning

Compositional Generalization: Recombine concepts in novel ways

The core insight of LCMs is that token-level processing is too granular for true abstract reasoning. By operating on learned concept representations, these models can reason more efficiently about complex, abstract problems. Think of mathematical proofs, scientific reasoning, and strategic planning: domains where high-level conceptual manipulation is more important than surface-level language generation.

While still emerging, LCMs point toward a future where models don't just process language but genuinely manipulate abstract ideas. For agents handling complex knowledge work, this architectural direction could be transformative.

Best For: Abstract reasoning, scientific discovery, mathematical proofs, knowledge synthesis, conceptual problem-solving

Practical Takeaway:

GPT – Generate answers and call tools as per input

MoE – Handle high-volume requests with efficient resource usage

LRM – Complex problem-solving with multi-step reasoning

VLM – Computer-using AI agents and visual task automation

SLM – On-device agents with limited computational resources

LAM – Autonomous task execution and workflow automation

HRM – Goal-driven agents needing internal planning without verbose outputs

LCM – Agents requiring high-level conceptual reasoning and coherence across languages and modalities

mHC – Long-horizon agents with better reasoning but higher cost

World Models – Still largely theoretical, but aimed at agents that can navigate real-world environments

What's Next: The 2025-2026 Trajectory

Models are improving at a rapid pace, and I would suggest you use the same strategy that most AI companies are using: “Don’t depend on a model - solve a use case or a problem.”

In the coming years, these models will keep improving until their quality and capabilities converge. When we reach that point, the advantage won't come from a more powerful model but from a more powerful strategy for solving the problem.

Thanks for reading.

— Rakesh