Hi everyone! 👋

This week marks a turning point: we're no longer asking whether to use RAG, we're asking which RAG architecture fits our use case.

As agentic systems mature, one-size-fits-all retrieval is dead. RAG architectures are now splitting into specialized patterns, each optimized for accuracy, reasoning depth, relationship understanding, or speed.

This newsletter breaks down the 10 core RAG architectures shaping 2026 and provides a practical framework for selecting the right one.

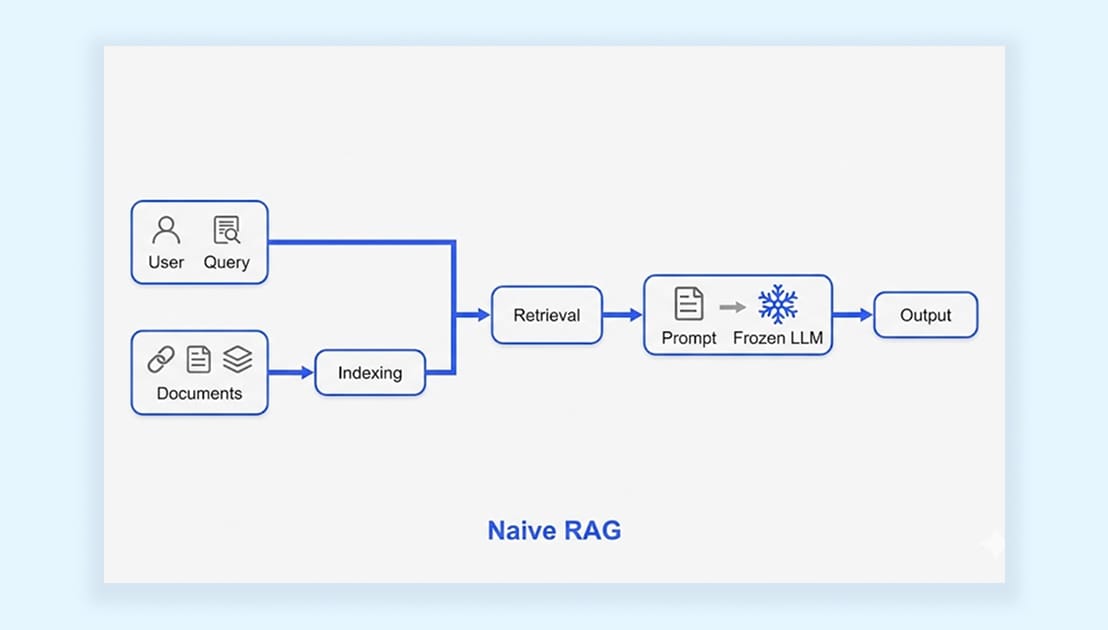

1. Naive RAG

The foundational RAG implementation where queries are embedded into a vector space, matched against document embeddings using cosine similarity, and top chunks are retrieved to augment the LLM's context.

How It Works:

Embedding Generation: Convert queries and documents into dense vector embeddings using models like OpenAI's text-embedding-3 family or open-source alternatives

Semantic Search: Perform vector similarity search in the database to retrieve top-k chunks based on cosine similarity scores

Context Augmentation: Combine retrieved context with the original query into a structured prompt template

LLM Generation: Feed the augmented prompt to the language model to generate the final response
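
The four steps above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: `embed` is a toy bag-of-words stand-in for a real embedding model, the sample chunks are made up, and the final LLM call is left out, so the sketch stops at the augmented prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model (assumption): word counts, not dense vectors.
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    # Rank every chunk by cosine similarity to the query and keep the top k.
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    # Structured prompt template combining retrieved context with the original query.
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only the context below.\n\nContext:\n{joined}\n\nQuestion: {query}"


# Hypothetical knowledge-base chunks.
chunks = [
    "Refunds are issued within 14 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes 3 to 5 business days.",
]
query = "How many days do refunds take?"
top = retrieve(query, chunks, k=1)
prompt = build_prompt(query, top)  # this string would be sent to the LLM
```

Swapping `embed` for a real embedding model and `chunks` for a vector database changes the plumbing but not the shape of the pipeline.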

Key Strengths:

Grounds Responses: Revolutionized LLM reliability by anchoring outputs in external knowledge, dramatically reducing hallucinations

Fast Deployment: Simple architecture enables production deployment within hours with minimal infrastructure

Limitations:

Vector Similarity Dependency: Relies entirely on semantic similarity, which doesn't always correlate with actual relevance or answer quality

No Self-Correction: Treats each retrieval as independent and final, with no mechanism to recognize failures or refine the approach

Use Cases:

Internal documentation search and employee knowledge bases

Simple Q&A systems where questions map directly to document content

2. Graph RAG

Builds knowledge graphs from document collections, enabling relationship-aware retrieval by understanding how entities, concepts, and information connect across datasets. Developed by Microsoft Research, it addresses what vector RAG fundamentally cannot: connecting disparate information and understanding holistic semantic concepts across entire corpora.

How It Works:

Graph Construction: LLMs systematically extract entities, relationships, and factual claims from the entire text corpus to build comprehensive knowledge graphs

Community Detection: Machine learning algorithms identify semantic clusters and hierarchies within graphs, generating multi-level summaries from granular facts to high-level themes

Relationship Traversal: Queries traverse relationship paths to find contextually relevant information beyond simple semantic similarity matching

Synthesis: The graph structure itself informs comprehensive answer generation, connecting information across multiple documents and entity relationships
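
To make the traversal step concrete, here is a small sketch under stated assumptions: the triples are hypothetical stand-ins for what an LLM extraction pass might produce, the graph is a plain adjacency dict, and community detection and hierarchical summaries are deliberately left out.

```python
from collections import deque

# Hypothetical (subject, relation, object) triples from an extraction step.
triples = [
    ("Case A", "cites", "Case B"),
    ("Case B", "cites", "Case C"),
    ("Case C", "interprets", "Statute X"),
    ("Case D", "cites", "Case E"),
]


def build_graph(triples):
    # Adjacency list: node -> list of (relation, neighbor).
    graph = {}
    for subj, rel, obj in triples:
        graph.setdefault(subj, []).append((rel, obj))
        graph.setdefault(obj, [])
    return graph


def traverse(graph, start, max_hops=2):
    # Breadth-first walk that collects every fact reachable within max_hops edges.
    seen = {start}
    queue = deque([(start, 0)])
    facts = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue
        for rel, neighbor in graph.get(node, []):
            facts.append(f"{node} {rel} {neighbor}")
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, depth + 1))
    return facts


graph = build_graph(triples)
facts = traverse(graph, "Case A", max_hops=3)
```

The collected facts connect Case A to Statute X across three hops, a link that chunk-level similarity search over any single document would not surface on its own.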

Key Strengths:

Global Understanding: Enables answering questions about themes and patterns invisible to chunk-level similarity search; excels at dataset-wide analysis

Multi-Hop Reasoning: Naturally handles queries requiring relationship traversal across multiple connected entities and concepts

Limitations:

High Upfront Cost: Requires substantial investment in graph construction, sophisticated entity extraction, and community detection algorithms

Complex Indexing: The indexing phase is considerably more expensive than simple embedding generation with hierarchical summary requirements

Use Cases:

Legal documents with case law citations and precedent networks

Scientific research with paper reference networks and citation graphs

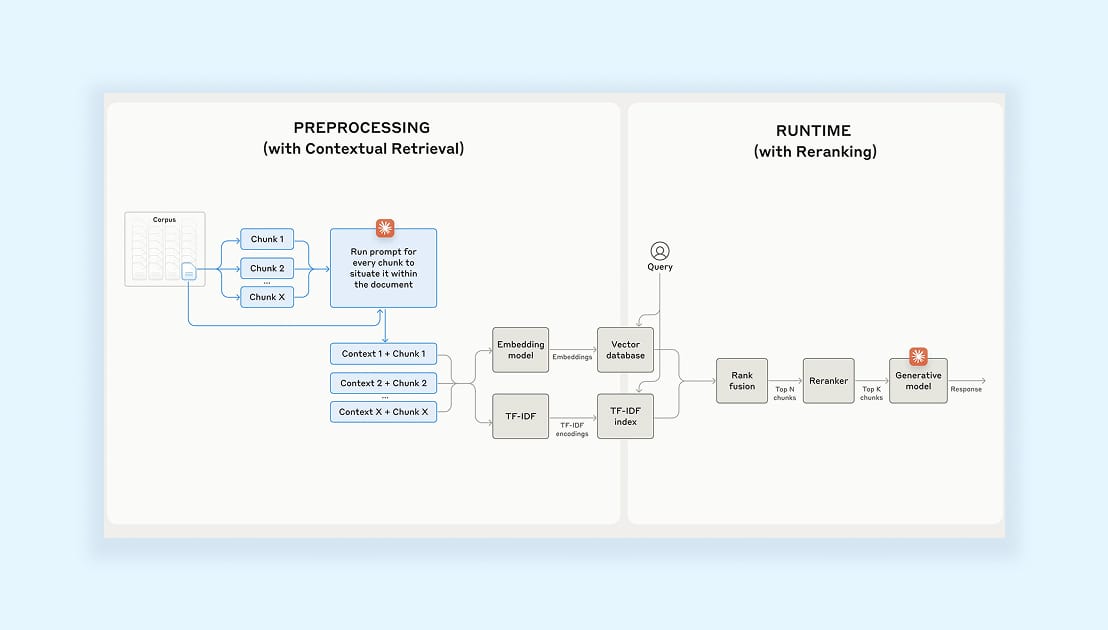

3. Hybrid RAG

A production-grade approach combining multiple retrieval strategies, typically vector-based semantic search and lexical keyword search (BM25/TF-IDF), to deliver robust results across diverse query types. Hybrid pipelines often outperform single-method approaches in both precision and recall across real-world query distributions.

How It Works:

Parallel Retrieval: Execute vector search for semantic understanding and keyword search for exact terminology matching simultaneously

Multi-Source Integration: Integrate results from vector databases, traditional search engines, and optionally graph traversal systems in parallel

Intelligent Fusion: Apply ranking and fusion algorithms (RRF, weighted scoring) to merge and re-rank results based on confidence scores

Enriched Context: Provide the LLM with context that captures both semantic meaning and exact terminology for comprehensive answer generation
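
The fusion step is the least obvious part, so here is a minimal sketch of Reciprocal Rank Fusion (RRF). The two ranked lists are hypothetical outputs of a vector search and a keyword search, and `k=60` is a commonly used smoothing constant, taken here as an assumption rather than a tuned value.

```python
def rrf(rankings, k=60):
    # Each document scores 1 / (k + rank) in every list it appears in; scores are summed.
    scores = {}
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] = scores.get(doc, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by document id for determinism.
    return sorted(scores, key=lambda doc: (-scores[doc], doc))


vector_hits = ["doc_a", "doc_b", "doc_c"]   # hypothetical semantic search results
keyword_hits = ["doc_c", "doc_a", "doc_d"]  # hypothetical BM25 results
fused = rrf([vector_hits, keyword_hits])
```

RRF only needs ranks, not raw scores, which is why it is a convenient default when the two retrievers produce scores on incompatible scales.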

Key Strengths:

Query Robustness: Vector search excels at semantic matches while keyword search catches exact terminology, dramatically reducing false positives/negatives

Production Standard: Handles mixed query types combining technical and natural language, now standard for enterprise deployments

Limitations:

Infrastructure Overhead: Increased complexity in managing parallel systems, multiple databases, and sophisticated fusion logic

Resource Requirements: Higher computational costs for running multiple retrieval methods and ranking algorithms simultaneously

Use Cases:

Production systems handling mixed query types (technical + natural language)

High-accuracy enterprise applications where retrieval errors are costly

4. HyDE (Hypothetical Document Embeddings)

An approach that searches using what the answer might look like rather than the question itself, bridging the semantic gap by generating hypothetical answers as search queries. This addresses a critical mismatch: questions and their answers inhabit remarkably different regions of embedding space, causing retrieval failures even when relevant documents exist.

How It Works:

Hypothetical Generation: Accept the user query and use an LLM to generate a plausible hypothetical answer (hallucinations are acceptable at this stage)

Answer-Space Embedding: Create vector embeddings from hypothetical answers rather than from the original question

Semantic Matching: Search for real documents semantically similar to the hypothetical answer in "answer-space" rather than "question-space"

Final Generation: Use actual retrieved context (not hypothetical) to generate the real answer with grounded information
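
A toy example helps show why searching with a hypothetical answer can beat searching with the question. Everything here is an assumption made for illustration: `embed` is a bag-of-words stand-in for a real embedding model, `fake_llm_hypothetical_answer` returns a hard-coded string where a real system would call an LLM, and the two documents are invented.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy embedding (assumption): word counts instead of dense vectors.
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def fake_llm_hypothetical_answer(query: str) -> str:
    # Placeholder for the LLM call; the content may be wrong, only its shape matters.
    return "The service restarts because the container exceeds its memory limit and is killed."


def best_match(text: str, docs: list[str]) -> str:
    vec = embed(text)
    return max(docs, key=lambda d: cosine(vec, embed(d)))


def hyde_retrieve(query: str, docs: list[str], generate) -> str:
    # Embed the hypothetical answer, not the question, and search with that.
    return best_match(generate(query), docs)


docs = [
    "Containers that exceed their memory limit are killed by the kernel and restarted.",
    "Why choose our service? Customers keep coming back for the friendly support.",
]
query = "Why does my service keep crashing?"
direct = best_match(query, docs)                                     # question-space search
via_hyde = hyde_retrieve(query, docs, fake_llm_hypothetical_answer)  # answer-space search
```

In this sketch the question shares surface words with the marketing blurb, so direct search picks the wrong document, while the hypothetical answer lands on the memory-limit document. The final answer would still be generated from the retrieved document, never from the hypothetical text.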

Key Strengths:

Semantic Gap Solution: Dramatically improves retrieval for complex technical queries where question formulation differs significantly from answer content

Answer-Space Matching: Matches what-good-answers-look-like to actual-good-answers rather than vague questions to precise content

Limitations:

Added Latency: The extra generation call adds latency (roughly 1-2 seconds) and can effectively double token costs for the retrieval phase

Increased Complexity: More moving parts and potential failure points in the retrieval pipeline

Use Cases:

Complex technical queries with significant question-answer semantic gaps

Scenarios where retrieval quality is paramount and justifies added latency/cost

5. Contextual RAG

An architecture ensuring every document chunk carries surrounding contextual scaffolding by augmenting chunks with document-level, section-level, and positional metadata to prevent information loss during chunking. Traditional fixed-size chunking breaks documents apart, losing the broader contextual framework that gives those pieces their actual meaning and creating ambiguous pronoun references.

How It Works:

Contextual Analysis: LLMs analyze each chunk's position within the broader document structure and understand its semantic role

Context Enrichment: Add document-level context, section-level context, and positional metadata to each chunk

Context-Aware Embeddings: Create vector embeddings encoding not just content but contextual position and semantic role

Preserved Context Retrieval: Retrieve chunks while maintaining full understanding of their original placement and meaning in source documents
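
The enrichment step can be sketched as follows. This is a simplified, template-based version: in the approach described above an LLM would write the context for each chunk, whereas here a fixed header is built from metadata. The `Chunk` fields and the sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_title: str
    section: str
    position: int  # 1-based index of the chunk within its document
    text: str


def contextualize(chunk: Chunk, total: int) -> str:
    # Prepend document, section, and positional context so the chunk is
    # understandable (and embeddable) on its own.
    header = (
        f"[Document: {chunk.doc_title} | Section: {chunk.section} "
        f"| Chunk {chunk.position} of {total}]"
    )
    return f"{header}\n{chunk.text}"


chunk = Chunk("Employee Handbook", "Leave Policy", 3, "It resets every January.")
enriched = contextualize(chunk, total=12)  # this string is what gets embedded
```

On its own, "It resets every January." is ambiguous. With the header attached, both the embedding and the LLM can tell that "it" refers to something in the leave policy.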

Key Strengths:

Disambiguation: Solves ambiguous references ("the system," "this approach") by enriching chunks with contextual scaffolding from the original document

Cross-Reference Handling: Excels with long documents where pronouns and references span multiple pages or sections

Limitations:

Storage Overhead: Increased storage requirements for contextual metadata attached to every chunk

Pipeline Complexity: Moderate complexity in context enrichment pipeline requiring LLM-powered analysis during indexing

Use Cases:

Regulatory compliance systems requiring absolute precision and disambiguation

Medical records with complex patient histories and temporal references

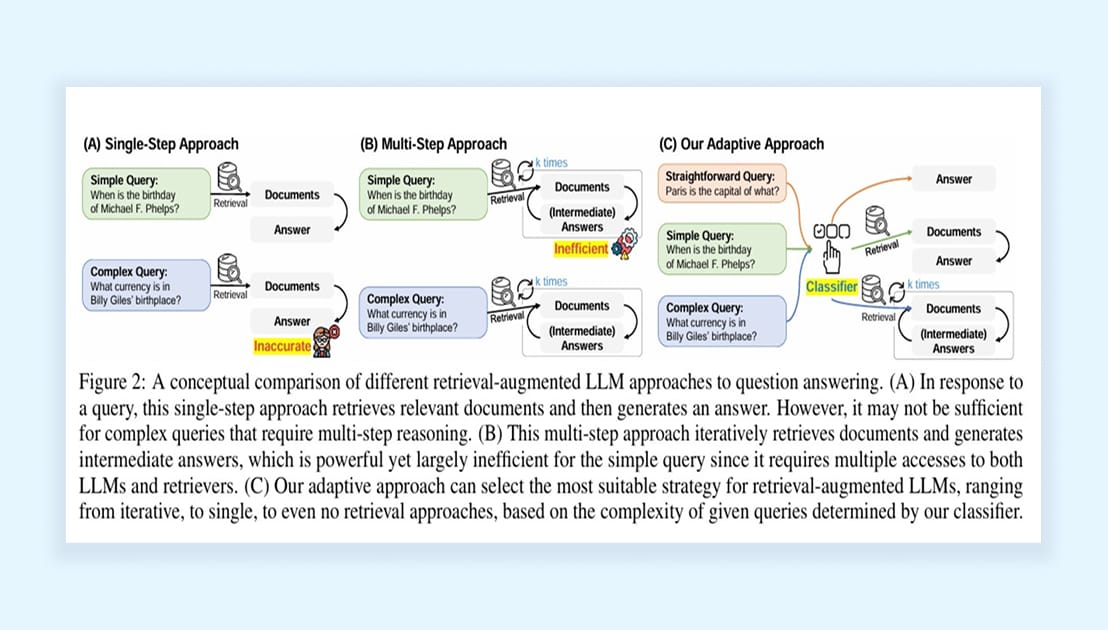

6. Adaptive RAG

An intelligent system that analyzes each query's complexity and dynamically selects the optimal retrieval strategy, matching computational investment to actual requirements rather than forcing all queries through identical pipelines. This acknowledges that production queries are profoundly heterogeneous, ranging from simple lookups to complex analytical research requiring multi-step reasoning.

How It Works:

Query Classification: Sophisticated classifiers analyze complexity, semantic type, and domain requirements using embedding classification, pattern matching, or LLM analysis

Dynamic Strategy Selection: Choose between single-step direct retrieval for simple queries or multi-step iterative reasoning for complex analytical questions

Resource Optimization: Simple queries ("What is our refund policy?") route to fast paths (around 200 ms) while complex queries engage sophisticated workflows

Strategy-Optimized Retrieval: Gather context using techniques specifically optimized for the chosen complexity tier and query type
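
A routing layer like this can start very small. The sketch below uses simple pattern matching as the classifier; the cue words, the length threshold, and the two handler functions are all assumptions chosen for illustration, and a real system might use an embedding classifier or an LLM instead.

```python
import re

# Hypothetical cue words that suggest multi-step analysis.
COMPLEX_CUES = {"compare", "why", "trend", "analyze", "versus"}


def classify(query: str) -> str:
    # Pattern-matching classifier: long queries or analytical cue words count as complex.
    words = re.findall(r"\w+", query.lower())
    if len(words) > 12 or COMPLEX_CUES.intersection(words):
        return "complex"
    return "simple"


def single_step(query: str) -> str:
    return f"[fast path] top-k lookup for: {query}"


def multi_step(query: str) -> str:
    return f"[deep path] decompose, retrieve iteratively, then synthesize: {query}"


ROUTES = {"simple": single_step, "complex": multi_step}


def answer(query: str) -> str:
    # Route each query to the strategy that matches its complexity tier.
    return ROUTES[classify(query)](query)


fast = answer("What is our refund policy?")
deep = answer("Compare Q3 and Q4 churn")
```

Misclassification is the main risk noted below, so it is worth logging the chosen route per query and reviewing a sample regularly.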

Key Strengths:

Resource Efficiency: Eliminates the waste of over-engineering simple queries and underserving complex ones by allocating resources to match each query

Optimal Trade-offs: Balances latency (for simple queries) and accuracy (for complex queries) based on actual needs

Limitations:

Classification Infrastructure: Requires a sophisticated query classification system and multiple retrieval paths to maintain

Routing Complexity: Complex routing logic managing different strategies and potential misclassification risks

Use Cases:

Applications with highly variable complexity requiring both speed and depth

Cost-optimization initiatives at enterprise scale where resource efficiency matters

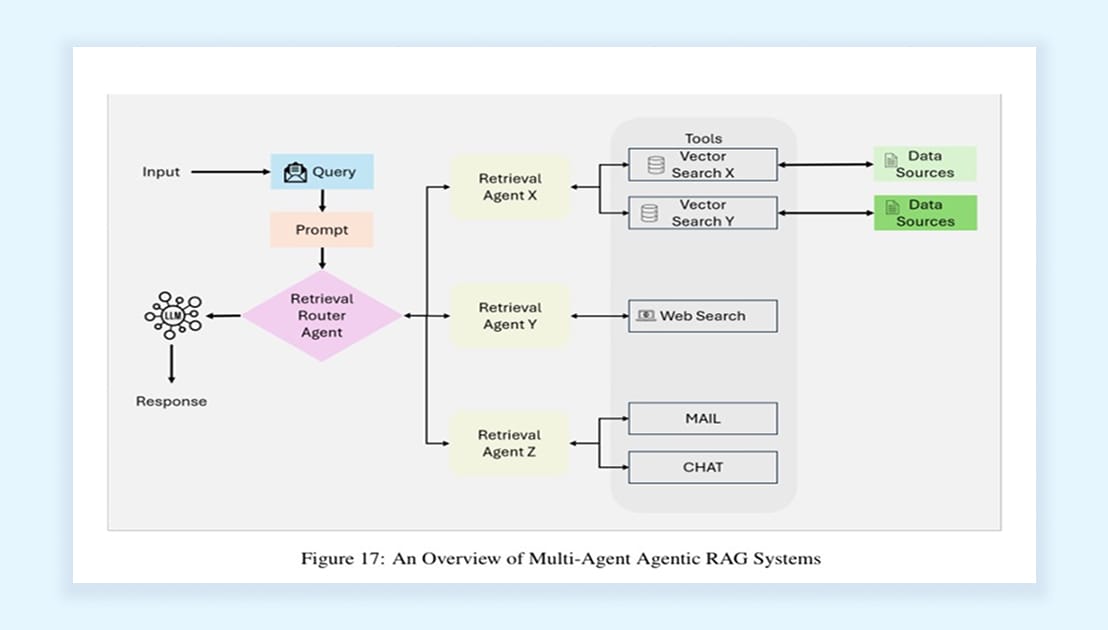

7. Agentic RAG

The most sophisticated evolution where RAG transcends simple retrieval-generation to become genuine autonomous intelligence, orchestrating multiple specialized agents, diverse tools, and heterogeneous data sources to handle complex multi-step workflows requiring true reasoning. This represents RAG evolving into autonomous decision-making systems capable of planning, executing, and refining strategies across complex task landscapes.

How It Works:

Agent Orchestration: Central orchestrator using ReAct (Reasoning + Acting) or Chain-of-Thought planning decomposes complex tasks into actionable steps

Memory Systems: Short-term memory maintains conversation context and task state; long-term memory stores historical patterns and learned behaviors

Multi-Agent Coordination: Specialized agents handle local data (documents, SQL, files), external intelligence (web, APIs), and cloud integrations (AWS, Azure, GCP)

Iterative Refinement: System actively reasons, takes actions in external systems, and iteratively refines searches until achieving optimal quality
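
The reason-act-observe loop at the heart of this pattern can be sketched without any framework. In this illustration the planner is a scripted function standing in for an LLM's reasoning step, and the two tools return canned strings; all names and data are hypothetical.

```python
def search_tickets(arg: str) -> str:
    # Hypothetical tool: would query a ticketing system.
    return "Ticket 101: checkout errors after deploy 42"


def search_logs(arg: str) -> str:
    # Hypothetical tool: would query a log store.
    return "deploy 42: payment timeout raised 350 times"


TOOLS = {"tickets": search_tickets, "logs": search_logs}


def scripted_planner(question: str, observations: list[str]):
    # Stand-in for the LLM: decides the next action from what has been observed so far.
    if not observations:
        return ("tickets", question)
    if len(observations) == 1:
        return ("logs", "deploy 42")
    return ("finish", " | ".join(observations))


def run_agent(question: str, planner, tools, max_steps: int = 5) -> str:
    observations = []  # short-term memory for this task
    for _ in range(max_steps):
        action, arg = planner(question, observations)
        if action == "finish":
            return arg
        observations.append(tools[action](arg))  # act, then record the observation
    return " | ".join(observations)  # step budget exhausted: return what was gathered


result = run_agent("Why are checkouts failing?", scripted_planner, TOOLS)
```

The `max_steps` cap matters in practice: it is the simplest guard against the unpredictable cost and latency listed under Limitations.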

Key Strengths:

Autonomous Intelligence: Doesn't merely retrieve, but actively thinks, plans strategically, takes actions, and refines iteratively with full reasoning capability

Complex Workflows: Orchestrates sophisticated multi-source research: query databases, retrieve logs, search news, cross-reference tickets, synthesize findings

Limitations:

High Complexity: Complex debugging, substantial computational overhead, and sophisticated agent orchestration infrastructure required

Unpredictable Costs: Multiple LLM calls, tool invocations, and iterative refinement can make costs and latency unpredictable

Use Cases:

Complex enterprise workflows requiring cross-system coordination and multi-source synthesis

Autonomous research and strategic decision support systems

Financial analysis platforms conducting multi-stage investment research

8. Self-RAG (Self-Reflective RAG)

A self-correcting architecture that doesn't blindly trust its own retrieval and generation. Instead, it actively critiques and refines its outputs through learned reflection tokens. Self-RAG introduces on-demand retrieval decisions and multi-stage self-evaluation, determining when retrieval is necessary, assessing retrieved relevance, and validating generated responses for factual accuracy before delivering answers.

How It Works:

Adaptive Retrieval Decisions: Model learns to predict when external retrieval is actually needed versus answering from parametric knowledge alone

Retrieval Evaluation: Special "critic" tokens assess whether retrieved passages are relevant to the query before proceeding to generation

Generation with Reflection: The system generates candidate answers while simultaneously producing self-assessment tokens evaluating factual support

Iterative Refinement: If self-critique detects low confidence or factual inconsistency, the system retrieves additional context or regenerates responses
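
The control flow can be sketched as below, with one important caveat: Self-RAG as described relies on a model trained to emit reflection tokens, while this sketch swaps in plain Python functions for each judgment. The stub functions and sample passages are hypothetical and only illustrate the order of decisions.

```python
def self_rag(query, retrieve, generate, needs_retrieval, is_relevant, is_supported, max_rounds=2):
    # 1. Decide whether retrieval is needed at all.
    if not needs_retrieval(query):
        return generate(query, [])
    for round_no in range(max_rounds):
        # 2. Retrieve, then keep only passages judged relevant.
        passages = [p for p in retrieve(query, round_no) if is_relevant(query, p)]
        # 3. Generate and check that the answer is supported by the passages.
        answer = generate(query, passages)
        if passages and is_supported(answer, passages):
            return answer
        # 4. Otherwise loop: retrieve again and regenerate.
    return "I could not find supporting evidence."


# Hypothetical stubs standing in for the learned critique behavior.
def needs_retrieval(query):
    return "refund" in query.lower()


def retrieve(query, round_no):
    rounds = [["Office hours are 9 to 5."], ["Refunds are issued within 14 days."]]
    return rounds[round_no] if round_no < len(rounds) else []


def is_relevant(query, passage):
    return "refund" in passage.lower()


def generate(query, passages):
    return passages[0] if passages else "(answered from model knowledge)"


def is_supported(answer, passages):
    return answer in passages


grounded = self_rag("What is the refund window?", retrieve, generate,
                    needs_retrieval, is_relevant, is_supported)
direct = self_rag("What is 2 + 2?", retrieve, generate,
                  needs_retrieval, is_relevant, is_supported)
```

The first retrieval round returns an irrelevant passage, so the loop retrieves again before answering. The second query skips retrieval entirely.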

Key Strengths:

Self-Correction: Actively identifies and corrects its own retrieval failures and hallucinations through learned reflection mechanisms

Retrieval Efficiency: Retrieves only when necessary, saving costs on queries answerable from model knowledge without external context

Limitations:

Training Complexity: Requires specialized training with reflection tokens and critique data that can't simply plug into existing RAG pipelines

Latency Overhead: Multiple reflection and evaluation steps add computational cost and response time compared to single-pass generation

Use Cases:

High-stakes applications where factual accuracy is critical (medical, legal, financial)

Systems requiring explainable confidence scores and self-assessment

9. Modular RAG

A flexible architecture treating RAG as composable building blocks rather than a fixed pipeline, enabling teams to mix and match specialized modules for indexing, retrieval, generation, and orchestration based on evolving requirements. Modular RAG acknowledges that production systems need adaptability: the ability to swap retrieval strategies, add new data sources, or upgrade components without rebuilding entire pipelines.

How It Works:

Modular Indexing: Independent modules handle chunking strategies, embedding models, metadata extraction, and index construction, swappable without downstream changes

Pluggable Retrieval: Separate retrieval modules (vector search, keyword search, graph traversal, SQL queries) operate as interchangeable components

Flexible Generation: Generation modules can be upgraded (different LLMs, prompt strategies, output formats) without touching the retrieval infrastructure

Orchestration Layer: Central coordinator manages data flow between modules, enabling complex workflows like pre-retrieval query rewriting and post-retrieval reranking
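
One way to picture the module boundaries is a shared interface plus a thin orchestrator. The sketch below is an illustration under stated assumptions: the `Retriever` protocol, both retriever classes, and the lambda modules are hypothetical names, not part of any particular framework.

```python
from typing import Callable, Protocol


class Retriever(Protocol):
    def retrieve(self, query: str) -> list[str]: ...


class KeywordRetriever:
    def __init__(self, docs: list[str]) -> None:
        self.docs = docs

    def retrieve(self, query: str) -> list[str]:
        # Return documents sharing at least one word with the query.
        terms = set(query.lower().split())
        return [d for d in self.docs if terms & set(d.lower().split())]


class StaticRetriever:
    # A second module with the same interface, e.g. a cache or SQL-backed source.
    def __init__(self, hits: list[str]) -> None:
        self.hits = hits

    def retrieve(self, query: str) -> list[str]:
        return list(self.hits)


class Pipeline:
    # Orchestration layer: rewrite -> retrieve -> rerank -> generate.
    def __init__(self, rewrite: Callable, retriever: Retriever, rerank: Callable, generate: Callable):
        self.rewrite, self.retriever = rewrite, retriever
        self.rerank, self.generate = rerank, generate

    def run(self, query: str) -> str:
        rewritten = self.rewrite(query)
        hits = self.rerank(rewritten, self.retriever.retrieve(rewritten))
        return self.generate(rewritten, hits)


docs = ["reset your password from the login page", "invoices are emailed monthly"]
pipeline = Pipeline(
    rewrite=lambda q: q.lower().rstrip("?"),                      # pre-retrieval rewriting
    retriever=KeywordRetriever(docs),
    rerank=lambda q, hits: sorted(hits, key=len),                 # placeholder reranker
    generate=lambda q, hits: hits[0] if hits else "No context found.",
)
first = pipeline.run("How do I reset my password?")

# Swap the retrieval module without touching any other stage.
pipeline.retriever = StaticRetriever(["cached answer: use the reset link"])
second = pipeline.run("How do I reset my password?")
```

Because every stage sits behind a small interface, A/B testing a new retriever or reranker comes down to constructing a second `Pipeline` with one argument changed.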

Key Strengths:

Adaptability: Swap embedding models, add new retrieval methods, or upgrade LLMs without architectural rewrites, critical for the fast-moving AI landscape

Experimentation-Friendly: A/B test different retrieval strategies, chunking approaches, or ranking algorithms independently on production traffic

Limitations:

Engineering Overhead: Requires well-defined interfaces, version compatibility management, and more sophisticated orchestration logic

Integration Complexity: More moving parts mean more potential integration issues and debugging challenges across module boundaries

Use Cases:

Production systems requiring frequent experimentation and component upgrades

Enterprise platforms serving multiple use cases with different retrieval needs

10. Agentic Graph RAG

The convergence of three powerful paradigms (autonomous agent reasoning, structured knowledge graphs, and retrieval augmentation) creates systems that don't just traverse graphs but strategically explore them using agent-driven planning. Unlike static Graph RAG, Agentic Graph RAG employs intelligent agents that formulate exploration strategies, decide which graph paths to follow, and dynamically adjust their search based on intermediate findings.

How It Works:

Agent-Driven Graph Exploration: Autonomous agents analyze queries and formulate strategic graph traversal plans rather than executing predefined paths

Dynamic Path Selection: Agents decide which entities, relationships, and graph neighborhoods to explore based on relevance signals and reasoning chains

Multi-Hop Reasoning: Intelligently traverse complex relationship paths across multiple hops, backtracking or branching based on information quality

Synthesis and Validation: Agents collect information across graph traversals, synthesize findings from multiple paths, and validate consistency before answer generation
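
The difference from plain graph traversal is that something decides which edge to follow next. The sketch below approximates that with best-first search under a fixed exploration budget. The `relevance` function is a crude stand-in for the agent's judgment, and the graph, relation names, and budget are hypothetical.

```python
import heapq

# Hypothetical entity graph: node -> list of (relation, neighbor).
graph = {
    "Account 1": [("transfers_to", "Shell Co"), ("owned_by", "Alice")],
    "Shell Co": [("owned_by", "Bob"), ("transfers_to", "Account 9")],
    "Alice": [("lives_in", "Paris")],
    "Bob": [],
    "Account 9": [("owned_by", "Bob")],
    "Paris": [],
}


def relevance(query_relations, relation):
    # Stand-in for the agent's reasoning: prefer edges the query cares about.
    return 1.0 if relation in query_relations else 0.1


def explore(graph, start, query_relations, budget=3):
    # Best-first exploration: always expand the most promising frontier node,
    # and stop once `budget` nodes (including the start) have been expanded.
    frontier = [(-1.0, start, [])]  # (negated priority, node, path taken to reach it)
    visited, paths = set(), []
    while frontier and budget > 0:
        _, node, path = heapq.heappop(frontier)
        if node in visited:
            continue
        visited.add(node)
        budget -= 1
        if path:
            paths.append(path)
        for rel, neighbor in graph.get(node, []):
            priority = -relevance(query_relations, rel)
            heapq.heappush(frontier, (priority, neighbor, path + [(node, rel, neighbor)]))
    return paths


paths = explore(graph, "Account 1", {"transfers_to"}, budget=3)
```

With a budget of three, the search follows the transaction chain from Account 1 through Shell Co to Account 9 and never spends effort on the ownership branch through Alice. A real agent would also be able to backtrack, revise its priorities, and validate findings across paths.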

Key Strengths:

Strategic Exploration: Doesn't blindly follow all graph paths; instead prioritizes exploration based on query understanding and intermediate findings

Complex Reasoning: Handles sophisticated multi-hop questions requiring strategic graph navigation and cross-referencing multiple entity relationships

Limitations:

Computational Intensity: Agent planning, multiple graph queries, and iterative exploration create significant computational overhead

Unpredictable Costs: Agent-driven exploration can lead to variable numbers of graph queries and LLM calls, making cost and latency unpredictable

Use Cases:

Complex investigative queries requiring strategic exploration of relationship networks

Financial fraud detection following transaction chains and entity ownership structures

What's Next: The 2026-2027 Trajectory

The retrieval landscape is fragmenting, and that's the point. RAG is not a single solution that fits every use case; it is a family of architectures, each suited to particular use cases.

While agentic systems have moved from research to enterprise, building a capable agentic RAG system still depends on foundational practices.

But LLMs now ship with massive context windows (over 1M tokens). Will they replace RAG?

The counterintuitive reality: 1M+ token windows won't replace RAG. They make targeted retrieval more valuable. Throwing entire knowledge bases into context is wasteful and less accurate than strategic retrieval, and RAG avoids exactly that.

Retrieval itself is also changing. Coding agents and Copilot-style assistants have long used embedding similarity to retrieve related code and docs.

Now terminal agents are starting to use grep/regex search for faster retrieval. We'll talk more about this in our next newsletter.

Additional Resources🙂

OpenAI RAG Best Practices: https://platform.openai.com/docs/guides/retrieval-augmented-generation

LangChain RAG Tutorials: https://python.langchain.com/docs/tutorials/rag/

LlamaIndex Documentation: https://docs.llamaindex.ai/

Hugging Face RAG Guide: https://huggingface.co/docs/transformers/model_doc/rag

Vector Databases:

Pinecone: https://www.pinecone.io/

Weaviate: https://weaviate.io/

Qdrant: https://qdrant.tech/

Chroma: https://www.trychroma.com/

Evaluation Frameworks:

RAGAS: https://docs.ragas.io/

TruLens: https://www.trulens.org/

DeepEval: https://docs.confident-ai.com/

Thanks for reading.

— Rakesh’s Newsletter