Hey there 👋

Last week I went back through engineering blogs, case studies, and system design docs from teams operating at scale to see how production-grade AI agents are actually implemented.

Here’s what I found.👇

American Express: IT Support & Travel Agents

What they built: Conversational AI agents that handle IT support tickets and travel assistance requests.

How they did it:

Trained on historical support interactions and company policies

Integrated with existing ticketing systems and travel databases

Built escalation logic to route complex cases to humans

What matters: 40% fewer IT escalations and an 85% increase in travel assistance efficiency. The win wasn't about handling every case but about filtering out the noise so experts could focus on what truly required their expertise.
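To make the escalation idea concrete, here is a minimal sketch of routing logic. It is not American Express's implementation; the threshold, the category names, and the `Ticket` fields are all assumptions picked for illustration.

```python
from dataclasses import dataclass

# Hypothetical values, chosen only for illustration.
CONFIDENCE_FLOOR = 0.75
ALWAYS_HUMAN = {"security_incident", "payment_dispute"}
MAX_AGENT_ATTEMPTS = 2


@dataclass
class Ticket:
    category: str
    agent_confidence: float   # agent's self-reported confidence, 0.0 to 1.0
    failed_attempts: int = 0  # how many times the agent already tried


def route(ticket: Ticket) -> str:
    """Return "agent" or "human" for a ticket."""
    if ticket.category in ALWAYS_HUMAN:
        return "human"  # some categories never go to the agent
    if ticket.agent_confidence < CONFIDENCE_FLOOR:
        return "human"  # the agent is unsure, so do not guess
    if ticket.failed_attempts >= MAX_AGENT_ATTEMPTS:
        return "human"  # stop looping on a case the agent cannot close
    return "agent"
```

For example, `route(Ticket("password_reset", 0.9))` stays with the agent, while the same ticket with `failed_attempts=2` goes to a person.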

Uber: Finch for Financial Data

What they built: A natural language to SQL agent called Finch that lets finance teams query data without writing code.

How they did it:

LLM translates business questions into SQL queries

Connected to Uber's data warehouse

Added guardrails and query validation for safety

What matters: Eliminated dozens of hours per week of manual SQL writing. Finance people now get answers instantly instead of waiting for data engineers.
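A rough sketch of what a query-validation guardrail could look like. This is my own illustration, not Finch's code: the table allowlist and the rules are assumptions, and keyword matching is crude (a production system would more likely use a real SQL parser).

```python
import re

# Hypothetical allowlist; a real deployment would load this from governance metadata.
ALLOWED_TABLES = {"trips", "payments"}
FORBIDDEN = re.compile(
    r"\b(insert|update|delete|drop|alter|create|truncate|grant)\b", re.I
)
TABLE_REF = re.compile(r"\b(?:from|join)\s+([a-zA-Z_][\w.]*)", re.I)


def validate_sql(sql: str) -> list[str]:
    """Return a list of problems; an empty list means the query passed."""
    problems = []
    statement = sql.strip().rstrip(";")
    if ";" in statement:
        problems.append("multiple statements")
    if not statement.lower().startswith("select"):
        problems.append("only SELECT is allowed")
    if FORBIDDEN.search(statement):
        problems.append("write or DDL keyword found")
    for table in TABLE_REF.findall(statement):
        if table.lower() not in ALLOWED_TABLES:
            problems.append(f"table not allowed: {table}")
    return problems
```

The generated SQL only reaches the warehouse when `validate_sql(...)` returns an empty list; otherwise the problems can be fed back to the LLM for another attempt.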

Anthropic: Multi-Agent Research System

What they built: A coordinated system where specialized agents collaborate on research tasks: one plans, one searches, one reads, one synthesizes.

How they did it:

Each sub-agent handles a specific capability

The orchestration layer coordinates the workflow

Built with Claude models for reasoning and tool use

What matters: 90.2% performance improvement and 40% faster completion on research tasks. Breaking complex work into specialized agents beats the single-agent approach.
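The plan / search / read / synthesize split can be sketched as a tiny orchestrator. This is a toy under stated assumptions, not Anthropic's system: every sub-agent below is a stub with a placeholder body, where a real one would wrap a model call with its own prompt and tools.

```python
# Each sub-agent is stubbed as a plain function. The bodies are placeholders.
def plan(question: str) -> list[str]:
    """Planner: break the question into search queries."""
    return [f"{question} background", f"{question} recent results"]


def search(query: str) -> list[str]:
    """Searcher: return candidate documents for one query."""
    return [f"doc about {query}"]


def read(doc: str) -> str:
    """Reader: extract notes from one document."""
    return f"notes from {doc}"


def synthesize(question: str, notes: list[str]) -> str:
    """Synthesizer: combine all notes into a final answer."""
    return f"Answer to '{question}' based on {len(notes)} notes"


def orchestrate(question: str) -> str:
    """Run plan, search, read, synthesize in order and return the answer."""
    notes = []
    for query in plan(question):
        for doc in search(query):
            notes.append(read(doc))
    return synthesize(question, notes)
```

The point of the structure is that each function can be swapped, evaluated, or parallelized on its own without touching the others.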

Dropbox: Dash for Universal Search

What they built: A multi-step RAG agent that searches across 50+ connected apps and platforms.

How they did it:

Semantic search across all data sources

Claude for natural language understanding

Heavy optimization for speed

What matters: Sub-2-second response times on 95%+ of queries. Speed wasn't a nice-to-have; it was the entire product. Nobody uses a search tool that takes 10 seconds to think.
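For readers new to semantic search, here is the core ranking step in miniature: score every document by cosine similarity to the query embedding and keep the top few. The document ids and the 3-dimensional vectors are made up for illustration; real embeddings come from an embedding model, and a system at Dropbox's scale would use an approximate nearest-neighbor index rather than a full scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the query."""
    scored = sorted(
        index.items(),
        key=lambda item: cosine(query_vec, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in scored[:k]]


# Toy index with hypothetical document ids from different connected apps.
INDEX = {
    "slack:launch-thread": [0.9, 0.1, 0.0],
    "gdocs:q3-budget": [0.0, 0.2, 0.9],
    "jira:launch-bug": [0.7, 0.6, 0.1],
}

print(top_k([1.0, 0.0, 0.0], INDEX))
```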

Airtable: Field Agents for Automation

What they built: Agents that automate workflows through natural language instructions, no code required.

How they did it:

Natural language interface for defining automation rules

Agents read, write, and manipulate Airtable data

Integration with their database and API ecosystem

What matters: 100+ hours of work automated in seconds. Business users became automation creators without needing developers.

Salesforce: Text-to-SQL Agents

What they built: An agent that translates natural language questions into SQL queries against Salesforce data.

How they did it:

LLM generates SQL from business questions

Implemented data security and access controls

Query validation before execution

What matters: Self-serve answers in minutes vs. waiting dozens of hours for analyst support. Reduced the bottleneck on routine data requests.
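One way to picture the access-control step is a check that runs between SQL generation and execution. This is a sketch with assumed names: the roles, the grants, and the `execute` callback are hypothetical and say nothing about how Salesforce actually enforces permissions.

```python
# Hypothetical role-to-table grants.
GRANTS = {
    "sales_analyst": {"opportunities", "accounts"},
    "support_lead": {"cases"},
}


class AccessDenied(Exception):
    """Raised when a role asks for a table it has not been granted."""


def authorize(role: str, tables: set[str]) -> None:
    """Raise AccessDenied if the role may not read every requested table."""
    allowed = GRANTS.get(role, set())
    denied = sorted(tables - allowed)
    if denied:
        raise AccessDenied(f"{role} cannot read: {', '.join(denied)}")


def run_generated_query(role, sql, tables, execute):
    """Check permissions first, then hand the SQL to the caller's executor."""
    authorize(role, tables)
    return execute(sql)
```

The design choice worth noting: the check depends on who is asking, not on what the LLM produced, so a clever prompt cannot talk its way past it.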

Intercom: Voice Support Agents

What they built: Voice AI agents that handle customer support phone calls.

How they did it:

Natural language understanding for voice

Integration with knowledge bases and CRM

Seamless handoff to humans when needed

What matters: 56% resolution rate + 5x cost savings per call. The economics work because agents handle volume while humans handle complexity.

DoorDash: Testing & Support Agents

What they built: AI agents for automated testing and customer support on AWS Bedrock.

How they did it:

Agents simulate user flows for testing

Support agents handle order tracking and common issues

Integration with existing infrastructure

What matters: 50x testing capacity increase + 49% fewer transfers to human support + 2.5s response latency. Scaled two bottlenecks simultaneously.

Meta & Aitomatic: Domain-Expert Agents for IC Design

What they built: Domain-Expert Agents (DXA) that captured decades of specialized knowledge for integrated circuit field engineers.

How they did it:

Used Llama 3.1 70B fine-tuned on domain data

Three-phase approach: Capture (documents, expert knowledge) → Train (augment with synthetic scenarios) → Apply (deploy with connected resources)

Neuro-symbolic AI combining neural networks with expert rules

On-premises deployment to protect IP

What matters: 3x faster issue resolution + 75% first-attempt success rate (vs. 15-20% from generic AI). The key was capturing and scaling expert knowledge, not just having a good model. Open-source Llama gave them control over sensitive company data.
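"Neuro-symbolic" can sound abstract, so here is one simple pattern it can take: a model proposes ranked actions and hand-written expert rules veto the ones that contradict known facts. Everything in this sketch is hypothetical (the actions, the scores, the rule); it is not Aitomatic's DXA design, just an illustration of rules filtering model output.

```python
# Neural side, stubbed: a fine-tuned model would return ranked candidates.
def model_candidates(symptom: str) -> list[tuple[str, float]]:
    return [
        ("replace_regulator", 0.62),
        ("reflash_firmware", 0.30),
        ("reseat_connector", 0.08),
    ]


# Symbolic side: hypothetical expert rules as predicates over known facts.
# Each rule returns True when the action is acceptable.
RULES = [
    # Do not recommend swapping the regulator when supply voltage is in spec.
    lambda action, facts: not (
        action == "replace_regulator" and facts["voltage_in_spec"]
    ),
]


def recommend(symptom: str, facts: dict) -> str:
    """Return the highest-ranked model suggestion that passes every rule."""
    for action, _score in model_candidates(symptom):
        if all(rule(action, facts) for rule in RULES):
            return action
    return "escalate_to_expert"
```

With `{"voltage_in_spec": True}` the top model suggestion is vetoed and the second one is returned; with `False` the top suggestion goes through.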

Shopify: Product Attribute Extraction

What they built: Multimodal agents that extract product attributes from images to improve listings and search.

How they did it:

Fine-tuned Llama 2 7B-based LLaVA model on product images

Two agents: real-time merchant assistant + offline catalog optimizer

Used QLoRA for efficient training, then full-precision for accuracy

Deployed on 100 NVIDIA A100 GPUs with LMDeploy

Processes over 10,000 metadata categories

What matters: Tens of billions of tokens processed daily. They moved from a 13B-parameter model to a 7B one for cost, not accuracy; open-source made massive-scale inference economically viable. The result: improved merchant experience, better SEO, and conversational search for customers.
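A back-of-envelope calculation shows why parameter count and precision matter for cost. The function below estimates weight memory only (parameters times bytes per parameter); it ignores activations, KV cache, and optimizer state, so treat the numbers as a floor rather than Shopify's actual footprint. The 4-bit row reflects that QLoRA fine-tunes on top of a 4-bit quantized base model.

```python
def weight_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


for label, params, bits in [
    ("13B at 16-bit", 13, 16),
    ("7B at 16-bit", 7, 16),
    ("7B at 4-bit", 7, 4),
]:
    print(f"{label}: {weight_gb(params, bits)} GB")
```

That works out to 26 GB, 14 GB, and 3.5 GB of weights respectively, which is the difference between needing multiple GPUs per replica and fitting comfortably on one.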

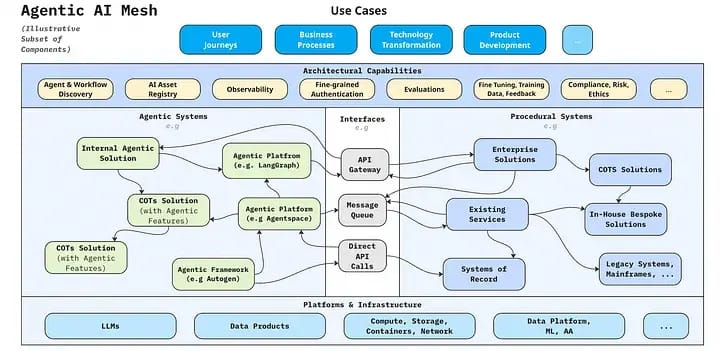

McKinsey: Agentic AI Mesh Architecture

What they built: Enterprise architecture for deploying Gen AI agents across 1000+ teams at scale.

How they did it:

Centralized governance, decentralized execution

Modular agent components that compose for different use cases

Standardized deployment patterns and monitoring

What matters: Deployment time went from months to days. The architecture is the product: it's how you scale agents across an enterprise without chaos.
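"Centralized governance, decentralized execution" can be pictured as a shared registry of approved components that teams compose however they like. The sketch below is my own toy version with made-up component names, not McKinsey's architecture.

```python
# Central registry: only components registered here may be used by teams.
APPROVED = {}


def register(name):
    """Decorator the platform team uses to approve a component."""
    def wrap(fn):
        APPROVED[name] = fn
        return fn
    return wrap


@register("redact_pii")
def redact_pii(text: str) -> str:
    # Placeholder redaction, for illustration only.
    return text.replace("@", "[at]")


@register("summarize")
def summarize(text: str) -> str:
    # Placeholder summary: truncate to 40 characters.
    return text[:40]


def compose(*names):
    """Build an agent from approved components; reject unknown ones."""
    missing = [n for n in names if n not in APPROVED]
    if missing:
        raise KeyError(f"not approved: {missing}")
    steps = [APPROVED[n] for n in names]

    def agent(text: str) -> str:
        for step in steps:
            text = step(text)
        return text

    return agent
```

A team builds its own agent with `compose("redact_pii", "summarize")`, while anything outside the registry fails at composition time instead of in production.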

LinkedIn: Hiring Assistant

What they built: An agent that automates recruiting tasks: candidate sourcing, screening, and outreach.

How they did it:

Built on LinkedIn's Gen AI tech stack

Natural language interface for recruiter instructions

Integration with LinkedIn's professional network data

What matters: 73% of recruiters save 1+ hour per role + 20x sourcing efficiency + 20% revenue increase. Time saved on admin = more time building relationships with candidates.

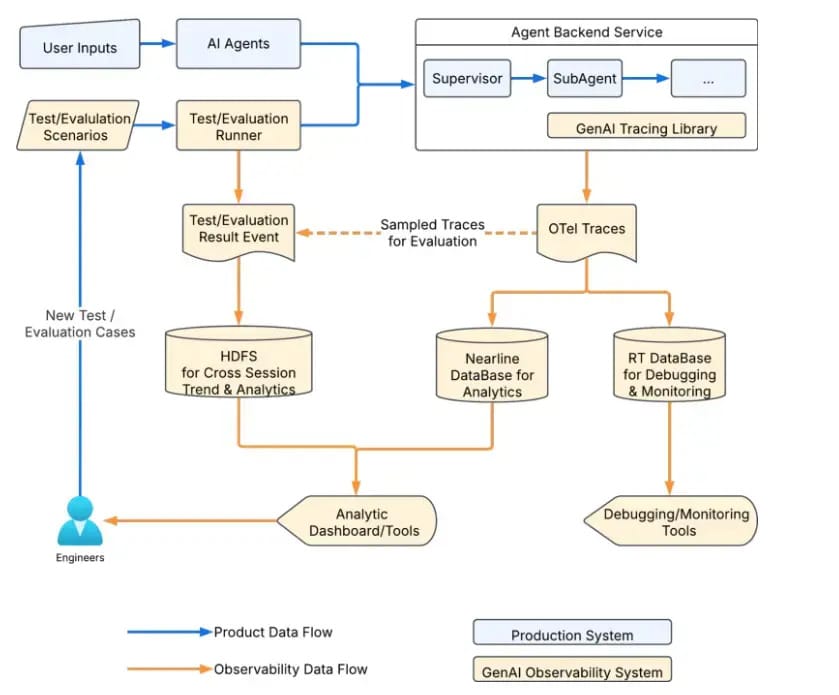

LinkedIn: Gen AI Tech Stack for Agents

What they built: The infrastructure layer supporting all their AI agents and applications.

The architecture:

Foundation models layer (mix of open-source and proprietary)

Agent framework for building, testing, and deploying

Orchestration layer for workflow management

Real-time data infrastructure (LinkedIn's professional graph)

Safety and governance systems

What matters: Modularity for rapid experimentation, horizontal scalability, and built-in observability. The stack is designed for iteration speed without sacrificing reliability.

BlackRock: Portfolio Management Agents

What they built: AI agents that analyze market data and provide portfolio recommendations.

How they did it:

Agents process market data, financial reports, and economic indicators

Integration with proprietary data and models

Augments human decision-making with AI insights

What matters: Agents outperform most humans in specific portfolio cases. Faster analysis, more consistent strategy application, better risk assessment.

Vercel: Developer Tool Agents

What they built: AI agents integrated into their development platform for code generation, debugging, and deployment.

How they did it:

Natural language interface for dev tasks

Context-aware based on project structure

Designed for agent-human collaboration

What matters: Their learnings: start narrow; invest in evaluation; design for collaboration, not replacement; iterate on real feedback. Build for developers, not demos.

Thanks for reading. — Rakesh’s Newsletter