Hi everyone 👋

Welcome back to this week's AI Agent updates. Wall Street just went all-in on AI agents. Goldman Sachs partnered with Anthropic to automate its back office, while coding tools reached new performance milestones, prompting developers to rethink their workflows.

Meanwhile, the business model wars continue. OpenAI doubles down on ads, Harvard drops a truth bomb about AI workloads, and a new wave of infrastructure is making agents faster, smarter, and more practical than ever.

Goldman Sachs Partners with Anthropic for In-House AI Agents

What's Happening: Goldman Sachs is deploying Claude AI agents to automate high-volume back-office drudgery: millions of transaction reconciliations, KYC/AML compliance checks, and regulatory document reviews. Anthropic engineers are embedded onsite for a 6-month deep collaboration, co-building agents on Claude's latest models designed for step-by-step reasoning on complex financial data.

Report Includes:

AI handles millions of transaction reconciliations and client onboarding processes

Anthropic engineers working inside Goldman for 6 months to co-build custom agents

Built on Claude's reasoning capabilities for multi-step compliance workflows

Why It Matters: When a Wall Street giant commits engineers and resources to AI agents for compliance and operations, it's a clear signal that agentic AI has crossed from experimental to mission-critical. This isn't about flashy demos; it's about automating the tedious, error-prone work that costs banks millions in human hours and regulatory risk.

Google Starts Rolling Out WebMCP for Early Preview

What's Happening: Google has started rolling out WebMCP (Web Model Context Protocol) for early preview, enabling AI agents to interact directly with web applications through a standardized protocol. This allows agents to navigate, read, and perform actions on websites just like humans do, without custom integrations or fragile screen scraping.

Report Includes:

Standardized protocol for AI agents to interact with any web application

Enables automated workflows across websites without breaking

Early preview targeting enterprise customers first

Why It Matters: WebMCP transforms the web from "human-readable" to "agent-readable." When agents can interact with any website through a standard protocol instead of fragile scraping, it unlocks enterprise automation at scale—agents booking travel, managing SaaS tools, or executing multi-site workflows that actually work in production.

MiniMax Introduces Their New Frontier Model M2.5

What's Happening: MiniMax dropped M2.5, their new frontier model built specifically for complex agent workflows and multimodal reasoning. The model features enhanced planning capabilities, native tool use, and extended context windows designed to handle enterprise-grade autonomous tasks with improved reliability.

Report Includes:

Purpose-built for complex agent workflows and autonomous planning

Enhanced multimodal reasoning with native tool integration

Extended context windows for long-running enterprise tasks

Why It Matters: MiniMax is joining the frontier model race with an agent-first strategy. While others optimize for chatbots, M2.5 is purpose-built for production workflows—long planning horizons, reliable tool execution, and context retention. "Agent-native" models are officially a distinct category.

Google Starts Rolling Out UCP for US Users

What's Happening: Google has begun rolling out UCP (Universal Commerce Protocol) for US users to test its integration with the Gemini shopping experience. The rollout offers conversational AI ads with instant "Direct Offers" that reportedly boost conversions by 20-30%, along with AI agents that compare prices and make purchase decisions autonomously.

Report Includes:

Conversational ads with "Direct Offers" increase conversions by 20-30%

Agentic shopping, where AI agents compare prices and decide purchases

AI-powered creator pairing that matches brands with influencers automatically

Why It Matters: Google is building the infrastructure for AI agents to become shoppers, not just assistants. This shifts e-commerce from "help me find" to "buy this for me," and advertisers need to adapt to a world where their customer might be an algorithm optimizing for price and relevance, not emotions.

ByteDance Seedance 2.0 Shocks the World with Its Realism

What's Happening: ByteDance dropped Seedance 2.0, their next-gen AI video model that's exploding in China for hyper-real cinematic clips. It nails complex motions like pair figure skating with realistic physics, avoiding the glitches typical of earlier AI video, and offers director-level control by mixing up to 9 images, 3 videos, and 3 audio clips with text prompts.

Report Includes:

Handles complex multi-character interactions with realistic physics

Multimodal input: 9 images, 3 videos, 3 audio clips + text for precise control

Matches camera moves, storyboards, and seamless editing capabilities

Why It Matters: This isn't just another AI video tool; it's production-grade content creation hitting state-of-the-art usability. When AI can handle the physics of two people ice skating together without artifacts, we're entering a new phase where AI-generated video becomes indistinguishable from filmed content.

Moonshot AI Introduces Kimi Agent Swarm

What's Happening: Kimi's Agent Swarm is a game-changer in Moonshot AI's K2.5 model, letting it auto-spin up agent teams for massive tasks. From one prompt, it self-directs up to 100 sub-agents with no manual setup, handling 1,500+ parallel tool calls for research, coding, or data crunching.

Report Includes:

Automatically deploys up to 100 sub-agents from a single prompt

Executes 1,500+ parallel tool calls without manual orchestration

Trained via PARL to avoid "serial collapse" and force true parallelism

Why It Matters: Most AI agents work sequentially, one task at a time. Agent Swarm breaks that bottleneck by spawning entire teams that work in parallel, turning hours-long research projects into minutes. This is the architecture shift that makes AI agents practical for enterprise-scale workflows.
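The gap between sequential and parallel execution described above can be illustrated with a minimal sketch. It assumes nothing about how Agent Swarm or PARL actually work: `sub_agent` is a hypothetical stand-in that only sleeps to simulate a slow tool call, and the fan-out uses Python's standard `asyncio.gather`.

```python
import asyncio


async def sub_agent(task_id: int) -> str:
    """Hypothetical stand-in for one sub-agent; the sleep simulates a slow tool call."""
    await asyncio.sleep(0.01)
    return f"result-{task_id}"


async def run_serial(n: int) -> list[str]:
    # One task at a time: total wall-clock time grows linearly with n.
    return [await sub_agent(i) for i in range(n)]


async def run_parallel(n: int) -> list[str]:
    # Fan out all tasks at once; gather returns results in submission order.
    return await asyncio.gather(*(sub_agent(i) for i in range(n)))


# 100 mirrors the "up to 100 sub-agents" figure quoted in the item.
results = asyncio.run(run_parallel(100))
print(len(results))
```

Both functions return the same results; only the waiting overlaps in the parallel version, which is why the fan-out pattern pays off when each call spends most of its time waiting on tools or network.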

OpenAI Releases GPT-5.3-Codex-Spark for Fast Coding

What's Happening: GPT-5.3-Codex-Spark is OpenAI's new ultra-fast AI for real-time coding, launched in research preview. It hits over 1,000 tokens/second, 15x faster than GPT-5.3-Codex, using the Cerebras Wafer Scale Engine 3 for sub-second latency, with a 128k context window for quick refactors and UI tweaks.

Report Includes:

Blazing speed at 1000+ tokens/second, 15x faster than standard Codex

128k context window optimized for interactive coding workflows

Runs on Cerebras hardware for sub-second latency on complex tasks

Why It Matters: Speed changes everything in coding AI. When you can see code generated in real-time, AI shifts from "batch processor" to "pair programmer," enabling interruptions, course corrections, and live collaboration that wasn't possible with slower models.
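To put the throughput figures in perspective, here is a rough back-of-the-envelope sketch. The baseline rate is inferred from the "15x faster" claim rather than taken from a published number, and the 2,000-token patch size is a hypothetical example.

```python
def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to stream `tokens` at a steady output rate (ignores startup latency)."""
    return tokens / tokens_per_second


SPARK_TPS = 1000.0              # "over 1000 tokens/second", as quoted in the item
BASELINE_TPS = SPARK_TPS / 15   # assumption: implied by "15x faster", not a published figure

patch_tokens = 2000             # hypothetical size of a mid-sized refactor

print(generation_seconds(patch_tokens, SPARK_TPS))               # 2.0
print(round(generation_seconds(patch_tokens, BASELINE_TPS), 1))  # 30.0
```

Under these assumptions, the same patch goes from roughly half a minute to a couple of seconds, which is the difference between waiting for a result and watching it appear.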

OpenAI Finally Starts Rolling Out Ads in ChatGPT

What's Happening: OpenAI has kicked off ads in ChatGPT for US users on the free and Go tiers. Sponsored ads appear at the bottom of responses (higher paid tiers stay clean), with no chat data sold, just aggregated topics. The move subsidizes the 90% of users who don't pay amid massive compute costs, while skipping health and politics topics and leaving answers untouched.

Report Includes:

Ads appear at the bottom of responses for the free and Go tiers only

No individual chat data sold; uses aggregated topic data only

Subsidizes compute costs for the 90% of users who don't pay

Why It Matters: This is the moment the AI business model war goes public. OpenAI is betting ads can fund free access at scale, while competitors like Anthropic position themselves as "ad-free and unbiased." Expect this to become a major differentiation point as AI becomes essential infrastructure.

Coinbase Releases Agentic Wallet for Agentic Apps

What's Happening: Coinbase's Agentic Wallet lets AI agents independently hold USDC, trade tokens, and transact on-chain without human oversight. Agents get their own self-custody wallets on Base (Coinbase's L2), authenticating via email OTP with no private keys exposed to the AI, plus gasless trading and plug-and-play "skills."

Report Includes:

AI agents get standalone wallets on Base with email OTP authentication

Gasless token swaps and plug-and-play skills for trading and yield

Enables autonomous on-chain transactions without human intervention

Why It Matters: When AI agents can hold and move money autonomously, they become economic actors, not just tools. This unlocks use cases like autonomous trading bots, agent-to-agent payments, and machine-to-machine transactions, a foundational piece of the agent economy.

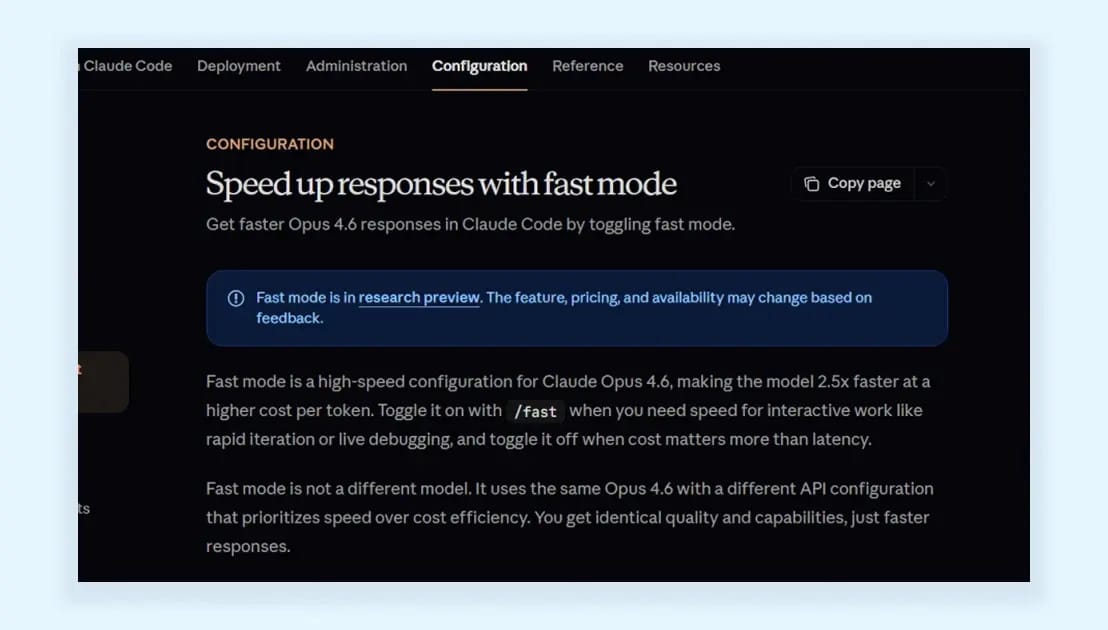

Anthropic Introduces Fast Mode for Claude Code

What's Happening: Claude 4.6 Fast Mode cranks up output speed on the Opus 4.6 model by 2.5x without touching smarts or quality. It slashes wait times on long generations like code reviews or refactors, turning AI into a real-time pair programmer, and suits latency-sensitive agents churning out detailed plans.

Report Includes:

2.5x faster output speed without quality degradation

Optimized for long code reviews, refactors, and agent workflows

Shaves seconds per response for more responsive automation

Why It Matters: In agentic workflows, latency kills productivity. Fast Mode makes Claude feel instant, even on complex tasks, which is the difference between agents that feel sluggish and agents that feel like teammates. Speed is a feature.

Zhipu AI Releases Its New Frontier Model GLM-5

What's Happening: Zhipu AI dropped GLM-5, a massive Chinese LLM shaking up the global AI scene. It features 745B total params (44B active via MoE with 256 experts), roughly double GLM-4.5's 355B, and was trained on 28.5T tokens. It is built for "agentic engineering," with autonomous planning, tool use, and multi-step workflows that rival Claude Opus 4.5.

Report Includes:

745B parameters with 44B active through a mixture-of-experts architecture

Trained on 28.5 trillion tokens for frontier-level reasoning

Built specifically for agentic workflows with autonomous planning capabilities

Why It Matters: China is no longer playing catch-up in AI; they're setting the pace. GLM-5's agentic focus and competitive benchmarks against Western models signal that the global AI race is truly global, and specialized agent models are becoming the new frontier.
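A quick sanity check on the parameter figures quoted above, as a small sketch. The numbers are copied from the item as reported; nothing here is independently verified.

```python
TOTAL_PARAMS_B = 745      # total parameters in billions, as quoted
ACTIVE_PARAMS_B = 44      # parameters active per token via MoE, as quoted
PREVIOUS_TOTAL_B = 355    # previous-generation total, as quoted

active_share = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
growth = TOTAL_PARAMS_B / PREVIOUS_TOTAL_B

print(f"{active_share:.1%}")  # 5.9%
print(f"{growth:.1f}x")       # 2.1x
```

So only about 6% of the weights do work on any given token, which is how a mixture-of-experts model can grow total capacity without a matching rise in per-token compute.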

Cloudflare Introduces Markdown for Agents

What's Happening: Cloudflare's Markdown for Agents converts HTML pages to clean Markdown on-the-fly for AI requests, slashing token waste and boosting agent efficiency. HTML bloats AI inputs with nav bars and junk. Markdown strips it to essentials, cutting tokens by 50-80%, with agents just adding an Accept: text/markdown header.

Report Includes:

Cuts token usage by 50-80% by stripping HTML bloat

Real-time edge conversion with a simple header change

Conversion happens at the network level, so no client-side processing is required

Why It Matters: Token efficiency isn't glamorous, but it comes down to economics. When agents scrape thousands of pages, cutting token costs in half makes previously impractical workflows suddenly viable. Infrastructure improvements like this are what turn agent prototypes into production systems.
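For readers who want to see what the header change looks like in practice, here is a minimal sketch using only Python's standard library. The URL is a placeholder, and whether a given site actually returns Markdown depends on the feature being enabled for it, which is an assumption here. The savings helper simply applies the 50-80% range quoted above.

```python
from urllib.request import Request


def markdown_request(url: str) -> Request:
    """Build a GET request that asks the server for Markdown instead of HTML."""
    # The Accept header is the only client-side change described in the item.
    return Request(url, headers={"Accept": "text/markdown"})


def tokens_saved(html_tokens: int, reduction: float) -> int:
    """Tokens avoided if Markdown trims the input by `reduction` (0.5 to 0.8 as quoted)."""
    return round(html_tokens * reduction)


req = markdown_request("https://example.com/docs")  # placeholder URL
print(req.get_header("Accept"))     # text/markdown
print(tokens_saved(10_000, 0.5))    # 5000
```

Passing `req` to `urllib.request.urlopen` would send the request; the sketch stops short of that so it runs without network access.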

Exa AI Search Releases Exa Instant for AI Workflows

What's Happening: Exa Instant is Exa's latest ultra-fast search API upgrade, clocking in at under 350ms p50 latency for real-time AI needs. It's 30% quicker than rivals, making it a fit for voice AI, news feeds, or live code gen, with neural semantic search to cut noise and pull exact knowledge.

Report Includes:

Under 350ms latency, 30% faster than competing APIs

Neural semantic search filters out noise and listicles

Optimized for real-time AI applications like voice assistants

Why It Matters: Search latency is the bottleneck in real-time AI agents. When voice AI or live coding tools need information NOW, 350ms vs 700ms is the difference between feeling natural and feeling broken. Exa is building the infrastructure layer that makes real-time agents possible.

Harvard Business Review Study Finds AI Increases Workloads

What's Happening: Harvard Business Review's recent study busts the myth that AI lightens workloads; it actually ramps them up. Workers grabbed extra roles (designers coding, PMs engineering) since AI filled knowledge gaps, expanding scope without cuts elsewhere, while AI sped up tasks but created a cycle of higher expectations.

Report Includes:

Workers took on additional roles outside their expertise using AI

Speed improvements led to higher expectations, not reduced workloads

Created dependency cycles where more AI use leads to more work

Why It Matters: This is the uncomfortable truth about AI productivity gains: they don't reduce work; they change expectations. Instead of doing the same job faster, people do MORE jobs. Companies need to recognize this dynamic and consciously decide whether AI expands output or creates breathing room.

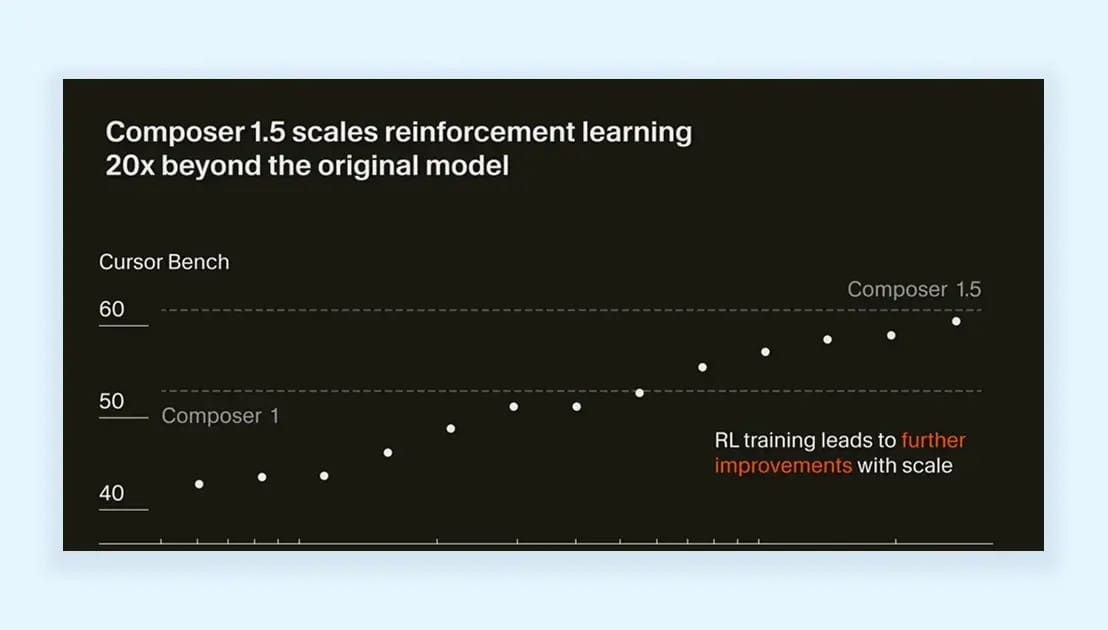

Cursor AI Releases Its New Coding Model Composer 1.5

What's Happening: Composer 1.5 is Cursor's upgraded agentic AI coding model, excelling at real-world dev tasks by balancing speed and smarts. It features 20x RL scaling, trained with massive reinforcement learning that outspends even pretraining compute, plus adaptive "thinking tokens" that reason over your codebase, going quick for easy fixes and deep for complex ones.

Report Includes:

20x reinforcement learning scaling for massive coding improvements

Adaptive thinking tokens that adjust reasoning depth to task complexity

Balances speed for simple tasks with deep analysis for complex problems

Why It Matters: This is the first coding model where RL compute exceeded pretraining compute, a signal that post-training optimization is becoming more important than raw scale. Composer 1.5 shows that smarter training, not just bigger models, is the path to better coding agents.

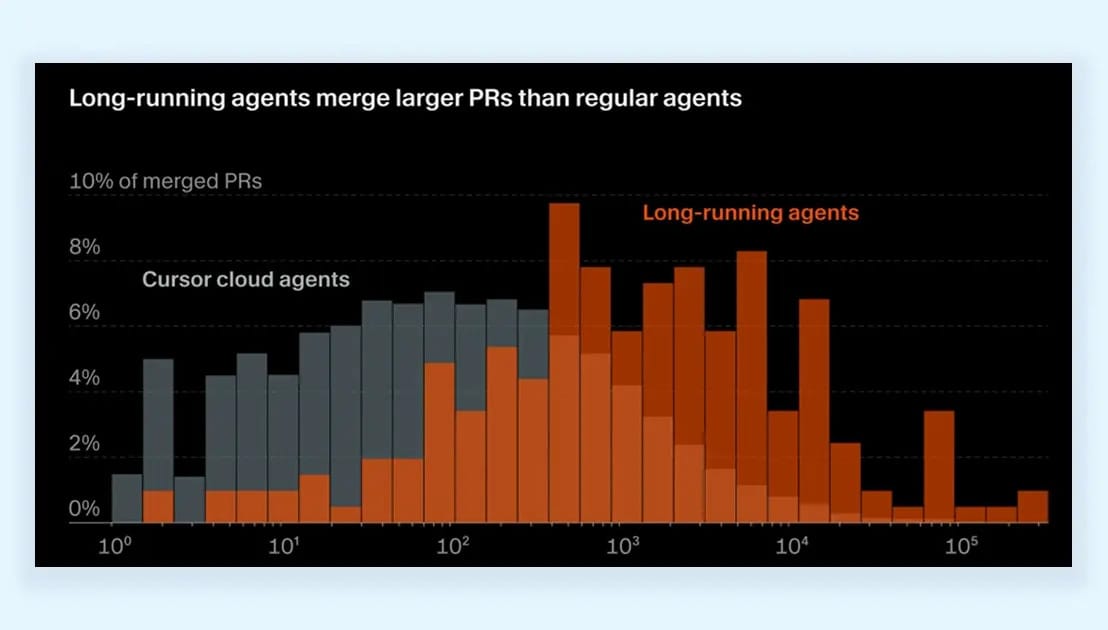

Cursor AI's Long-Running Agents Are Now Available for Beta

What's Happening: Cursor's long-running agents research preview has expanded to all Ultra, Teams, and Enterprise users. These agents handle massive projects autonomously, running 24-50+ hours on tasks like building chat platforms (36h) or RBAC refactors (25h), with a custom harness that uses planning approval and multi-agent checks to fix model failures.

Report Includes:

Agents run 24-50+ hours autonomously on complex projects

Custom architecture prevents plan drift and task abandonment

Users report compressing quarter-long projects into days

Why It Matters: This is what "agents doing real work" actually looks like: not chatbots, but systems that run for days without human intervention. The fact that users are shipping 151k-line PRs from agent work proves we've crossed from "impressive demo" to "production workflow."

Thanks for reading.

See you next week with more AI agent updates.

— Rakesh’s Newsletter