Hi everyone 👋

Welcome back to AI Agent Weekly. This was the most consequential week in AI agent history. Anthropic accidentally leaked Claude Code's entire source code to the public. Google dropped the first open model that runs AI agents fully offline. Apple ended ChatGPT's exclusive Siri deal. And Shopify, Microsoft, Salesforce, and Block all shipped agent infrastructure that rewrites how enterprises actually work. Let's get into it.

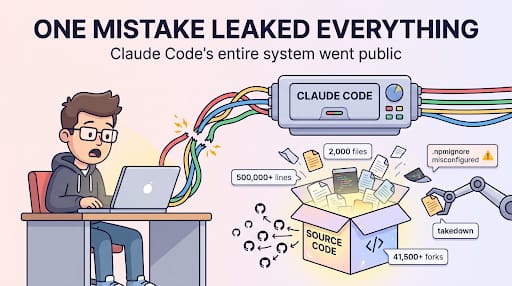

ANTHROPIC ACCIDENTALLY LEAKED CLAUDE CODE'S ENTIRE SOURCE CODE

What's Happening: Anthropic accidentally published the full source code of Claude Code, roughly 500,000 lines across 2,000 files, to npm due to a misconfigured .npmignore file. The code was forked over 41,500 times before Anthropic could contain the leak.

Report Includes:

500K Lines Exposed: The leak included Claude Code's complete codebase, not a subset and not a sanitized version. Every architectural decision Anthropic made for its flagship coding agent is now public.

41,500+ Forks in Hours: The speed of replication made containment effectively impossible. Competitors, researchers, and unknown actors all have permanent copies.

A Misconfigured File: The root cause was a single .npmignore error, not a sophisticated attack and not an insider threat. One misconfigured exclusion rule handed every competitor a free engineering blueprint.

Why It Matters: This is the most significant accidental code leak in the AI industry's history. Claude Code is Anthropic's most strategic product - the thing that converts API access into daily developer habits. Every competitor now has a complete reference implementation of how Anthropic structures agentic coding workflows, tool routing, permission systems, and context management. The strategic damage extends well beyond the immediate security concerns.
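To make the failure mode concrete, here is a minimal Python sketch of how one missing exclusion rule changes what ends up in a published package. The file names and rules are hypothetical and are not taken from the leaked package, and the matching uses simple shell-style globs, which is a simplification of npm's real ignore semantics.

```python
from fnmatch import fnmatchcase


def files_to_publish(files, ignore_patterns):
    """Return the files that no ignore pattern matches (simplified glob matching)."""
    return [
        path for path in files
        if not any(fnmatchcase(path, pattern) for pattern in ignore_patterns)
    ]


# Hypothetical package contents, for illustration only.
package_files = [
    "dist/cli.js",
    "dist/cli.test.js",
    "src/agent.ts",
    "src/tools.ts",
    "README.md",
]

intended_rules = ["src/*", "*.test.js"]   # keep sources and tests out of the package
misconfigured_rules = ["*.test.js"]       # the "src/*" rule is missing

print(files_to_publish(package_files, intended_rules))
print(files_to_publish(package_files, misconfigured_rules))
```

With the intended rules only the built file and the README ship; with the misconfigured rules the src/ files ship as well. In a real project, running npm pack --dry-run before publishing lists the files that would be included.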

GOOGLE DROPS GEMMA 4 - THE FIRST OPEN MODEL WITH NATIVE AGENTIC CAPABILITIES

What's Happening: Google released Gemma 4, the first open-weight model designed from the ground up with native agentic capabilities - including function calling, multi-step reasoning, and tool use that runs fully offline on mobile devices.

Report Includes:

Offline Agentic on Phone: Gemma 4 runs entirely on-device with no cloud dependency, bringing real agent capabilities, not just chat, to mobile hardware for the first time in an open model.

Beats Models 20x Its Size: Benchmark results show Gemma 4 outperforming models with 20x more parameters on agentic task suites, suggesting architecture matters more than scale for tool-use workflows.

Fully Open Weights: Google released the model under open weights, meaning anyone can inspect, modify, and deploy it without API costs or vendor lock-in.

Why It Matters: The open-source AI ecosystem has been playing catch-up on agentic capabilities - most open models can chat but can't reliably execute multi-step tool workflows. Gemma 4 closes that gap and does it on-device, which means developers can build agents that run on user hardware without sending data to the cloud. This is a Category 5 event for the open-source agent ecosystem.

GOOGLE REDEFINES AI SAFETY EXPECTATIONS FOR THE AGENTIC ERA

What's Happening: Google published a sweeping update to its AI safety framework, arguing that the industry's safety expectations need to fundamentally shift now that AI models have become 300x more efficient than two years ago and have moved from prediction to action.

Report Includes:

300x Efficiency Gain: Google quantified the efficiency improvement frontier models have made over the past two years, arguing that safety frameworks built for slower, less capable models are now misaligned with reality.

From Prediction to Action: The core argument is that safety evaluation must change when models stop generating text and start executing actions: calling functions, modifying files, and triggering workflows in the real world.

Evolving Expectations: The post doesn't relax safety standards - it reframes them, proposing new evaluation criteria specific to agentic behavior rather than output quality.

Why It Matters: Every major AI lab is shipping agents. Almost nobody has published a coherent safety framework for what happens when those agents operate autonomously. Google's framing, that the shift from "models that predict" to "models that act" requires entirely new safety paradigms, is the most explicit statement on this problem from a frontier lab. Expect this to shape policy conversations for the rest of 2026.

SHOPIFY LAUNCHES TINKER, A ZERO-PROMPT AI AGENT THAT BUILDS YOUR BRAND IDENTITY

What's Happening: Shopify released Tinker, a free AI agent that generates complete brand visual identities (logos, color palettes, typography, marketing assets) by chaining together 100+ tools from OpenAI, Google, and Anthropic without requiring a single prompt from the user.

Report Includes:

Zero-Prompt Design: Unlike typical AI design tools that require detailed prompting, Tinker autonomously decides what to generate based on minimal business inputs - name, industry, description.

100+ Tool Chain: Tinker orchestrates across image generation, layout, copywriting, and brand guideline tools from three different AI providers, outputting a cohesive brand package rather than isolated assets.

Free to Use: Shopify is offering Tinker at no cost, making it an on-ramp for merchants who haven't hired designers or used AI tools before.

Why It Matters: Tinker is a glimpse of what agentic workflows look like when they're productized for non-technical users. The multi-provider tool chaining is the technical detail that matters; it's not one model doing everything, it's an agent that knows when to call which tool from which provider. This is the UX model that enterprise agent products will be judged against: can a user get a complete outcome without knowing what tools are being used?

OPENAI ACQUIRES TBPN TO REWRITE THE ENTERPRISE COMMS PLAYBOOK

What’s Happening: OpenAI has made its first-ever media acquisition, buying TBPN (Technology Business Programming Network), a popular live talk show and "SportsCenter of tech." The move signals OpenAI’s intent to move beyond traditional PR and speak directly to the tech and political elite as it prepares for a massive IPO.

Report Includes:

The "Political Dark Arts" Integration: In a move that has raised industry eyebrows, TBPN will report directly to Chris Lehane, OpenAI’s chief political operative. This places a popular media outlet under the same leadership responsible for high-stakes lobbying and "dark arts" political strategy.

Beyond the Standard Playbook: OpenAI leadership noted that the "standard communications playbook" no longer applies to AGI labs. By owning a show with a cult following among Silicon Valley power players, OpenAI is securing a direct, unfiltered channel to its most important stakeholders: founders, investors, and policymakers.

A Self-Sustaining Media Empire: TBPN isn't just a marketing expense; it’s a business on track to generate $30M this year. This acquisition gives OpenAI a revenue-generating cultural engine that operates inside the very "safe spaces" where industry decisions are made.

Why It Matters: This acquisition represents a "Category 5" shift in how frontier labs manage public and political perception. By vertically integrating media, OpenAI isn't just building the technology; it is building the platform that explains, defends, and distributes the narrative of that technology. For competitors, the bar for "brand influence" just shifted from landing press hits to owning the network.

MICROSOFT QUIETLY TURNS EXCEL, TEAMS, AND SHAREPOINT INTO FULLY AGENTIC TOOLS

What's Happening: Microsoft updated its Copilot product line with persistent agent memory across Excel, Teams, and SharePoint - meaning Copilot now maintains context and continuity across Office applications rather than treating each interaction as isolated.

Report Includes:

Persistent Agent Memory: Copilot now remembers previous tasks, document contexts, and conversation history across sessions - a foundational requirement for agentic workflows that span days or weeks.

Cross-App Context: A task started in Teams can be continued in Excel with full awareness of the previous conversation, related documents, and pending actions.

Inside Every Office App: The integration isn't a new product; it's embedded directly into the tools hundreds of millions of knowledge workers already use daily, which is the distribution advantage no AI startup can replicate.

Why It Matters: Microsoft's enterprise moat has always been that its tools are where work already happens. Adding persistent agent memory to that environment means agentic workflows don't require new interfaces, new tools, or new habits. You just keep using Excel, except now it remembers what you were doing last Tuesday and can pick up where you left off. This is how agents go mainstream: not as standalone products, but as invisible upgrades to existing workflows.

CLOUDFLARE ADDS AN LLM-POWERED AI AGENT TO CATCH MALICIOUS SCRIPTS

What's Happening: Cloudflare integrated an LLM-powered security agent into its client-side security platform that analyzes JavaScript in real time to detect malicious scripts, and made it free for all users. The agent scans an estimated 3.5 billion scripts per day.

Report Includes:

LLM-as-Security Analyst: Rather than relying on static signature matching, the agent uses an LLM to understand script behavior and intent, catching obfuscated or novel malicious patterns that rule-based systems miss.

3.5 Billion Scripts Daily: Cloudflare's scale means this agent processes more scripts in a day than most security tools process in a year, creating a feedback loop that improves detection over time.

Free for Everyone: Cloudflare made the feature available at no cost, expanding the addressable market beyond enterprise security teams to any developer running a website.

Why It Matters: This is one of the most concrete examples of LLMs being used for real-time decision-making at massive scale: not generating content, not answering questions, but making binary security judgments billions of times a day. If it holds up, it validates the thesis that LLMs can replace heuristic-based systems in production environments where latency and accuracy both matter. It also sets a new expectation: if Cloudflare gives this away for free, every security vendor needs an LLM-powered detection layer.

ANTHROPIC REVEALS THE REAL REASON CLAUDE CODE BEATS EVERY OTHER CODING AGENT

What's Happening: Anthropic published the engineering details behind Claude Code's architecture, revealing that its advantage isn't the underlying model; it's a 3-agent GAN-style loop consisting of a Planner, a Generator, and a skeptical Evaluator that continuously challenges the other two agents' outputs.

Report Includes:

Three-Agent Adversarial Loop: Claude Code doesn't use a single model pass. It runs Planner (decomposes the task), Generator (writes code), and Evaluator (critiques and rejects insufficient solutions) in a loop until quality thresholds are met.

Evaluator Is Deliberately Skeptical: The Evaluator agent is tuned to be harsher than a typical code reviewer. It rejects code that works but isn't robust, forcing the Generator to produce higher-quality outputs.

Infrastructure, Not Model Magic: Anthropic explicitly frames the advantage as architectural: the harness design makes Claude Code reliable over long sessions regardless of which Claude model is powering it.

Why It Matters: The entire coding agent market has been obsessed with model quality. Anthropic is saying the model matters less than the orchestration loop around it. If a 3-agent GAN pattern with a skeptical evaluator is what makes Claude Code reliable, then competitors can replicate the pattern with different models. This doesn't just explain Claude Code; it potentially levels the playing field for anyone willing to build equivalent harness architectures.
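For readers who want to picture the Planner / Generator / Evaluator pattern, here is a minimal Python sketch of such a loop. It is not Anthropic's implementation: the function names, the Review type, the max_rounds cap, and the toy agents are all assumptions made for illustration, and in a real harness each role would be a model call rather than a hard-coded function.

```python
from dataclasses import dataclass


@dataclass
class Review:
    accepted: bool
    feedback: str


def run_loop(task, planner, generator, evaluator, max_rounds=5):
    """Plan once, then generate and review until the evaluator accepts a draft."""
    steps = planner(task)
    feedback = ""
    for round_number in range(1, max_rounds + 1):
        draft = generator(steps, feedback)
        review = evaluator(task, draft)
        if review.accepted:
            return draft, round_number
        feedback = review.feedback  # the rejection reason drives the next attempt
    raise RuntimeError("no draft passed review within max_rounds")


# Toy stand-ins for the three roles (hypothetical, hard-coded behavior).
def toy_planner(task):
    return [f"implement: {task}", "handle invalid input"]


def toy_generator(steps, feedback):
    if "invalid input" in feedback:
        return (
            "def parse(x):\n"
            "    try:\n"
            "        return int(x)\n"
            "    except ValueError:\n"
            "        return None"
        )
    return "def parse(x): return int(x)"


def toy_evaluator(task, draft):
    # Skeptical by design: code that works on the happy path is still rejected.
    if "except" not in draft:
        return Review(False, "works on the happy path but ignores invalid input")
    return Review(True, "")


final_draft, rounds = run_loop("parse an integer", toy_planner, toy_generator, toy_evaluator)
print(rounds)
print(final_draft)
```

In this toy run the first draft is rejected for lacking error handling and the second is accepted, so the loop finishes in two rounds. The cap on rounds is a design choice of the sketch, added so that an evaluator that is never satisfied cannot loop forever.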

MISTRAL LAUNCHES FORGE: BUILD FULLY CUSTOM AI AGENT MODELS FROM SCRATCH

What's Happening: Mistral released Forge, a platform that lets organizations build fully custom AI agent models trained on their own proprietary data — without fine-tuning existing models or relying on RAG pipelines. The output is a bespoke model, not a wrapper.

Report Includes:

No Fine-Tuning, No RAG: Forge doesn't adapt an existing model or retrieve context at runtime. It trains a new model from scratch on your data, producing a model that has internalized your domain rather than looking it up.

Your Model, Your Rules: The resulting model is fully owned by the organization — no API dependency on Mistral, no usage-based pricing, no data leaving your environment during inference.

Built for Agent Workflows: The platform is specifically optimized for training models that execute multi-step agent tasks, not just generate text — a meaningful distinction from general-purpose model training platforms.

Why It Matters: The enterprise AI market has been stuck between two unsatisfying options: fine-tune a frontier model and stay locked to its API, or use RAG and accept latency and fragility. Forge proposes a third path — train a smaller, domain-specific model that genuinely knows your business. If the quality holds up, this undermines the fine-tuning-as-a-service market and gives enterprises a path to AI sovereignty that doesn't require massive internal ML teams.

IBM RELEASES GRANITE 4.0 VISION: A 3B MODEL THAT TURNS CHARTS AND TABLES INTO AGENT FOOD

What's Happening: IBM released Granite 4.0 Vision, a compact 3-billion-parameter model specifically designed to convert charts, tables, screenshots, and documents into structured data that other AI agents can reliably consume and act on.

Report Includes:

3B Parameters, Task-Specific: At 3 billion parameters, Granite 4.0 Vision is small enough to run cheaply at scale but purpose-built for document understanding, not general conversation.

Structured Output for Agents: The model's primary output format is structured data (JSON, tables, key-value pairs) rather than natural language summaries, and it is explicitly designed as a preprocessing layer for downstream agents.

Charts and Tables as First-Class Inputs: Unlike vision models that handle images generically, Granite 4.0 Vision has specialized architectures for understanding axis labels, data points, table relationships, and spatial layout.

Why It Matters: Enterprise agents keep failing on document-heavy workflows because vision models return natural language descriptions of charts instead of structured data the agent can actually compute with. Granite 4.0 Vision is built as a bridge — it translates visual information into the machine-readable formats that agent reasoning loops need. At 3B parameters, it's cheap enough to run as a persistent preprocessing step in any agent pipeline. This is infrastructure, not a demo.
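A small Python sketch shows why structured output matters to a downstream agent. The JSON below is a made-up example of what a chart-extraction step might return; the field names (title, points, x, y) and the revenue figures are assumptions for illustration, not Granite 4.0 Vision's actual output schema.

```python
import json

# Hypothetical structured output for a bar chart of quarterly revenue.
chart_json = """
{
  "title": "Quarterly revenue",
  "x_axis": "quarter",
  "y_axis": "revenue_usd_millions",
  "points": [
    {"x": "Q1", "y": 10.0},
    {"x": "Q2", "y": 12.5},
    {"x": "Q3", "y": 15.0}
  ]
}
"""


def growth_between(chart, start, end):
    """Relative change in the y value between two x labels."""
    values = {point["x"]: point["y"] for point in chart["points"]}
    return (values[end] - values[start]) / values[start]


chart = json.loads(chart_json)
growth = growth_between(chart, "Q1", "Q3")
print(f"{growth:.0%}")  # prints 50%
```

With a prose description such as "revenue rose steadily over three quarters", the agent would have nothing to compute with; with the structured form, the growth figure is a one-line calculation.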

GOOGLE TURNS GMAIL INTO AN AI AGENT THAT READS, PRIORITIZES, AND ORGANIZES YOUR INBOX

What's Happening: Google updated Gmail with a Gemini 3-powered agent that actively reads your emails, builds to-do lists, identifies your VIP contacts, and reorders your inbox based on what it determines you should see first, not just chronologically.

Report Includes:

Proactive To-Do Extraction: The agent scans incoming emails and automatically surfaces action items, deadlines, and commitments as a to-do list — without the user setting up any rules.

VIP Identification: Over time, the agent learns which contacts consistently require your attention and prioritizes their emails in the inbox view.

Powered by Gemini 3: The agent runs on Google's latest Gemini 3 model, which provides the multi-step reasoning required to understand email context rather than just match keywords.

Why It Matters: Gmail is the inbox for roughly 1.8 billion users. Turning it into an agent that makes decisions about what you see and when doesn't just change email - it normalizes the idea that AI should silently reorder your information environment based on inferred priorities. This is the consumer-facing vanguard of the same thesis Microsoft is pursuing with Copilot in Office: AI that doesn't wait for instructions but acts on your behalf within tools you already use.

CLOUDFLARE DYNAMIC WORKERS LET LLM-WRITTEN CODE EXECUTE 100X FASTER

What's Happening: Cloudflare expanded on its Dynamic Workers infrastructure, explicitly positioning it as the execution layer for AI-generated code — V8 isolates that spin up 100x faster and use 10–100x less memory than traditional containers.

Report Includes:

Millisecond Startup: V8 isolates start in single-digit milliseconds compared to seconds for container-based execution — the difference between real-time agent workflows and batch processing.

10–100x Memory Efficiency: The isolation model means you can run far more concurrent code executions per server, making it economically viable to spin up and discard generated code rapidly.

Designed for Generated Code: Cloudflare explicitly architected Dynamic Workers around the assumption that code is being written by an LLM, not a human — optimizing for ephemeral, disposable execution rather than long-running services.

Why It Matters: The execution environment is the hidden bottleneck in agentic workflows. LLMs can generate code in seconds, but spinning up a container to run that code takes longer than the generation itself. Cloudflare is building the default runtime layer for the agent ecosystem — and if developers standardize on V8 isolates, it creates a powerful platform lock-in that's invisible to the end user but structurally essential to every agent framework built on top.

SALESFORCE MAKES SLACKBOT THE ORCHESTRATION LAYER FOR ALL YOUR OTHER AGENTS

What's Happening: Salesforce transformed Slackbot into an agent orchestration hub, a single MCP (Model Context Protocol) client that routes work across 6,000+ Slack integrations, controlling multiple AI agents from different vendors through one conversational interface.

Report Includes:

One Bot to Rule Them All: Instead of each AI agent living in its own Slack channel or integration, Slackbot becomes the universal dispatcher: you talk to it, and it delegates to whichever agent is appropriate.

6,000+ App Reach: Through existing Slack integrations, the orchestration layer can reach across an organization's entire tool stack: Jira, Salesforce, Google Workspace, GitHub, and thousands more.

MCP-Native: The implementation uses the Model Context Protocol, meaning any MCP-compatible agent can plug into the orchestration layer without custom integration work.

Why It Matters: The multi-agent problem isn't building agents - it's coordinating them. Every enterprise is going to end up with agents from different vendors (OpenAI, Anthropic, Microsoft, homegrown) that need to hand off work to each other. Salesforce is betting Slack, already the communications hub for most tech companies, will become the orchestration hub as well. If successful, Slack's strategic value will shift from "messaging app" to "agent operating system."
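The "universal dispatcher" idea can be sketched in a few lines of Python. This is a toy illustration, not Slack's or MCP's actual API: the Dispatcher class, the capability names, and the two stub agents are hypothetical, and the caller names the capability explicitly, whereas a real orchestrator would infer it from the conversation.

```python
from typing import Callable, Dict


class Dispatcher:
    """Routes a request to whichever registered agent handles that capability."""

    def __init__(self) -> None:
        self._agents: Dict[str, Callable[[str], str]] = {}

    def register(self, capability: str, agent: Callable[[str], str]) -> None:
        self._agents[capability] = agent

    def dispatch(self, capability: str, request: str) -> str:
        agent = self._agents.get(capability)
        if agent is None:
            return f"no agent registered for '{capability}'"
        return agent(request)


# Stub agents standing in for tools from different vendors (hypothetical).
def ticket_agent(request: str) -> str:
    return f"[tickets] filed: {request}"


def code_agent(request: str) -> str:
    return f"[code] opened pull request for: {request}"


bot = Dispatcher()
bot.register("tickets", ticket_agent)
bot.register("code", code_agent)

print(bot.dispatch("tickets", "login page times out"))
print(bot.dispatch("calendar", "book a room"))
```

The user only ever talks to bot; which agent does the work is a lookup behind the single interface, and adding a new agent is one register call rather than a new channel or integration.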

BLOCK REPLACED ITS ORG CHART WITH AN AI WORLD MODEL

What's Happening: Block (Jack Dorsey's company) published details of an internal AI system that replaced its traditional org chart with a continuously updated "world model", an AI that tracks what's being built, what's blocked, where resources sit, and how teams actually interact, independent of the formal reporting structure.

Report Includes:

Living Org Graph: The AI maintains a real-time map of the organization that reflects actual work patterns and dependencies, not the formal hierarchy on paper.

Tracks Blockers and Resources: The model identifies where work is stalled, where capacity exists, and where dependencies create bottlenecks — information that typically lives in scattered project management tools and managers' heads.

From Hierarchy to Intelligence: Block's framing is explicit: the org chart is an outdated abstraction, and an AI that models the real system is more useful than a document that describes the nominal one.

Why It Matters: Every large company has a formal org chart and an informal one. The informal one is what actually determines how work gets done. Block is using AI to make the informal org visible and queryable. If this approach scales, it changes how organizations are managed — not by restructuring teams, but by giving leadership an AI-mediated view of how the organization actually functions. This is one of the boldest enterprise AI bets of 2026.

CLAUDE NOW WORKS ACROSS EXCEL AND POWERPOINT WITH FULL SHARED CONTEXT

What's Happening: Anthropic extended Claude's enterprise capabilities to work simultaneously across Excel and PowerPoint with a single shared context window — available through AWS Bedrock, Google Vertex AI, and Microsoft Azure AI Foundry.

Report Includes:

Cross-Document Context: Claude can now reference data in a spreadsheet while generating a presentation about that data, maintaining a single reasoning thread across both file types.

Multi-Cloud Availability: The feature launched simultaneously on Bedrock, Vertex AI, and Microsoft Foundry, a deliberate multi-cloud strategy that avoids Anthropic locking itself into a single provider.

Enterprise-Grade Deployment: The integration is designed for enterprise IT environments, with the governance, access controls, and compliance features those organizations require.

Why It Matters: The gap between AI demos and enterprise AI adoption has been context fragmentation: your data lives in spreadsheets, your presentations live in slide decks, and no AI could work across both without losing context. Claude's cross-document capability directly attacks this problem. The multi-cloud launch strategy also signals that Anthropic is unwilling to cede enterprise distribution to any single cloud provider, which keeps its negotiating position strong.

APPLE IS ENDING CHATGPT'S EXCLUSIVE SIRI DEAL, MAKING WAY FOR CLAUDE AND GEMINI

What's Happening: Apple is preparing to end ChatGPT's exclusive integration with Siri, replacing it with a new Extensions system in iOS 27 that will allow Claude, Gemini, and other AI assistants to plug directly into Siri's interface. The announcement is expected at WWDC on June 8, 2026.

Report Includes:

Extensions System for Siri: Instead of Siri routing to a single AI provider, iOS 27 will introduce an extensibility framework that lets users choose which AI assistant powers different Siri capabilities.

Claude and Gemini Coming: Anthropic's Claude and Google's Gemini are expected to be available as Siri extensions at or shortly after launch, breaking OpenAI's exclusive hold.

WWDC June 8: The formal announcement is expected at Apple's annual developer conference, with developer documentation likely available immediately.

Why It Matters: When Apple first partnered with OpenAI for Siri, it looked like a coup for OpenAI. Ending the exclusivity less than a year later signals that Apple wants Siri to be an agentic platform, not a ChatGPT reseller. For Anthropic and Google, getting into Siri means access to Apple's installed base of 1.5 billion+ active iPhones, a distribution channel neither can build on its own. For OpenAI, it means losing the most valuable consumer AI distribution deal in the world. This reshuffles the entire consumer AI competitive landscape.

Thanks for reading.

See you next week with more AI agent updates.

— Rakesh’s Newsletter