Hi everyone 👋

Welcome back to AI Agent Weekly. This week, Google attacked the biggest cost bottleneck in LLM inference. Anthropic shipped three separate Claude upgrades. OpenAI quietly killed its video platform. And a 46-minute supply chain attack turned one of the most popular AI gateway libraries into a credential stealer. Let's get into it.

GOOGLE SHRINKS LLM MEMORY BY 6X WITH TURBOQUANT

What's Happening: Google Research published TurboQuant, a quantization algorithm that compresses the key-value (KV) cache of large language models down to as little as 3 bits, directly attacking the GPU memory bottleneck that makes long-context inference so expensive.

Report Includes:

6x Memory Reduction: Compressing KV cache to 3 bits cuts memory use by at least 6x while preserving benchmark performance, making long-context models dramatically cheaper to serve.

8x Attention Speedup: On NVIDIA H100s, 4-bit TurboQuant delivers up to 8x faster attention logit computation compared to standard 32-bit keys.

Targeting the Real Bottleneck: Most optimization work focuses on model weights; TurboQuant goes after the KV cache, which is what actually explodes during long conversations and document processing.

Why It Matters: The KV cache has been the silent tax on every long-context deployment. A 6x memory reduction at this fidelity means you can fit more concurrent sessions per GPU, run longer contexts without memory errors, and drop serving costs meaningfully. For anyone building on Claude, GPT-4, or Gemini with long context windows, TurboQuant's approach is the kind of infrastructure paper that quietly reshapes what's economically viable.
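To make the "more concurrent sessions per GPU" point concrete, here is a rough back-of-the-envelope sketch. The model shape (32 layers, 8 KV heads, head dimension 128), the 16-bit baseline, the 128,000-token context, and the 40 GiB cache budget are all hypothetical numbers chosen for illustration. The only figure taken from the report is the 6x reduction.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value):
    """Bytes of KV cache for one session (factor 2 = keys plus values)."""
    return 2 * layers * kv_heads * head_dim * seq_len * bits_per_value // 8


def sessions_that_fit(budget_bytes, per_session_bytes):
    """How many full-length sessions fit in a fixed cache budget."""
    return budget_bytes // per_session_bytes


# Hypothetical model and hardware numbers, for illustration only.
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128,
                          seq_len=128_000, bits_per_value=16)
compressed = baseline // 6          # apply the reported 6x reduction
budget = 40 * 1024**3               # assumed 40 GiB set aside for KV cache

before = sessions_that_fit(budget, baseline)
after = sessions_that_fit(budget, compressed)
print(f"per session: {baseline / 1024**3:.1f} GiB -> {compressed / 1024**3:.1f} GiB")
print(f"sessions per GPU: {before} -> {after}")
```

Under these assumed numbers, a 128k-token session drops from roughly 15.6 GiB of cache to about 2.6 GiB, and the same budget goes from 2 sessions to 15. Real deployments will differ with model shape, baseline precision, and allocator overhead.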

GOOGLE'S GEMINI 3.1 FLASH LIVE MAKES VOICE AI FEEL HUMAN

What's Happening: Google released Gemini 3.1 Flash Live, a real-time audio model designed to make voice AI feel less robotic in live, complex conversations — with benchmark-leading results on multi-step function calling.

Report Includes:

90.8% on ComplexFuncBench Audio: Multi-step function calling under constraints, a task that previous models fumbled badly, now hits 90.8% accuracy — noticeably ahead of prior versions.

Leading Scale AI's Audio MultiChallenge: With "thinking" mode enabled, it tops the leaderboard at 36.1%.

Human-Speed Responses: Latency is low enough that the model doesn't feel like waiting on a bot — which has been the core UX failure of voice AI since the category began.

Why It Matters: The gap between voice AI demos and production voice AI has always been latency plus reasoning quality. Gemini 3.1 Flash Live attacks both simultaneously. The function-calling benchmark scores matter especially for anyone building voice agents that need to do actual work — booking, querying, triggering actions — not just answering conversational questions.

GOOGLE LYRIA 3 PRO CAN NOW GENERATE FULL 3-MINUTE SONGS

What's Happening: Google upgraded its AI music model to Lyria 3 Pro, which can now generate fully structured songs up to three minutes long (intros, verses, choruses, bridges) rather than just short looping clips.

Report Includes:

Full Track Structure: Unlike the previous version (which was best for hooks and ideas), Lyria 3 Pro understands song architecture and can build a complete, structured track from a single prompt.

Up to 3 Minutes: The length ceiling is now enough for a real, releasable track, not just a demo snippet.

Integrated Across Google Products: Rolling out to YouTube, Google AI Studio, and other surfaces rather than as a standalone tool.

Why It Matters: The jump from 30-second loops to structured 3-minute tracks is what takes AI music from "interesting toy" to "usable production tool." This is Google's push to compete with Suno and Udio in the creator-facing AI music space. The distribution through existing Google products gives it immediate reach that standalone competitors can't match.

LITELLM GOT BACKDOORED IN A 46-MINUTE PYPI SUPPLY CHAIN ATTACK

What's Happening: On March 24, attackers pushed two malicious LiteLLM releases to PyPI (versions 1.82.7 and 1.82.8), injecting credential-stealing code into one of the most widely used AI gateway libraries. The packages were live for approximately 46 minutes before PyPI quarantined the project.

Report Includes:

Credential Exfiltration Payload: The malicious versions injected code into proxy_server.py and used .pth-style auto-loading to hunt for API keys and secrets on install or import, silently and without user interaction.

46-Minute Exposure Window: Fast containment, but any developer who pip installed LiteLLM during that window should audit their API keys immediately.

Active Investigation: LiteLLM's CEO and CTO published a security advisory on March 24, updated March 25 with community-contributed scanning scripts for GitHub Actions and GitLab CI pipelines.

Why It Matters: LiteLLM sits in the middle of a huge number of AI infrastructure stacks as the routing layer between applications and LLM APIs. A compromised version doesn't just steal secrets from the machine it runs on; it can expose every API key that application uses. This is a textbook supply chain attack hitting an extremely high-value target. If you're running LiteLLM in production, verify your version and rotate credentials regardless.
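For the "verify your version" step, a minimal sketch using only the Python standard library might look like this. The two version strings come from the report above; the function names are made up for this example, and the official advisory remains the authoritative list.

```python
from importlib import metadata

# Versions named in the report as malicious; confirm against the official advisory.
COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def is_compromised(version: str) -> bool:
    """True if the given version string is one of the flagged releases."""
    return version.strip() in COMPROMISED_VERSIONS


def check_installed(package: str = "litellm") -> str:
    """Report the status of the package in the current Python environment."""
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return "not installed"
    if is_compromised(installed):
        return f"COMPROMISED: {package} {installed}"
    return f"ok: {package} {installed}"


if __name__ == "__main__":
    print(check_installed())
```

Run it inside each virtual environment, container image, and CI runner that installs LiteLLM. A clean result only describes what is installed right now; it does not show what was installed during the exposure window, so it is not a substitute for rotating credentials.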

ANTHROPIC PUBLISHES A BLUEPRINT FOR KEEPING CLAUDE FOCUSED IN LONG CODING SESSIONS

What's Happening: Anthropic's engineering team published a detailed harness design for long-running autonomous coding workflows, essentially a framework for turning Claude into a reliable multi-hour engineering system rather than a one-shot autocomplete tool.

Report Includes:

State Externalized as Artifacts: Feature lists, progress logs, repo structure, and test scaffolding all get written to external files so Claude can resume work without losing context between sessions.

Restartable and Auditable: The design explicitly models Claude's workflow like a real engineering project; every step is logged, every decision is traceable, and failures don't wipe out hours of progress.

Practical Architecture Guidance: Written by Prithvi Rajasekaran from Anthropic's Labs team, the post goes into implementation specifics rather than staying high-level.

Why It Matters: The biggest unsolved problem in agentic coding is reliability over long sessions. Claude (and every frontier model) loses coherence when tasks stretch past a certain complexity threshold. Anthropic's harness design is the first official blueprint for working around that limitation at an infrastructure level. Engineering teams running Claude Code for multi-step projects should read this before architecting their own approaches.
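The "state externalized as artifacts" idea can be sketched in a few lines. This is not Anthropic's harness; it is a toy illustration of the general pattern, and the file layout, field names, and function names are all hypothetical.

```python
import json
from pathlib import Path


def load_state(path):
    """Return saved state, or a fresh one if no progress file exists yet."""
    p = Path(path)
    if p.exists():
        return json.loads(p.read_text(encoding="utf-8"))
    return {"features": {}, "log": []}


def save_state(path, state):
    """Write to a temp file, then swap it in, so a crash mid-write keeps the old file."""
    p = Path(path)
    tmp = p.with_name(p.name + ".tmp")
    tmp.write_text(json.dumps(state, indent=2), encoding="utf-8")
    tmp.replace(p)


def record_step(path, feature, status, note):
    """Update a feature's status and append an auditable log entry."""
    state = load_state(path)
    state["features"][feature] = status
    state["log"].append({"feature": feature, "status": status, "note": note})
    save_state(path, state)
    return state


def next_pending(path, planned):
    """First planned feature not yet marked done, so a new session knows where to resume."""
    done = load_state(path)["features"]
    for feature in planned:
        if done.get(feature) != "done":
            return feature
    return None
```

A fresh session would call next_pending first, do the work, then call record_step. Because everything lives in a file rather than in the model's context, a restart loses nothing and the log doubles as an audit trail.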

CLAUDE CAN NOW CONTROL YOUR DESKTOP FROM YOUR PHONE

What's Happening: Anthropic launched Cowork Dispatch, a mobile control layer for its Claude Cowork desktop agent. Text Claude from your phone, and it executes tasks (file edits, browser sessions, report generation) on your powered-on desktop computer while you're away from it.

Report Includes:

Outcome-Based Delegation: You specify what you want done, not how to do it. Claude uses your desktop's full capabilities to execute.

Single Persistent Thread: One conversation context spans both your phone and desktop: no re-explaining, no session restarts when you return to your machine.

Full Cowork Toolkit on Mobile: Files, browser, email, and calendar are all accessible through the mobile interface.

Why It Matters: This is the async AI agent model that knowledge workers have been waiting for. Assigning a multi-hour task from your phone and returning to finished results changes how you think about delegation. It's less about having AI help you do things, and more about AI doing things while you're doing something else. The persistent context thread is the key technical detail — without that, it's just a remote trigger.

CLAUDE CODE'S AUTO MODE LETS AI DRIVE YOUR CODING SESSION WITHOUT ASKING PERMISSION FOR EVERY STEP

What's Happening: Anthropic released Auto Mode for Claude Code, a middle-ground permission setting that lets the model execute multi-step coding tasks autonomously without requiring confirmation for each action, while routing every tool call through a risk classifier before it runs.

Report Includes:

Risk Classifier on Every Action: File writes, shell commands, and network actions each pass through a safety layer before execution. Low-risk actions proceed automatically; high-risk ones still surface for confirmation.

Middle Setting Between Two Extremes: Auto Mode sits between "ask permission for everything" and "no guardrails", the right default for most engineering workflows.

Visible in Claude Code v2.0.76: The terminal now displays when Auto Mode is active, giving developers a clear signal of what operating mode they're in.

Why It Matters: The confirm-every-action workflow broke the value proposition of agentic coding. Developers kept getting interrupted for trivially safe operations. Auto Mode's risk classifier approach is how you make autonomous coding usable without just turning off all safety checks. This is the friction reduction that was missing from Claude Code's earlier releases.
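To show the shape of "classify first, then run or ask," here is a deliberately crude rule-based gate. It is not how Claude Code's classifier works (the report does not describe its internals); the action format, the token list, and the /workspace path are all invented for this sketch.

```python
from pathlib import PurePosixPath

# Hypothetical substrings that push a shell command into the "ask first" bucket.
RISKY_SHELL_TOKENS = ("rm -rf", "sudo", "curl", "wget", "git push --force")


def classify(action: dict, workspace: str = "/workspace") -> str:
    """Return 'low' or 'high' for a proposed action (toy rules only)."""
    kind = action.get("kind")
    if kind == "shell":
        command = action.get("command", "")
        return "high" if any(tok in command for tok in RISKY_SHELL_TOKENS) else "low"
    if kind == "file_write":
        path = PurePosixPath(action.get("path", ""))
        inside = (
            path.is_absolute()
            and ".." not in path.parts
            and PurePosixPath(workspace) in path.parents
        )
        return "low" if inside else "high"
    return "high"  # network calls and unknown action kinds default to high


def gate(action: dict, workspace: str = "/workspace") -> str:
    """'run' for low-risk actions, 'ask' when a human should confirm."""
    return "run" if classify(action, workspace) == "low" else "ask"


print(gate({"kind": "shell", "command": "pytest -q"}))
print(gate({"kind": "file_write", "path": "/etc/passwd"}))
```

The design point is the default: anything the rules do not recognize falls into "ask," so the gate fails toward interruption rather than toward silent execution.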

OPENAI SHUTS DOWN SORA

What's Happening: OpenAI is discontinuing its Sora AI video generation platform, citing compute costs, content safety concerns, and insufficient ROI. The platform is being shut down rather than iterated on.

Report Includes:

Compute Economics Didn't Work: High-quality video generation burns orders of magnitude more GPU time than text or image generation, and the business case never materialized to justify it.

Content Risk Exposure: Sora's photorealistic output created serious non-consensual content risks, and Hollywood rights holders pushed back as users generated unauthorized content using recognizable IP and likenesses.

Not a Pivot — A Discontinuation: This isn't a rebrand or restructuring. Sora is being shut down.

Why It Matters: Sora launched to enormous hype in early 2024. Shutting it down two years later is an admission that AI video generation at the frontier requires safety and business model solutions that don't exist yet. This gives Runway, Kling, and Veo clear air in the enterprise video space. It also signals that OpenAI is making harder resource allocation decisions: not everything gets kept alive on brand value alone.

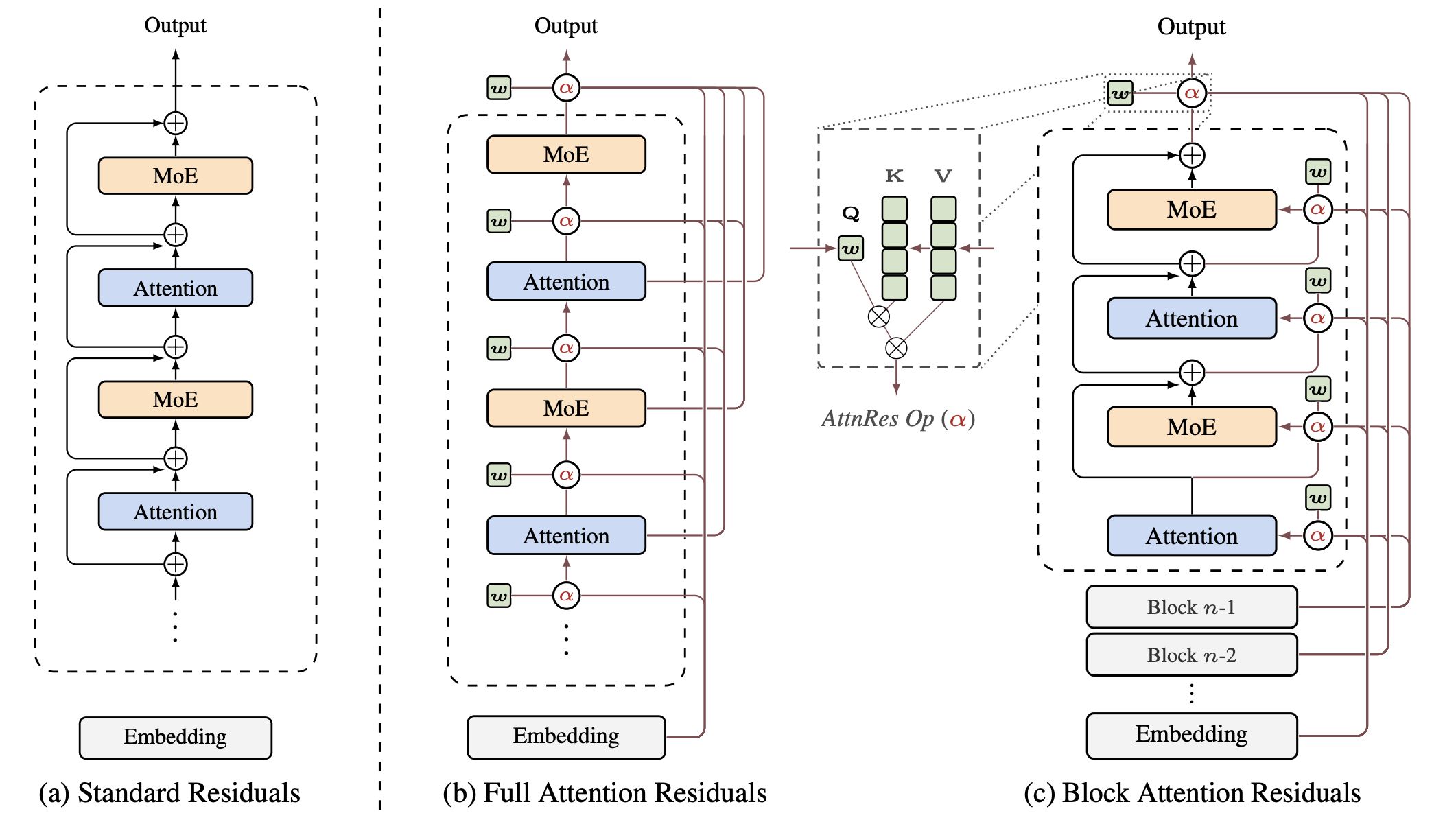

MOONSHOT AI'S ATTENTION RESIDUALS LET TRANSFORMERS SELECTIVELY RECALL EARLIER LAYERS

What's Happening: Moonshot AI (the Kimi team) published a technical paper introducing Attention Residuals, a new architecture that replaces standard residual connections in transformers with a softmax attention mechanism over preceding layer outputs, letting each layer selectively pull the features it needs from its entire processing history.

Report Includes:

The Problem with Standard Residuals: In normal transformers, each layer's output gets summed into a single residual stream with uniform weights. As depth increases, early-layer representations get diluted and buried.

Attention Over Depth: AttnRes replaces fixed summation with learned, input-dependent weighting: each layer queries its full layer history and retrieves what's actually relevant.

Open Source: Code available at github.com/MoonshotAI/Attention-Residuals.

Why It Matters: Residual connections have been a foundational design choice in transformers since the architecture's introduction. Attention Residuals directly challenges that choice, and the problem it targets (early-layer information degrading with depth) is real and documented. This is the kind of architectural paper that quietly influences the next generation of model designs. Worth reading if you're tracking frontier model architecture research.
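The core idea, softmax-weighted mixing over earlier layer outputs instead of a uniform sum, fits in a short pure-Python sketch. This is an illustration of the general mechanism described above, not the paper's exact formulation: using the layer outputs directly as keys, the single query vector, and the scaling by the square root of the dimension are assumptions made here.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend_over_depth(query, layer_outputs):
    """Mix earlier layer outputs with input-dependent weights.

    query: vector for the current layer (assumed learned or input-derived).
    layer_outputs: one vector per preceding layer, all the same length.
    Returns (weights, mixed_vector).
    """
    d = len(query)
    scores = [
        sum(q * h for q, h in zip(query, out)) / math.sqrt(d)
        for out in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * out[i] for w, out in zip(weights, layer_outputs))
        for i in range(d)
    ]
    return weights, mixed


history = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
uniform_w, _ = attend_over_depth([0.0, 0.0], history)   # no preference
focused_w, _ = attend_over_depth([10.0, 0.0], history)  # favors first feature
print(uniform_w)
print(focused_w)
```

With an all-zero query every earlier layer gets equal weight, which is the uniform-mixing behavior that standard residual streams resemble. A non-zero query shifts weight toward the layers whose outputs match it, which is the selective recall the paper is after.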

BRAVE IS BACKING A BID TO MAKE .AGENT A REAL INTERNET DOMAIN

What's Happening: A community of 3,000+ members (700+ companies and 2,300+ developers) is applying through ICANN to establish .agent as an official top-level domain, backed by Brave. The goal is a community-governed namespace for AI agents and agentic applications, structured so no single company can lock it down.

Report Includes:

Community-Run Governance: Rather than having .agent controlled by a single entity (a Google or OpenAI equivalent), the application proposes collective governance to prevent extractive pricing or gatekeeping.

DNS Layer for the Agentic Web: Proponents see .agent as the naming infrastructure for AI agents: human-readable, consistent identifiers analogous to what .com did for websites.

Brave as a Credible Backer: Brave's involvement gives the bid more institutional weight than a typical community application.

Why It Matters: If AI agents become persistent, addressable entities that operate across services and communicate with each other, they'll need stable identifiers. .agent is a bet that this infrastructure needs to be built now, before one company controls it. Whether ICANN approves it or not, the conversation it's forcing about namespace governance for agentic systems is worth having.

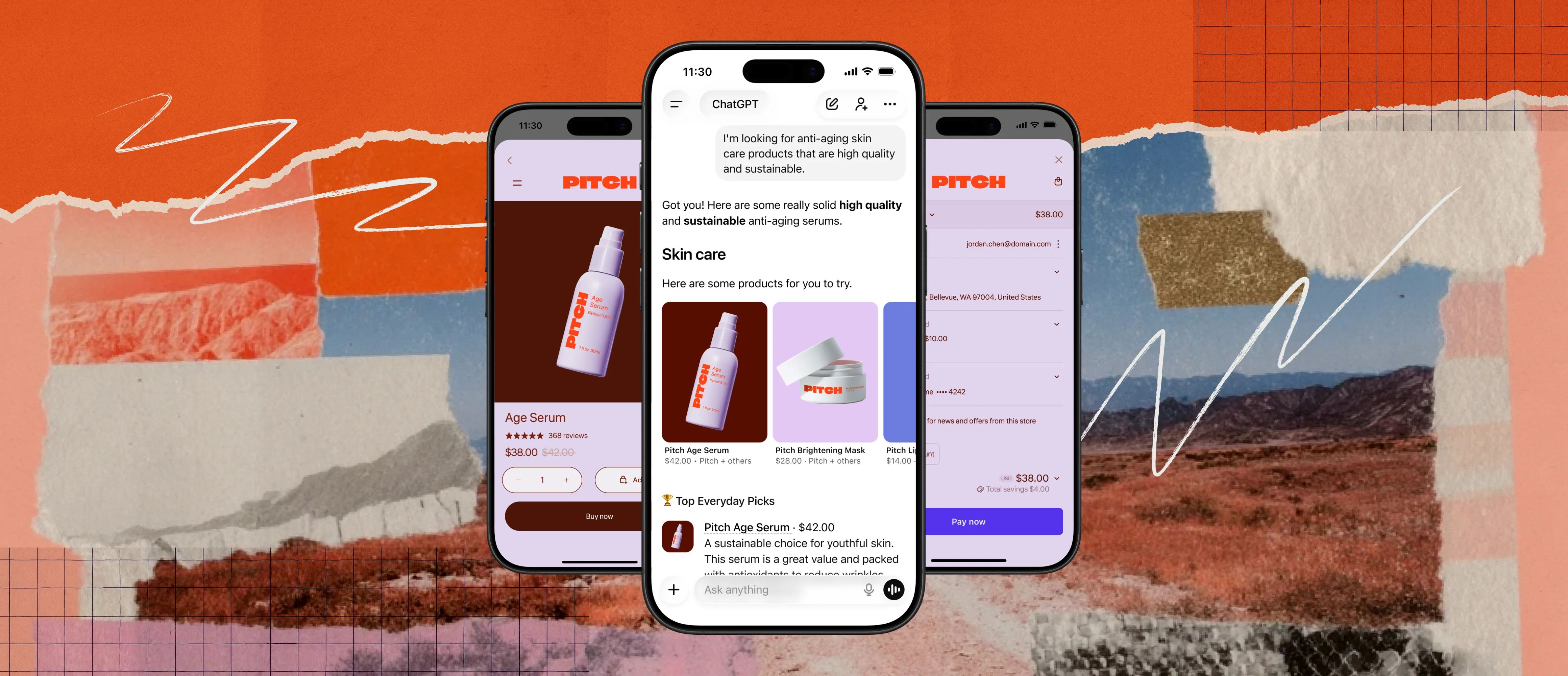

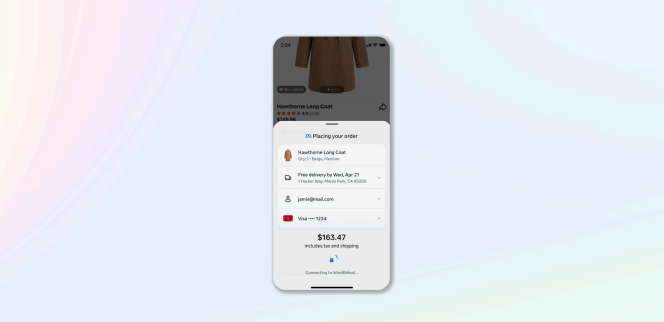

SHOPIFY PRODUCTS ARE NOW PURCHASABLE DIRECTLY INSIDE CHATGPT

What's Happening: Shopify has enabled product discovery and purchase completion inside ChatGPT conversations, compressing the e-commerce funnel from "browse then click away" to "discover and buy in one session" — currently available for US users.

Report Includes:

End-to-End in One Conversation: Previously, AI chat was top-of-funnel only. Users asked for recommendations, then left to browse the actual store. Now the purchase happens without leaving ChatGPT.

Opens the Door for Small Merchants: Millions of Shopify stores, including small DTC brands, now have a shot at appearing in ChatGPT product recommendations alongside larger retailers.

Direct Counter to OpenAI's Instant Checkout Failure: Shopify's native integration approach sidesteps the trust and reliability issues that killed OpenAI's own Instant Checkout feature.

Why It Matters: This is a genuine second attempt at AI-native commerce after OpenAI's first attempt failed. The difference is infrastructure: Shopify's merchant relationships and checkout reliability bring something ChatGPT couldn't build on its own. If conversion rates hold up, it puts significant pressure on Google Shopping and traditional search-driven e-commerce discovery.

CLOUDFLARE DYNAMIC WORKERS RUN AI-GENERATED CODE 100X FASTER WITHOUT CONTAINERS

What's Happening: Cloudflare launched Dynamic Workers, a sandbox infrastructure that spins up V8 isolates on the fly to execute AI-generated code — 100x faster startup and up to 100x more memory-efficient than traditional containers.

Report Includes:

V8 Isolates, Not Containers: The performance gains come from running V8 isolates instead of full container images, the same isolation approach Chrome uses per browser tab, applied to server-side code execution.

"Code Mode" Architecture Pattern: Cloudflare is explicitly promoting a design where LLMs write code against APIs rather than making sequential tool calls, a pattern they argue is faster and more reliable for agentic workflows.

Built for AI-Generated Code at Scale: The infrastructure is designed with the assumption that code is being generated, not hand-written, and needs to be executed and discarded rapidly.

Why It Matters: The bottleneck in agentic workflows is often not the LLM — it's the execution environment. Spinning up a container to run a small generated script takes seconds. V8 isolates take milliseconds. At scale, that difference determines whether agentic workflows feel real-time or feel like a batch process. Cloudflare is positioning this as the default execution layer for the next generation of AI tooling.

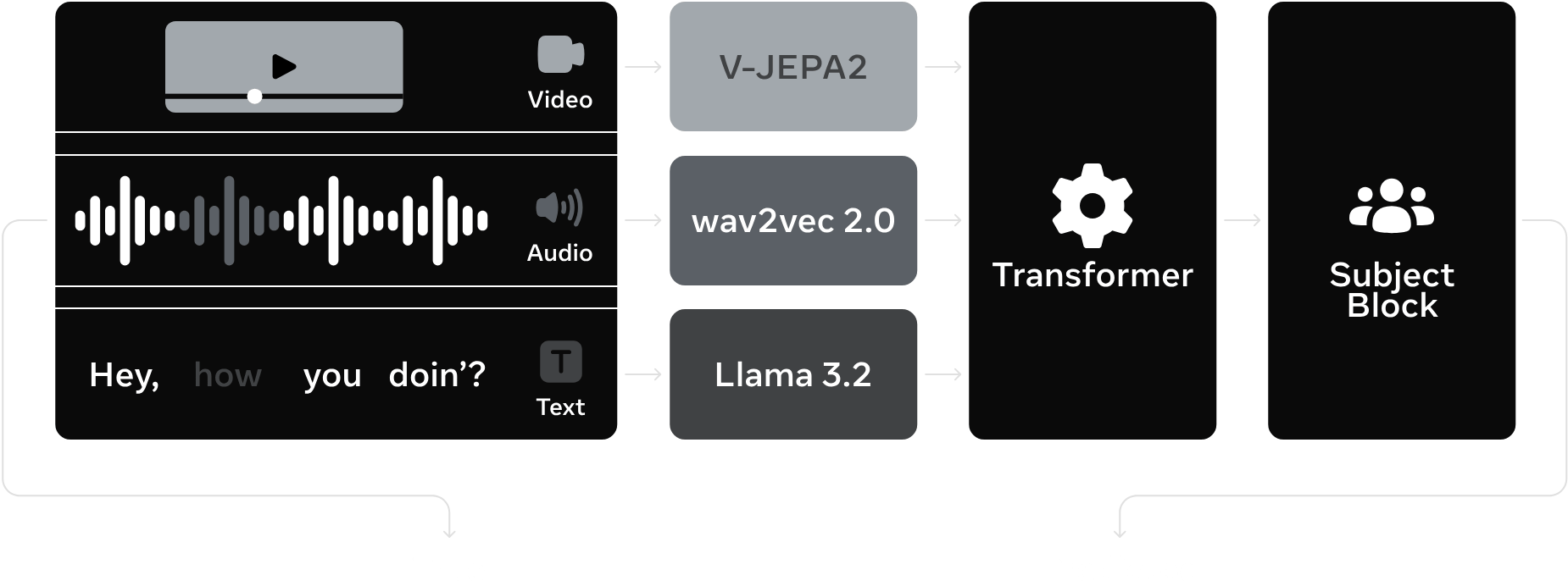

META'S TRIBE V2 PREDICTS BRAIN ACTIVITY ACROSS 1,000 CORTICAL REGIONS FROM VIDEO

What's Happening: Meta released TRIBE V2, a brain-AI model that predicts how your brain responds while watching video by simultaneously processing visual, audio, and language signals — the first multimodal approach to fusing all three streams for neural activity prediction.

Report Includes:

Three Streams, One Model: Most prior brain-AI work used image-only or text-only inputs. TRIBE fuses video (V-JEPA2), audio (wav2vec 2.0), and language (Llama 3.2) simultaneously through a transformer and subject-specific block.

1,000+ Cortical Parcels: The model predicts activity across approximately 1,000 brain regions with mean correlations above 0.2 and noise-normalized scores above 0.5 on standard benchmarks.

Trained on ~80 Hours of Video: The training set used people watching real TV and movies — naturalistic stimuli rather than controlled lab conditions.

Why It Matters: Predicting brain responses to naturalistic media has applications in neuroscience, content recommendation, and brain-computer interface development. The multimodal fusion approach also demonstrates that brain activity during rich media consumption is not explainable from any single sensory channel alone. TRIBE V2 is a research model, not a product — but the methodology is advancing quickly.

ARC-AGI-3 LAUNCHES AN INTERACTIVE REASONING BENCHMARK TO MEASURE HOW CLOSE AI IS TO AGI

What's Happening: ARC Prize released ARC-AGI-3, a new benchmark version that moves from static pattern tasks to interactive multi-step games — requiring models to choose sequences of actions rather than produce single-shot outputs, and deliberately designed to resist brute-force scaling.

Report Includes:

Interactive, Not Static: ARC-AGI-3 tasks require models to take actions, observe results, and adapt — much closer to real-world reasoning than one-shot puzzle completion.

Scaling-Resistant Design: Tasks are deliberately novel and unlike training data, so raw compute and memorization provide minimal advantage.

Open to Both Humans and AI: The leaderboard tracks both human and AI performance, providing a grounded baseline for what human-like reasoning actually looks like on these tasks.

Why It Matters: Every benchmark eventually gets saturated by scale. ARC-AGI-3's interactive design makes saturation harder and the scores more meaningful. If you're tracking the gap between current AI capabilities and genuine general reasoning, this is the benchmark to watch in 2026.

STRIPE ENABLES ONE-TAP CHECKOUT INSIDE FACEBOOK ADS

What's Happening: Stripe launched a native checkout integration for Facebook that turns ads into direct purchase surfaces — using saved Meta wallet details to complete orders without leaving the feed, no redirects, no re-entering payment information.

Report Includes:

Zero-Redirect Purchase Flow: Users tap "Buy now" on an ad and the order completes inside Facebook using their saved Meta wallet. The merchant site never enters the flow.

Ad as the Purchase Surface: Discovery and transaction happen in the same moment, collapsing the conversion funnel to its minimum length.

Powered by Stripe's Reliability: Stripe's payment infrastructure handles the transaction, which addresses the merchant trust issue that has plagued social commerce integrations.

Why It Matters: Social commerce has promised this for years and repeatedly underdelivered on conversion. The Stripe-Meta combination is the most credible infrastructure play yet: Stripe brings payment trust, Meta brings consumer habits and saved wallet data. For direct-to-consumer brands, this changes the math on Facebook ad ROI significantly if it converts at even close to website-native rates.

CLINE KANBAN LETS YOU ORCHESTRATE MULTIPLE AI CODING AGENTS FROM ONE BOARD

What's Happening: Cline released Cline Kanban, a local command center for coordinating multiple AI coding agents (Claude Code, Codex, Cline, and others) through a single kanban-style interface rather than juggling separate terminals and sessions.

Report Includes:

Single UI Across Multiple Agents: Create, triage, and chain tasks across different AI coding agents without context-switching between tools.

Git-Integrated Task Cards: Spin it up from any repo via CLI, describe work in a sidebar chat, and it auto-creates task cards tied to real code changes in that repository.

Local-First: Runs locally, not as a cloud service, which matters for teams with IP and security constraints.

Why It Matters: Multi-agent coding workflows are becoming real — different models have different cost/performance tradeoffs for different task types. The bottleneck isn't the models; it's the coordination overhead. Cline Kanban is an early attempt at solving that orchestration layer without requiring teams to build bespoke internal tooling. Worth watching as the multi-agent coding space matures.

Thanks for reading.

See you next week with more AI agent updates.

— Rakesh's Newsletter