The standard answer is "it depends."

That's true. But it's useless on its own. This piece breaks down what it actually depends on, specific to your company, so you can figure that out before spending months building something that won't survive its first compliance review.

Why Pattern Selection Is an Enterprise Problem, Not a Technical One

Here's what happens almost every time an enterprise team builds its first multi-agent system: they pick the same pattern. One head agent, a few workers, everything reports upward.

That's not a design decision. That's copying what looks familiar.

And at the enterprise level, the cost of getting this wrong isn't just a refactor. It's a botched release. It's six months spent building coordination logic that nobody can inspect. It's your compliance team blocking production because no one can explain why the system got the wrong answer. It's a 2 AM production failure because the pattern your developers picked can't handle real transaction volume.

Anthropic published five multi-agent coordination patterns earlier this week: generator-verifier, orchestrator-subagent, agent teams, message bus, and shared state. These aren't ranked from simple to advanced. They're different tools for different situations. Each one comes with real tradeoffs.

Choosing the right pattern means weighing those tradeoffs against what your organization actually needs. The pattern is one input. Your governance requirements, your existing infrastructure, how mature your team is, how much downtime you can tolerate: those are the rest.

Most organizations learn this by picking the wrong one first.

The Five Patterns, Adapted to Enterprise Contexts

Here is how each pattern translates from developer-centric examples to enterprise contexts.

Generator-Verifier

One agent generates an output. A second agent assesses it. If the output isn't good enough, the second agent sends back specific feedback, and the first agent tries again. This loop runs until the output passes or hits a retry limit.

Enterprise example: Contract review. A generation agent drafts contract language based on deal terms. A verification agent checks every clause against legal requirements, regulatory rules, and the company's approved language. When something fails, the generation agent gets told exactly what went wrong: the liability cap doesn't match that region's rules, or the contract is missing GDPR language for an EU partner.

Why enterprises care: Every check gets logged. Every rejection comes with a reason attached. The audit trail is inherent to the process. It's one of the most audit-friendly patterns around.
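To make the loop and its audit trail concrete, here is a minimal sketch. Everything in it is hypothetical: `run_generator_verifier`, `AuditEntry`, and the toy `toy_generate` and `toy_verify` functions stand in for real model calls and a real rule set. It only illustrates the control flow: generate, verify, feed the rejection reason back, and stop at a retry limit.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class AuditEntry:
    attempt: int
    draft: str
    passed: bool
    reason: str


@dataclass
class LoopResult:
    output: Optional[str]  # None means the retry limit was hit
    audit_log: List[AuditEntry] = field(default_factory=list)


def run_generator_verifier(
    generate: Callable[[str, Optional[str]], str],
    verify: Callable[[str], Tuple[bool, str]],
    task: str,
    max_attempts: int = 3,
) -> LoopResult:
    result = LoopResult(output=None)
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        draft = generate(task, feedback)
        passed, reason = verify(draft)
        # Every attempt is recorded, whether it passed or not.
        result.audit_log.append(AuditEntry(attempt, draft, passed, reason))
        if passed:
            result.output = draft
            return result
        feedback = reason  # the generator sees exactly why it was rejected
    return result  # hard stop: no output, but a full log of what was tried


# Toy stand-ins for the two agents (assumptions, not real model calls).
def toy_generate(task: str, feedback: Optional[str]) -> str:
    clause = "Liability is capped at fees paid."
    if feedback and "GDPR" in feedback:
        clause += " Personal data is processed under GDPR."
    return clause


def toy_verify(draft: str) -> Tuple[bool, str]:
    if "GDPR" not in draft:
        return False, "Missing GDPR language for an EU partner"
    return True, "ok"


result = run_generator_verifier(toy_generate, toy_verify, "EU reseller contract")
for entry in result.audit_log:
    print(entry.attempt, entry.passed, entry.reason)
```

When the loop returns with `output` set to `None`, the caller is expected to escalate to a human rather than ship the last draft.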

Orchestrator-Subagent

An orchestrator divides an assignment into parts, assigns them to specialized sub-agents, and integrates the outputs generated by these agents.

Enterprise example: Quarter-end financial close. The orchestrator manages the full close process. It assigns sub-agents to handle accounts receivable reconciliation, intercompany eliminations, variance analysis, and management reporting. Each sub-agent gets access to the specific databases it needs and works its piece independently.

Why enterprises care: This pattern is easy to explain. Leadership gets it immediately because it mirrors how most teams already work: one manager, several specialists, clear delegation. But it breaks down when those sub-agents need to share information with each other mid-process. And in a financial close, that happens constantly. AR reconciliation affects intercompany eliminations. Variance analysis depends on numbers that are still being finalized two steps over. The orchestrator becomes a bottleneck when every cross-process update has to route through it.

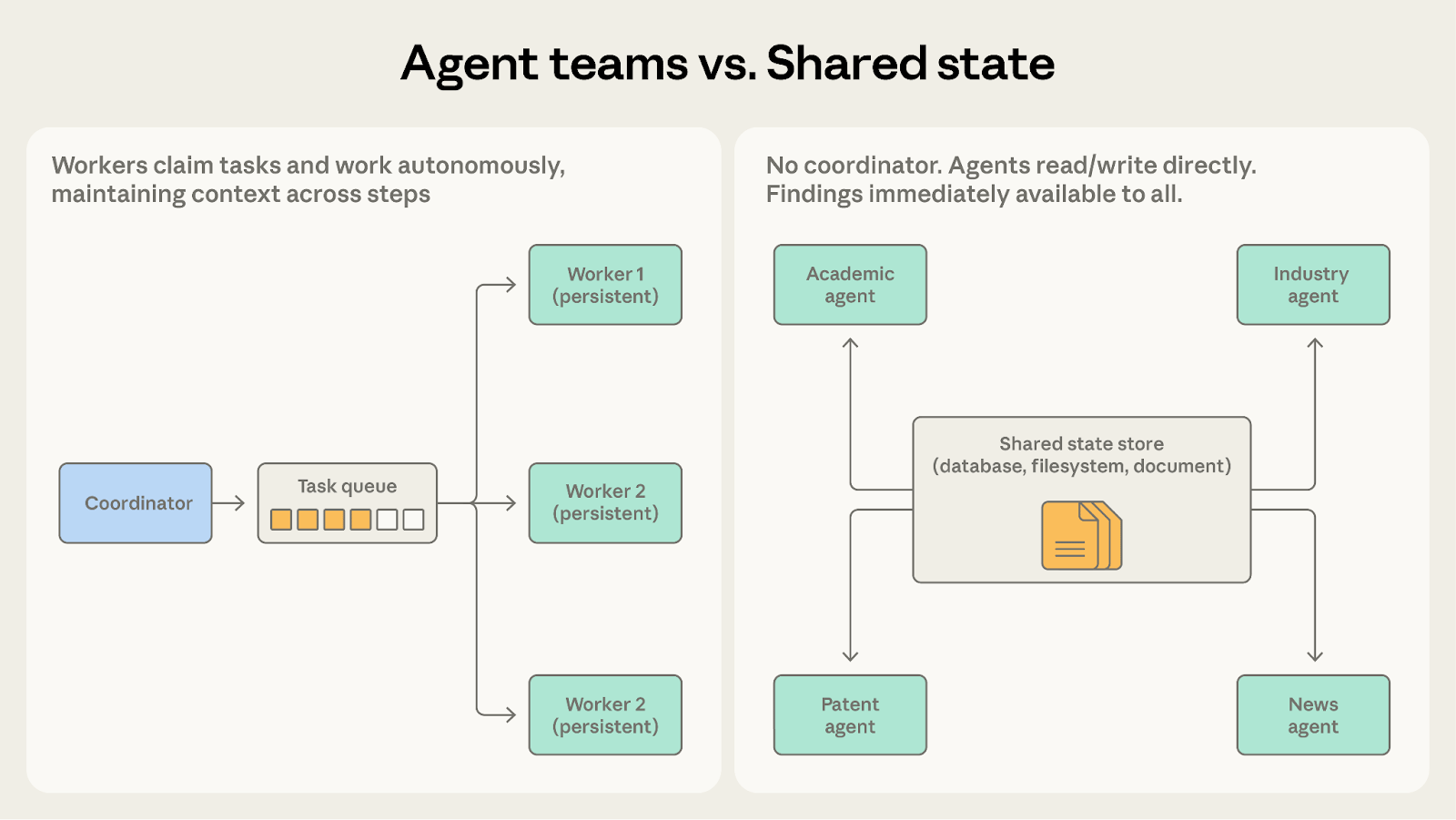

Agent Teams

Multiple agents run at the same time, each one focused on its own area. They stay active long enough to build up real knowledge of their domain. A coordinator hands out work and collects results, but stays out of the way while the agents do their jobs.

Enterprise example: Global supply chain management. You assign one agent per region: Americas, EMEA, Asia-Pacific. Each agent learns its territory over time. It tracks local suppliers, shipping delays, currency risks, and regional regulations. These agents run in parallel, each managing its own supply chain and flagging problems to the coordinator as they come up. Because they persist across tasks, they get better the longer they run.

Why enterprises care: The accumulated knowledge pays off. An agent that already knows which APAC suppliers tend to delay shipments in monsoon season will catch problems faster than one starting from scratch every time. Error rates drop on repeated tasks because the agent isn't relearning context it already has.

The catch: these agents often need to touch shared systems, like a common inventory database or a centralized accounting platform. If you don't build clear boundaries around who can read and write what, two regional agents can update the same record and produce conflicting numbers. The coordination problem isn't between the agents themselves. It's about controlling access to the systems they share.
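One way to draw those boundaries is a write-ownership check in front of the shared system. The sketch below is an illustration under assumptions: `GuardedInventory`, `OwnershipError`, and the region and agent names are invented for this example, and a real deployment would enforce the same rule in the database or API layer rather than in an in-memory class.

```python
from typing import Dict, Optional, Tuple


class OwnershipError(PermissionError):
    """Raised when an agent writes to a region it does not own."""


class GuardedInventory:
    def __init__(self, owners: Dict[str, str]) -> None:
        self._owners = owners  # region -> the only agent allowed to write there
        self._records: Dict[Tuple[str, str], int] = {}

    def write(self, agent_id: str, region: str, sku: str, quantity: int) -> None:
        owner = self._owners.get(region)
        if owner != agent_id:
            raise OwnershipError(
                f"{agent_id} cannot write to {region}; owner is {owner}"
            )
        self._records[(region, sku)] = quantity

    def read(self, region: str, sku: str) -> Optional[int]:
        # Reads are open to every agent; only writes are restricted.
        return self._records.get((region, sku))


inventory = GuardedInventory({"EMEA": "emea-agent", "APAC": "apac-agent"})
inventory.write("apac-agent", "APAC", "SKU-1", 40)

try:
    inventory.write("emea-agent", "APAC", "SKU-1", 55)  # wrong owner
except OwnershipError as err:
    print("rejected:", err)
```

The rejected write surfaces as an error the coordinator can see, instead of silently replacing the APAC agent's number.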

Message Bus

Agents don't talk to each other directly. They communicate through a shared event layer. Each agent listens for the events it cares about and ignores everything else. You can add new agents without changing any of the existing ones.

Enterprise example: Compliance monitoring. Regulatory alerts flow in from news feeds, regulatory body RSS feeds, and legal databases. From there, a chain of agents picks them up. A classification agent sorts each alert by type and jurisdiction. A policy impact agent figures out which internal policies are affected. A remediation agent drafts the needed policy changes. A sign-off agent routes the whole package to the right human reviewer. When a new regulation category shows up or you expand into a new jurisdiction, you just add another agent. Nothing else in the pipeline needs to change.

Why enterprises care: This pattern scales sideways. New compliance mandates, new business lines, new data sources: each one is just another agent plugged into the bus. The existing pipeline keeps running untouched.

The tradeoff is visibility. When an alert slips through the cracks, you need to trace exactly where it stalled and why. That means comprehensive logging from day one, not added later. If you don't build observability into the bus at deployment, debugging a missed alert across six agents becomes difficult.
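As a rough illustration of what tracing a stalled alert can look like, the sketch below uses a tiny in-memory bus that stamps every event with a correlation ID and records each hop. The `Bus` class, the topic names, and the two handlers are assumptions made for this example; a production bus such as Kafka would carry the ID in message headers and ship the trace to a log store.

```python
import uuid
from collections import defaultdict
from typing import Callable, Dict, List, Optional, Tuple


class Bus:
    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable]] = defaultdict(list)
        self.trace: List[Tuple[str, str, str]] = []  # (correlation_id, topic, handler)

    def subscribe(self, topic: str, handler: Callable) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: dict, correlation_id: Optional[str] = None) -> str:
        correlation_id = correlation_id or str(uuid.uuid4())
        handlers = self._subscribers.get(topic, [])
        if not handlers:
            # Nobody is listening: record it instead of letting it vanish.
            self.trace.append((correlation_id, topic, "UNHANDLED"))
        for handler in handlers:
            self.trace.append((correlation_id, topic, handler.__name__))
            handler(self, payload, correlation_id)
        return correlation_id

    def path_of(self, correlation_id: str) -> List[Tuple[str, str]]:
        return [(t, h) for cid, t, h in self.trace if cid == correlation_id]


def classify(bus: Bus, alert: dict, correlation_id: str) -> None:
    bus.publish("alert.classified", {**alert, "type": "privacy"}, correlation_id)


def assess_policy_impact(bus: Bus, alert: dict, correlation_id: str) -> None:
    bus.publish("policy.impacted", {**alert, "policy": "data-retention"}, correlation_id)


bus = Bus()
bus.subscribe("alert.raw", classify)
bus.subscribe("alert.classified", assess_policy_impact)
# No remediation agent is subscribed to "policy.impacted" yet.
cid = bus.publish("alert.raw", {"source": "regulator feed"})
for topic, handler in bus.path_of(cid):
    print(topic, "->", handler)
```

The last entry in the path shows the alert stalled at `policy.impacted` with no handler, which is the kind of answer you want to be able to get without reading six agents' logs by hand.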

Shared State

Every agent reads from and writes to the same workspace. There's no coordinator directing traffic. Agents see what other agents have found and build on it directly.

Enterprise example: Financial audit prep across multiple teams. An agent handling revenue recognition writes its findings to a shared audit workspace. An agent reviewing related-party transactions picks up those findings right away and adjusts what it looks at next. A third agent analyzing management estimates reads both sets of results and spots where the assumptions don't line up. Everyone's work is visible to everyone else in real time, so the audit comes together as a whole instead of getting stitched together at the end.

Why enterprises care: This is the fastest path to genuine collaboration between agents. No coordinator sitting in the middle, relaying messages back and forth, slowing things down. Agents react to each other's work directly, which produces a more complete picture than passing results through a chain.

The risk is that nobody is in charge. System behavior comes from whatever the agents happen to do, not from a plan someone designed. If two agents update conflicting assumptions at the same time, there's no coordinator to catch it. You need to define clear rules before deployment: when does work stop, how do agents avoid overwriting each other, what happens when findings contradict. If those rules aren't locked down up front, the same flexibility that makes this pattern powerful will produce unpredictable results under load.

The Enterprise Variables That Determine Which Pattern Is the Solution

The five patterns form the baseline. Four enterprise-specific factors will determine the appropriate choice.

Variable 1: Governance and Auditability Requirements

Different patterns create different audit trails. In regulated industries, this isn't a nice-to-have. It's a gate.

Generator-verifier gives you the strongest audit trail out of the box. Every generation attempt, every verification check, every rejection with its reasoning, and the final accepted output. It's all there without extra work.

Orchestrator-subagent gives you a clean decision path. The orchestrator sent task X to subagent Y, which produced result Z, and the orchestrator combined it into final output W. Easy to trace, easy to explain.

Message bus requires you to earn your audit trail. Events flowing through a bus can be dropped or misrouted without triggering any errors. If your regulators need you to explain every decision in a process, your logging infrastructure has to be airtight before you go to production. Not after.

Shared state is the hardest to audit. Multiple agents reading and writing to the same store at the same time means you need timestamps and version tracking on every update just to reconstruct what happened in what order. Teams that skip this step early will have a bad time explaining outputs to auditors later.
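A minimal sketch of that bookkeeping, assuming an append-only write log: every update carries a version number, a UTC timestamp, and the writing agent's ID, and the state at any point can be rebuilt by replaying the log. `AuditedStore`, `Write`, and the agent and key names are hypothetical; a real store would persist the log durably.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)
class Write:
    version: int
    timestamp: datetime
    agent_id: str
    key: str
    value: Any


class AuditedStore:
    def __init__(self) -> None:
        self._history: List[Write] = []  # append-only; nothing is overwritten

    def write(self, agent_id: str, key: str, value: Any) -> Write:
        entry = Write(
            version=len(self._history) + 1,
            timestamp=datetime.now(timezone.utc),
            agent_id=agent_id,
            key=key,
            value=value,
        )
        self._history.append(entry)
        return entry

    def replay(self, up_to_version: Optional[int] = None) -> Dict[str, Any]:
        """Rebuild the state as it looked after a given version."""
        state: Dict[str, Any] = {}
        for entry in self._history:
            if up_to_version is not None and entry.version > up_to_version:
                break
            state[entry.key] = entry.value
        return state

    def history_for(self, key: str) -> List[Write]:
        return [entry for entry in self._history if entry.key == key]


store = AuditedStore()
store.write("revenue-agent", "q3_revenue_risk", "low")
store.write("related-party-agent", "related_party_flags", 2)
store.write("estimates-agent", "q3_revenue_risk", "elevated")

print(store.replay(up_to_version=2))  # what the third agent saw before it wrote
print([w.agent_id for w in store.history_for("q3_revenue_risk")])
```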

The question to ask before choosing a pattern: based on your regulatory requirements, what sequence of events do you need to be able to reconstruct?

Variable 2: Tolerance of Partial Failures versus Hard Failures

Every pattern fails differently. Matching your pattern to your organization's failure tolerance isn't optional.

Generator-verifier fails cleanly. If the generator can't satisfy the verifier after a set number of attempts, the system stops. No partial output. Nothing slips through. This works when a wrong answer is worse than no answer at all.

Orchestrator-subagent produces partial results. If three out of five subagents succeed and two fail, the orchestrator can assemble what it has and flag what's missing. That's useful when partial output still has value, like a market analysis covering four out of five regions.
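The partial-result behavior can be sketched as follows. This is an illustration, not a reference design: `orchestrate` and the region functions are made up for the example, and the subagents are plain functions run on a thread pool rather than real agents.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def orchestrate(subtasks: Dict[str, Callable[[], str]]) -> dict:
    """Run every subtask, keep what succeeded, and flag what is missing."""
    completed: Dict[str, str] = {}
    missing: Dict[str, str] = {}
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(task) for name, task in subtasks.items()}
        for name, future in futures.items():
            try:
                completed[name] = future.result()
            except Exception as exc:  # one failed subagent must not sink the rest
                missing[name] = repr(exc)
    return {"completed": completed, "missing": missing, "is_partial": bool(missing)}


def analyze_americas() -> str:
    return "Americas: demand up"


def analyze_emea() -> str:
    return "EMEA: flat"


def analyze_apac() -> str:
    raise TimeoutError("data feed unavailable")


report = orchestrate(
    {"americas": analyze_americas, "emea": analyze_emea, "apac": analyze_apac}
)
print(report["is_partial"], sorted(report["completed"]), list(report["missing"]))
```

The `is_partial` flag is the piece that matters: downstream consumers can decide whether a report with a missing region is still usable or needs a human to look first.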

Message bus fails silently by default. An event that doesn't get processed won't crash the system. It just disappears. For most workflows, that's fine. For compliance notifications or fraud alerts, it's dangerous. Silent failures are hard to notice without monitoring, and most organizations don't invest enough in monitoring until something goes wrong.

Shared state can return inconsistent results. If two agents write conflicting data and there's no conflict resolution logic in place, the system won't flag it. It will just serve up whichever write came last. For most enterprise use cases, that means you need versioning and merge rules built into the store from the start.
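One common way to stop last-write-wins from hiding a conflict is optimistic concurrency: a write only succeeds if the writer saw the latest version. The sketch below assumes a hypothetical in-memory `VersionedStore`; the merge rule itself (what to do after a conflict is detected) is left to the caller.

```python
from typing import Any, Dict, Tuple


class VersionConflict(Exception):
    """Raised when a writer's view of a key is out of date."""


class VersionedStore:
    def __init__(self) -> None:
        self._data: Dict[str, Tuple[int, Any]] = {}  # key -> (version, value)

    def read(self, key: str) -> Tuple[int, Any]:
        return self._data.get(key, (0, None))

    def write(self, key: str, value: Any, expected_version: int) -> int:
        current_version, _ = self.read(key)
        if current_version != expected_version:
            raise VersionConflict(
                f"{key}: expected v{expected_version}, store is at v{current_version}"
            )
        self._data[key] = (current_version + 1, value)
        return current_version + 1


store = VersionedStore()

# Both agents read the same starting version.
version_seen_by_a, _ = store.read("discount_rate")
version_seen_by_b, _ = store.read("discount_rate")

store.write("discount_rate", 0.08, expected_version=version_seen_by_a)

try:
    store.write("discount_rate", 0.11, expected_version=version_seen_by_b)
except VersionConflict as err:
    # Agent B must re-read and reconcile instead of silently overwriting A.
    print("conflict:", err)
```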

The question to answer: when your system produces a wrong output, what do you need it to do? Stop entirely? Escalate? Deliver the best partial answer it can? Your answer rules out at least one pattern.

Variable 3: Pre-existing Integration Surface

Your existing systems are already biased toward certain patterns. Fighting that bias is expensive.

If your company runs event-driven infrastructure (Kafka, EventBridge, Azure Service Bus), the message bus pattern will feel natural. Your operations team already knows how to manage event flows. You're not adding a new surface to maintain.

If your organization runs on batch processing (nightly data loads, scheduled reconciliations, weekly reports), orchestrator-subagent or agent teams will be a cleaner fit. These patterns work well when the job is about scheduling and coordinating handoffs, not reacting to real-time events.

Shared state needs every agent to read from and write to a common store. If your organization has clear data access controls, implementation is straightforward. If your data ecosystem is fragmented and nobody is sure who can access what, you have a data governance problem to solve before you have an AI problem to solve.

The question to answer: what integration capabilities do you already have, and which patterns can build on them without replacing them?

Variable 4: Team Maturity and Observability Tooling

Multi-agent systems create observability challenges that traditional enterprise monitoring wasn't designed for. How much retooling you need depends on which pattern you pick.

Generator-verifier and orchestrator-subagent are the easiest to monitor. They're linear enough that traditional APM tools can track the execution sequence without much extra work.

Agent teams require process-level monitoring across multiple workers running at the same time. You need to know when each worker started, what it picked up, what it produced, and when it finished, all in parallel. If your team can't monitor and debug concurrent processes today, they'll need new tooling before production.

Message bus requires event correlation across the full pipeline. When an alert comes in and triggers five different agents downstream, you need to connect the dots between the original event and everything it caused. That takes purpose-built correlation tooling, not just log aggregation.

Shared state has the steepest observability requirement of all five patterns. You need a live view of what each agent is reading and writing, plus the ability to replay state changes to understand how the final output was produced. If you can't answer "why did the system produce this result" by replaying the sequence, you're not ready.

The question to answer: what level of observability does your team have right now, and what can you realistically build before going to production?

A Framework for Selecting the Right Pattern

Most decision frameworks hand you a flowchart: if X, pick Y. That doesn't work here. The same technical requirement can point to different patterns depending on your governance rules, your infrastructure, and how experienced your team is.

Instead, run your options through four filters in order. Each one eliminates patterns that won't work for you. Whatever survives all four is your starting point.

Step 1: What does governance require?

Start here. This filter can rule out patterns before anyone writes a line of code.

If you need a full audit trail showing every decision and the reasoning behind it, focus on generator-verifier or orchestrator-subagent. Rule out message bus and shared state unless your engineering team can build complete logging and state versioning before deployment, not after.

If you're in a regulated industry and need to explain your system's outputs to external auditors, only use shared state if you can track the full write history of every store, with timestamps and agent identification on every update.

If best-effort output is acceptable, all five patterns are still on the table.

Step 2: What does your business do in case of partial failure?

If a wrong answer is more dangerous than no answer (legal, financial, medical, regulatory contexts), use generator-verifier with a hard stop. Build escalation paths for every scenario where the generator hits its retry limit and still can't pass verification.

If incomplete output is still useful but needs a human to review it before it goes further, use orchestrator-subagent. Build the orchestrator to recognize partial successes and flag what's missing.

If every single piece of data flowing through the pipeline must be processed and accounted for, don't use a basic message bus. Events can vanish without anyone noticing. Either add dead letter queuing to catch unprocessed events, or switch to orchestrator-subagent with full task tracking so the orchestrator knows the status of every piece of work it delegated.
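Here is a small sketch of the dead-letter idea, written so that every published event ends up in exactly one of two places: the processed list or the dead-letter list. `AccountedBus`, the retry count, and the `fraud.alert` handler are assumptions for illustration; managed brokers offer their own dead-letter features with different configuration.

```python
from typing import Callable, Dict, List, Tuple


class AccountedBus:
    def __init__(self, max_retries: int = 2) -> None:
        self.max_retries = max_retries
        self.handlers: Dict[str, Callable[[dict], None]] = {}
        self.processed: List[Tuple[str, dict]] = []
        self.dead_letters: List[Tuple[str, dict, str]] = []

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self.handlers[topic] = handler

    def publish(self, topic: str, payload: dict) -> bool:
        handler = self.handlers.get(topic)
        if handler is None:
            self.dead_letters.append((topic, payload, "no subscriber"))
            return False
        last_error = ""
        for _ in range(self.max_retries + 1):  # first try plus retries
            try:
                handler(payload)
            except Exception as exc:
                last_error = repr(exc)
            else:
                self.processed.append((topic, payload))
                return True
        # Retries exhausted: park the event where a human can find it.
        self.dead_letters.append((topic, payload, last_error))
        return False


def handle_fraud_alert(payload: dict) -> None:
    if "account_id" not in payload:
        raise ValueError("alert has no account_id")


bus = AccountedBus()
bus.subscribe("fraud.alert", handle_fraud_alert)

events = [
    ("fraud.alert", {"account_id": "A-17"}),
    ("fraud.alert", {"amount": 900}),          # malformed: handler fails
    ("sanctions.update", {"list": "revised"}),  # nobody subscribed
]
for topic, payload in events:
    bus.publish(topic, payload)

# Every event is accounted for: processed or dead-lettered, never lost.
print(len(bus.processed), len(bus.dead_letters))
```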

Step 3: What does your existing infrastructure support?

If you're already running event-driven architecture (Kafka, EventBridge, Service Bus), message bus will be cheap to operate. Your team already knows the model.

If your environment runs on batch processing (nightly loads, scheduled jobs, weekly reports), agent teams or orchestrator-subagent will fit cleanly. These patterns work well with defined batch boundaries.

If your data layer has strong access controls and clear ownership, shared state will work. If it doesn't, shared state will create governance problems before it creates any AI value.

Step 4: What can your team actually manage?

If your AI team is new and your observability tooling is basic, start with generator-verifier or orchestrator-subagent. Both are linear enough to monitor with standard tools.

If your team has moderate experience and process-level monitoring in place, agent teams are manageable.

If your team has advanced capabilities with full event tracking and correlation tooling, message bus works.

If your team can do state monitoring with real-time replay, shared state works. Without replay, debugging shared state in production is close to impossible.

| Pattern | How it works | Enterprise sweet spot | Where it breaks |
|---|---|---|---|
| Generator-Verifier | Agent A creates; Agent B checks against criteria; loops until pass. | Contract generation, legal reviews. | The verification rules go stale because no human is responsible for updating them. |
| Orchestrator-Subagent | Central agent breaks down tasks, delegates, and synthesizes. | Quarter-end financial close, familiar delegation structures. | Teams recreate their own org chart in agent form; breaks when sub-agents need to talk to each other. |
| Agent Teams | Multiple agents run in parallel over long periods, each one building deep knowledge of its area. | Regional supply chain management. | Two agents write to the same shared resource at the same time. Without collision rules, you get conflicting data. |
| Message Bus | Agents communicate via a common event layer (pub/sub). | Compliance monitoring, scalable pipelines. | Silent failure (a dropped message goes unnoticed without strict dead-letter queues). |
| Shared State | Agents read/write directly to a common store without a boss. | Complex financial audits, deep collaborative research. | Infinite loops without strict termination/convergence criteria. |

The result of this process is not a final decision. The pattern that survives all four filters is your starting point, not your endpoint.
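The four filters can be sketched as a chain of elimination rules. Treat this as one simplified reading of the steps, not a decision engine: the `Profile` fields, the rule functions, and especially the idea of ranking observability on a single ladder are assumptions made to keep the example short. Step 3 is reduced here to its one hard rule (shared state needs strong data access controls).

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple

ALL_PATTERNS = {
    "generator-verifier", "orchestrator-subagent",
    "agent teams", "message bus", "shared state",
}
# Assumed ordering of observability maturity, lowest to highest.
LADDER = ["basic", "process", "event-correlation", "state-replay"]
REQUIRED_LEVEL = {
    "generator-verifier": "basic", "orchestrator-subagent": "basic",
    "agent teams": "process", "message bus": "event-correlation",
    "shared state": "state-replay",
}


@dataclass
class Profile:
    needs_full_audit_trail: bool
    logging_and_versioning_ready: bool
    wrong_answer_worse_than_none: bool
    strong_data_access_controls: bool
    observability: str  # one of LADDER


def governance(p: Profile) -> Set[str]:
    if p.needs_full_audit_trail and not p.logging_and_versioning_ready:
        return {"message bus", "shared state"}
    return set()


def failure_tolerance(p: Profile) -> Set[str]:
    if p.wrong_answer_worse_than_none:
        return ALL_PATTERNS - {"generator-verifier"}
    return set()


def infrastructure(p: Profile) -> Set[str]:
    return set() if p.strong_data_access_controls else {"shared state"}


def team_maturity(p: Profile) -> Set[str]:
    have = LADDER.index(p.observability)
    return {name for name, need in REQUIRED_LEVEL.items() if LADDER.index(need) > have}


FILTERS = [
    ("governance", governance), ("failure tolerance", failure_tolerance),
    ("infrastructure", infrastructure), ("team maturity", team_maturity),
]


def select_patterns(profile: Profile) -> Tuple[Set[str], Dict[str, str]]:
    remaining = set(ALL_PATTERNS)
    eliminated_by: Dict[str, str] = {}
    for filter_name, rule in FILTERS:
        ruled_out = rule(profile) & remaining
        for pattern in ruled_out:
            eliminated_by[pattern] = filter_name  # record why it was dropped
        remaining -= ruled_out
    return remaining, eliminated_by


profile = Profile(
    needs_full_audit_trail=True, logging_and_versioning_ready=False,
    wrong_answer_worse_than_none=False, strong_data_access_controls=True,
    observability="process",
)
survivors, reasons = select_patterns(profile)
print(sorted(survivors), reasons)
```

Keeping the `eliminated_by` record is the useful part: it lets you explain later why a pattern was ruled out, and revisit the choice when the underlying constraint changes.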

How to Evolve Without Starting Over

Nobody is going to shut down a system that processes hundreds of transactions a day for three months while the architecture gets rebuilt. That's not how enterprise works. The good news is that these five patterns were designed to be combined, not swapped out wholesale.

The most common evolution: Orchestrator-Subagent to Message Bus

This is the transition most teams will hit first as their agent count grows. It starts slowly. The orchestrator picks up more and more routing logic: send this type of task to this subagent, send that type to another. At some point, the orchestrator is spending most of its time deciding where work should go instead of managing how it gets done. That's the signal. The routing job has outgrown the orchestrator. What you actually need is a message bus.

Migration process:

Start by documenting every routing decision the orchestrator currently makes. Map out every conditional branch: if this type of task, send it here; if that type, send it there.

Build the messaging layer alongside the existing orchestrator. Don't remove anything yet.

Pick one route and move it to the message bus. Run both paths at the same time: the orchestrator's original logic and the new bus route. Compare their outputs.

If the results match and event logging works on the new path, cut that route from the orchestrator. It now runs through the bus.

Repeat for the next route. Then the next. Keep going until the only work left in the orchestrator is pulling results together, not deciding where tasks go.

At that point, the orchestrator has become just another event consumer on the bus. Migration done.

Expect this to take three to four months for a system with average routing complexity. The entire process runs in production because nothing gets replaced all at once. Every route moves independently, one at a time.
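The run-both-paths-and-compare step might look like the sketch below. It assumes routing decisions are side-effect free, so calling both paths for the same task is safe; `ShadowRoute`, the match threshold, and the two routing functions are hypothetical names for illustration.

```python
from typing import Any, Callable, List, Tuple


class ShadowRoute:
    """Serve the orchestrator's answer while checking the bus route against it."""

    def __init__(self, legacy: Callable[[dict], Any], candidate: Callable[[dict], Any],
                 required_matches: int = 3) -> None:
        self.legacy = legacy
        self.candidate = candidate
        self.required_matches = required_matches
        self.matches = 0
        self.mismatches: List[Tuple[dict, Any, Any]] = []
        self.cut_over = False

    def handle(self, task: dict) -> Any:
        if self.cut_over:
            return self.candidate(task)
        expected = self.legacy(task)
        try:
            actual = self.candidate(task)
        except Exception as exc:
            self.mismatches.append((task, expected, repr(exc)))
            return expected
        if actual == expected:
            self.matches += 1
        else:
            self.mismatches.append((task, expected, actual))
        return expected  # the legacy path stays authoritative until cut-over

    def try_cut_over(self) -> bool:
        # Only switch when enough comparisons ran and none disagreed.
        if self.matches >= self.required_matches and not self.mismatches:
            self.cut_over = True
        return self.cut_over


def orchestrator_route(task: dict) -> str:
    return "ar-agent" if task["type"] == "invoice" else "reporting-agent"


def bus_route(task: dict) -> str:
    topic_to_agent = {"invoice": "ar-agent"}
    return topic_to_agent.get(task["type"], "reporting-agent")


route = ShadowRoute(orchestrator_route, bus_route, required_matches=3)
for task_type in ["invoice", "variance", "invoice"]:
    route.handle({"type": task_type})
print(route.try_cut_over(), route.mismatches)
```

In practice the threshold would be far higher than three, and a mismatch would trigger investigation rather than just blocking the cut-over.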

The second common evolution: Orchestrator-Subagent to Agent Teams

You'll notice this one when your subagents keep doing the same setup work over and over. Every time a job finishes, the agent resets. Every time a new job starts, it reloads the same context it just threw away. When that reload cost starts adding up, it's time to make those agents persistent. Let them keep what they've learned between jobs instead of starting from scratch each time.

Migration process:

Start by finding the subagents that reload the same context every time they run. Those are your candidates.

Give each candidate a simple state store: a persistent context file that loads on startup and saves on exit. The agent picks up where it left off instead of rebuilding its understanding from scratch.

Run those agents as persistent workers instead of spinning them up fresh each time. Check whether the persistent context actually improves their accuracy. If it doesn't, the migration isn't worth the added complexity.

Once the persistent workers are running reliably, add a task queue to distribute jobs across them. At that point, they're no longer subagents waiting for the orchestrator to call them. They're a team pulling work from a shared queue.

Migrate other subagents the same way as the workload justifies it.
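The "loads on startup, saves on exit" state store from the second step can be as small as a JSON file wrapped in a context manager. This is a minimal sketch under assumptions: `PersistentContext`, `run_job`, the file name, and the `late_shipments` field are invented for the example, and it ignores concurrent access to the same file.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict


class PersistentContext:
    """Load an agent's context when a job starts and save it when the job ends."""

    def __init__(self, path: Path) -> None:
        self.path = Path(path)
        self.data: dict = {}

    def __enter__(self) -> dict:
        if self.path.exists():
            self.data = json.loads(self.path.read_text(encoding="utf-8"))
        return self.data

    def __exit__(self, exc_type, exc, tb) -> bool:
        # Write to a temp file first so a crash cannot leave a half-written context.
        tmp = self.path.with_suffix(self.path.suffix + ".tmp")
        tmp.write_text(json.dumps(self.data, indent=2), encoding="utf-8")
        tmp.replace(self.path)
        return False  # never swallow exceptions from the job


def run_job(context_path: Path, supplier: str, delayed: bool) -> Dict[str, int]:
    with PersistentContext(context_path) as ctx:
        delays = ctx.setdefault("late_shipments", {})
        if delayed:
            delays[supplier] = delays.get(supplier, 0) + 1
        return dict(delays)


with tempfile.TemporaryDirectory() as workdir:
    context_file = Path(workdir) / "apac_agent.json"
    run_job(context_file, "supplier-a", delayed=True)
    # A later job sees what the earlier one learned.
    print(run_job(context_file, "supplier-a", delayed=True))
```

Comparing agent accuracy with and without the file loaded is a straightforward way to run the check described above: if the persisted context does not change results, drop it.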

The third evolution: Introducing Shared State into an existing pattern

This one doesn't require throwing out your current architecture. Shared state can be layered on top of whatever coordination pattern you're already running, applied only to the parts of the process where agents need to share knowledge directly.

Look for the spots in your process where agents exchange information through a middleman that adds latency but no value. The orchestrator or coordinator passes data from agent A to agent B, but doesn't transform it, filter it, or make any decisions about it. It's just a relay.

Isolate those handoffs. Create a shared state store for them and let the agents read and write directly. Keep the coordinator for everything else. You end up with a hybrid: coordinated where coordination adds value, direct where it doesn't.

The hybrid that most mature enterprises land on: orchestrator-subagent for general workflows, shared state for the parts that need tight collaboration between agents.

One rule for all migrations: don't change patterns across an entire process at the same time. Move one path. Verify it works. Lock it down. Then move the next one. Teams that try to migrate everything in parallel quickly discover that coordinating a full-process migration is itself a multi-agent problem.

Conclusion: Start Small, but Start on Purpose

The most important word in enterprise AI architecture isn't intelligence, autonomy, or scalability. It's intentionality.

Most multi-agent systems at enterprises don't fail because the technology isn't ready. They fail because the team picked a pattern out of habit instead of choosing one by design. An orchestrator-subagent setup that mirrors the org chart. A message bus because the infrastructure team already knows Kafka.

The framework in this piece won't make the decision for you. What it will do is force you through the right questions in the right order. What does governance require? What happens on failure? What does your current infrastructure support? What can your team actually operate and observe?

Those answers will narrow five patterns down to one or two. Pick the simpler one. Build observability into the first deployment, not as a follow-up ticket that keeps getting pushed to next sprint.

Watch where it fails. Adjust.

The enterprises that get this right aren't running the most sophisticated multi-agent systems. They're running the most appropriate ones. And they can tell you exactly why they chose what they chose.

That's the kind of AI capability that compounds.

Resources

- Anthropic: Multi-agent coordination patterns — The technical source, published April 10, 2026

- Anthropic: Building multi-agent systems — when and how to use them — Prerequisite reading on when multi-agent investment is justified

- Claude Code documentation — Orchestrator-subagent in production practice