Hi everyone 👋

Welcome back to this week's issue.

When the co-founder and CTO of arguably the most ambitious AI company in the world gets up on a stage and proclaims that they are throwing in the towel on what has come to be known as the 'USB-C for AI,' the entire developer community sits up and takes notice.

This is precisely what happened on March 11, 2026, when Perplexity's Denis Yarats took the stage at the Ask 2026 conference and dropped what could only be described as a bombshell: Perplexity is abandoning the Model Context Protocol, at least within the organization, in favor of traditional APIs and CLIs. This has, of course, sent shockwaves throughout the AI community, reigniting a conversation that has quietly been building for months.

Is MCP overhyped? Is the CLI the unsung hero that everyone needs? And when should you, in fact, turn to a traditional API? This article will delve into all three, as well as what precisely Perplexity discovered, and provide a clear and concise comparison for you to make your own decisions.

The Moment That Started the Debate

To understand the context of the debate, you must first understand the promise of MCP. Anthropic launched the Model Context Protocol in late 2024 as a way for developers to connect external tools and data sources with their artificial intelligence models.

The promise was simple: instead of hand-coding a separate connection between your AI agent and a GitHub repository, a Slack workspace, a database, or a file system, you'd deploy a single MCP server, and any MCP-compliant agent could use it.

MCP took off quickly: by December 2025 it had reached 97 million monthly SDK downloads and over 5,800 verified servers. It was then donated to the Linux Foundation under a new Agentic AI Foundation, with OpenAI, Google, Microsoft, AWS, and Cloudflare as founding members. Perplexity itself released a verified MCP server in November 2025.

However, MCP's production reality check came soon after.

The key statistic that kicked off the whole debate: MCP can use up to 72% of an agent's context window before even processing a single user message.

This statistic came from a real-world Apideck deployment with just three MCP servers (GitHub, Slack, and Sentry), which loaded 40 tool definitions and consumed 143,000 of 200,000 available tokens. That left only 57,000 tokens, less than a third, for the actual work.

Important nuance: that 72% figure is a worst-case scenario from one specific deployment with no lazy-loading or schema pruning. It's not Perplexity's own measurement. But it reflects a real structural problem in how MCP naively loads tool schemas.
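The arithmetic behind that figure is easy to check. Here is a minimal sketch that uses only the numbers quoted above; the function name is illustrative, and the per-tool average is simply derived from those numbers rather than reported by Apideck.

```python
def context_budget(window_tokens: int, schema_tokens: int) -> tuple[float, int]:
    """Return (share of the window used by tool schemas, tokens left for work)."""
    share = schema_tokens / window_tokens
    remaining = window_tokens - schema_tokens
    return share, remaining


# Figures from the Apideck deployment described above.
WINDOW_TOKENS = 200_000
SCHEMA_TOKENS = 143_000
TOOL_COUNT = 40

share, remaining = context_budget(WINDOW_TOKENS, SCHEMA_TOKENS)
avg_per_tool = SCHEMA_TOKENS / TOOL_COUNT  # derived average, not a measured value

print(f"schemas use {share:.1%} of the window")        # 71.5%, quoted as 72%
print(f"{remaining:,} tokens remain for actual work")  # 57,000
print(f"about {avg_per_tool:,.0f} tokens per tool definition on average")
```

The point of the exercise: the cost is paid before the first user message, and it scales with the number of tool definitions loaded, not with the number of tools actually used.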

Yarats pointed to two main issues: context window overhead and authentication friction. Every additional MCP server is another auth boundary to manage, so authentication becomes an operational burden of its own. For a company running production agents at scale, these costs add up fast.

So, Perplexity's answer to this problem was to launch their Agent API: a single REST endpoint that serves models from OpenAI, Anthropic, Google, xAI, NVIDIA, and more, with some useful tools like web search included, all under a single API key. No schema overheads. No server authentication. Just a nice, traditional API that deals with all that complexity behind the scenes.

This was a pragmatic decision rather than a philosophical one. Perplexity still operates their MCP server for external developers and desktop tools: after all, for some use cases, MCP still makes sense!

And they weren't alone. Y Combinator CEO Garry Tan opted to build his own CLI rather than use MCP, citing reliability and speed. Cloudflare published a technical teardown of its 'Code Mode', which cut the tokens needed to describe 2,500 API endpoints from ~244,000 to around 1,000, a 244x reduction.

What Is MCP, Really?

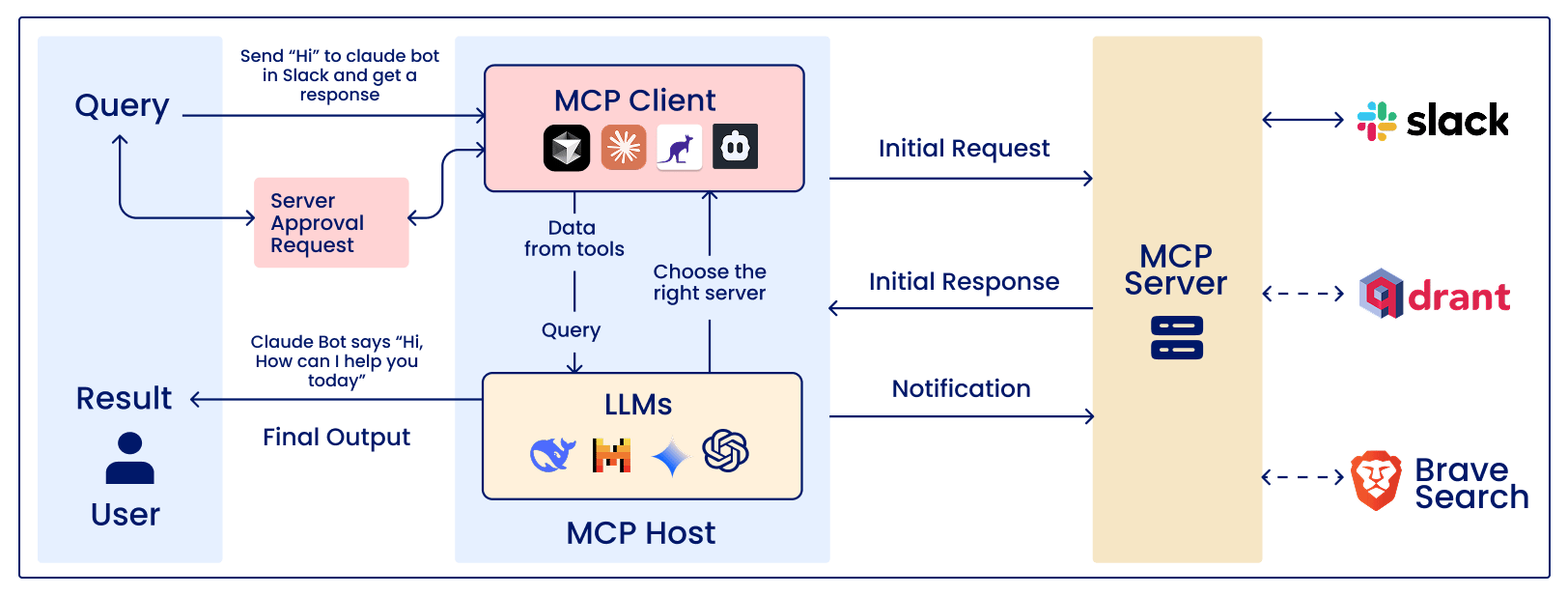

MCP stands for Model Context Protocol, a client-server framework for standardizing how artificial intelligence agents use tools. An MCP server exposes a set of tools, which might include a search tool, a database tool, a file tool, or an API tool. An MCP client, which could be your artificial intelligence application, calls these tools.

MCP can be defined as a common language for artificial intelligence agents and tools. Without a common language, integrating tools with artificial intelligence agents can be a lot of work. With a common language, a tool vendor can implement the language once, and all agents can use the tools without additional coding.

How it works under the hood

When an MCP client connects to a server, a discovery handshake takes place: the server sends information about the tools it makes available, including each tool's name, description, parameter schema, and return format.

The tool call itself follows a request-response pattern: the model decides to call a tool and issues the call, the MCP server executes the tool, and the result is returned to the model.

Note that the tool's execution happens outside the context window; only the result of the call is added to it.
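To make the discovery and call steps concrete, here is a simplified, in-memory sketch. MCP messages are JSON-RPC 2.0, and the `tools/list` and `tools/call` method names and the `inputSchema` field follow the public MCP specification. Everything else is an assumption for illustration: the `get_weather` tool is hypothetical, and the toy `handle` function stands in for a real server, skipping the initialization step, the transport layer, and input validation.

```python
import json

# One tool definition, shaped like an entry in a "tools/list" result.
# The tool itself (get_weather) is hypothetical.
TOOLS = [
    {
        "name": "get_weather",
        "description": "Return the current weather for a city.",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    }
]


def handle(request: dict) -> dict:
    """Toy server: answer a JSON-RPC request for discovery or a tool call."""
    method = request["method"]
    params = request.get("params", {})
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call" and params.get("name") == "get_weather":
        city = params["arguments"]["city"]
        text = f"Sunny in {city}"  # stand-in for real tool logic
        result = {"content": [{"type": "text", "text": text}]}
    else:
        error = {"code": -32601, "message": "Method not found"}
        return {"jsonrpc": "2.0", "id": request["id"], "error": error}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# 1. Discovery: a naive client places every returned schema in the model's context.
listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
schema_chars = len(json.dumps(listing["result"]["tools"]))

# 2. Tool call: the server runs the tool and sends back only the result.
reply = handle({
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {"name": "get_weather", "arguments": {"city": "Paris"}},
})

print(f"schema payload: {schema_chars} characters")
print(reply["result"]["content"][0]["text"])
```

Even in this toy, the schema payload is larger than the result it eventually produces, which is the overhead pattern the debate is about.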

Where MCP shines

IDE and desktop tool integrations (Claude Desktop, Cursor, VS Code)

Open ecosystems where pluggability matters — connect once, use everywhere

Rapid prototyping and local development environments

Scenarios where dynamic tool discovery is genuinely needed

Where MCP struggles

High-scale production systems where token costs multiply across thousands of requests

Enterprise deployments requiring strict security, compliance, and auth boundaries

Systems where most available tools will never actually be called

Environments where horizontal scaling and stateless routing are required

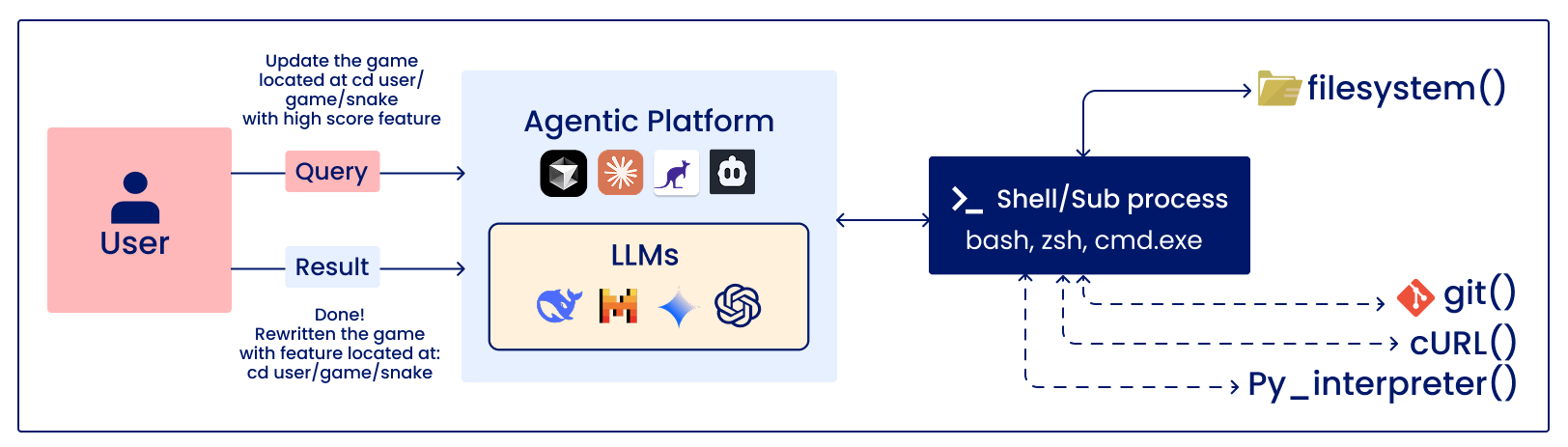

What Is CLI Tool Calling?

CLI tool calling is the old-school approach that never really went away, and it is quietly making a comeback in the AI agent stack.

No protocol layer; your AI agent calls command-line tools directly: running scripts, executing binaries, calling system utilities, and piping results.

In the context of an AI agent, this generally means the model creates a command-line call or small script, an environment runs the call, and the results are fed back into the model.
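A minimal sketch of that loop in Python, assuming the model's proposed command has already been parsed into an argument list. The `run_cli_tool` helper and the example command are hypothetical; a real agent would also sandbox the process and check the command against an allowlist before running it.

```python
import subprocess
import sys


def run_cli_tool(argv: list[str], timeout_s: float = 10.0) -> str:
    """Run one command without a shell and return text to feed back to the model."""
    proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout_s)
    if proc.returncode != 0:
        # Surface failures as text so the model can react to them.
        return f"exit code {proc.returncode}: {proc.stderr.strip()}"
    return proc.stdout.strip()


# Stand-in for a command the model proposed. Using the current Python
# interpreter keeps the example portable across operating systems.
model_command = [sys.executable, "-c", "print(sum(range(10)))"]

observation = run_cli_tool(model_command)
print(observation)  # 45
```

Passing an argument list instead of a shell string avoids shell injection, and the only thing that enters the context is the command and its short text output.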

Apideck demonstrated an extreme case of this: it replaced MCP's tens of thousands of context tokens with an 80-token CLI agent prompt, using a technique called progressive disclosure. The idea is to fetch tool details only when you actually need them, not before.

The basic benefit: progressive disclosure

MCP's default behavior is to load everything at once, whereas a CLI agent can load tool information lazily: the model is told that a tool exists in a few tokens, and the tool's schema is loaded only if the model decides to use it. Benchmarks run by Scalekit showed CLIs to be 10 to 32x cheaper than MCP, with 100% reliability versus MCP's 72%.
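The difference between eager and lazy loading can be sketched in a few lines. This is an illustration under stated assumptions, not Apideck's or Scalekit's implementation: the catalog, tool names, and schemas are hypothetical, and character counts stand in for token counts.

```python
import json

# Hypothetical catalog: a one-line summary plus a full schema per tool.
CATALOG = {
    "issues.create": {
        "summary": "Create an issue in a repository",
        "schema": {
            "type": "object",
            "properties": {
                "repo": {"type": "string", "description": "owner/name of the repository"},
                "title": {"type": "string", "description": "Short issue title"},
                "body": {"type": "string", "description": "Markdown body of the issue"},
            },
            "required": ["repo", "title"],
        },
    },
    "messages.post": {
        "summary": "Post a message to a channel",
        "schema": {
            "type": "object",
            "properties": {
                "channel": {"type": "string", "description": "Channel name or ID"},
                "text": {"type": "string", "description": "Message text"},
            },
            "required": ["channel", "text"],
        },
    },
    "errors.list": {
        "summary": "List recent errors for a project",
        "schema": {
            "type": "object",
            "properties": {
                "project": {"type": "string", "description": "Project slug"},
                "limit": {"type": "integer", "description": "Maximum number of errors"},
            },
            "required": ["project"],
        },
    },
}


def eager_prompt() -> str:
    """Naive approach: every full schema goes into the context upfront."""
    return json.dumps({name: tool["schema"] for name, tool in CATALOG.items()})


def index_prompt() -> str:
    """Progressive disclosure, step 1: names and one-line summaries only."""
    return "\n".join(f"{name}: {tool['summary']}" for name, tool in CATALOG.items())


def describe(name: str) -> str:
    """Progressive disclosure, step 2: one schema, fetched only on demand."""
    return json.dumps(CATALOG[name]["schema"])


eager_cost = len(eager_prompt())
lazy_cost = len(index_prompt()) + len(describe("messages.post"))
print(f"eager: {eager_cost} characters, lazy: {lazy_cost} characters")
```

With three tools the saving is modest; the gap widens as the catalog grows, because the lazy cost depends on the tools actually used while the eager cost depends on the tools available.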

Where CLI tool calling shines

Automation pipelines and scripting workflows with a fixed, known tool set

Situations demanding maximum token efficiency and lowest latency

Production agents where reliability and observability are paramount

Developer tools and local environments — the Unix philosophy plays well here

Where CLI tool calling struggles

Cross-system interoperability — every integration is custom-built

Ecosystems where third-party tool discovery matters

Environments without reliable shell access or sandboxing

Teams without strong DevOps/infrastructure discipline to manage scripts at scale

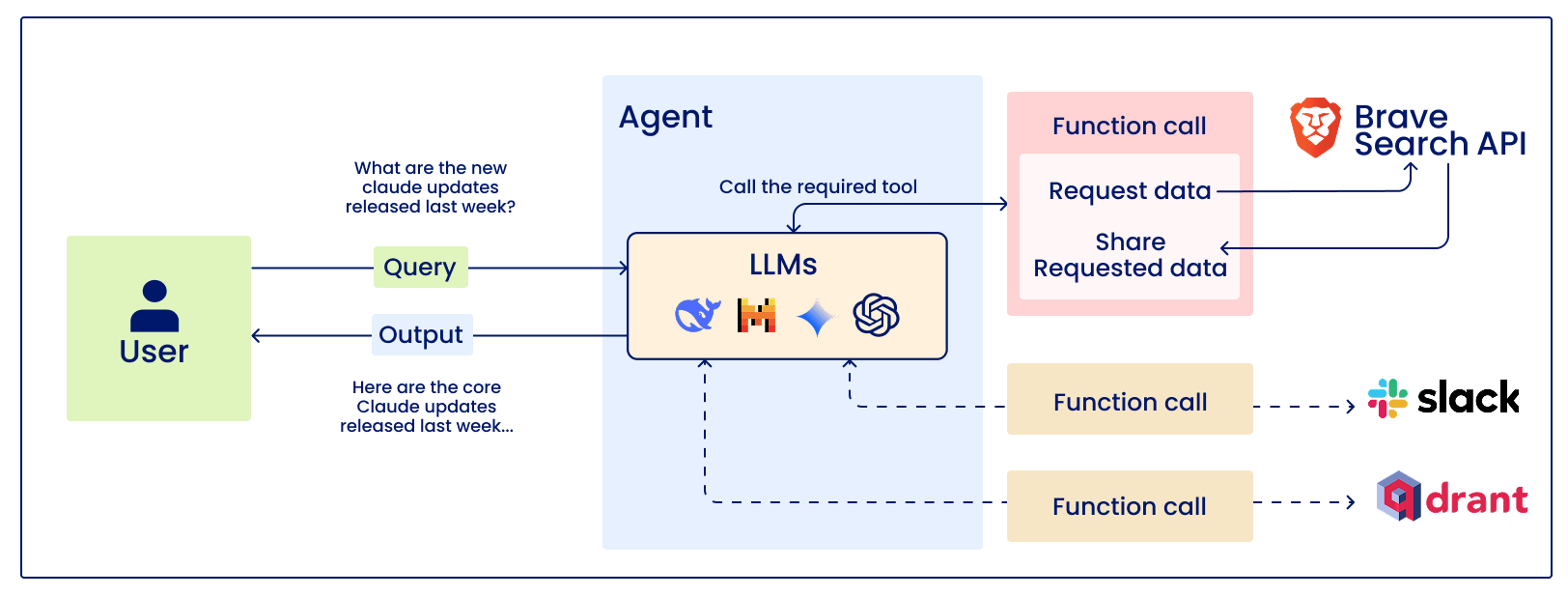

What Is Traditional API Calling?

Traditional API calling is the foundation of the modern web: REST endpoints, JSON bodies, OAuth tokens, and HTTP. For an AI agent, this means the model is given the information it needs to make a specific set of HTTP requests.

This is what Perplexity was moving towards with their Agent API: one endpoint to rule them all, routing internally to multiple models and tools. The agent developer does not need to think about MCP servers or CLI scripts; they simply call one API with one key.

The main advantage: simplicity and tooling that has been battle-tested for decades

APIs have decades of tooling behind them: standard logging, distributed tracing, rate limiting, circuit breakers, SLAs, and retries. Every DevOps team already knows how to monitor and operate them. There's no protocol overhead, no schema discovery step, no multiple auth boundaries. You authenticate once, you call the endpoint, you get the result.
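As a rough illustration of "authenticate once, call the endpoint", here is a sketch using only Python's standard library. The URL and payload fields are placeholders, not Perplexity's actual API, and the retry helper is a simplified stand-in for what production HTTP clients and gateways already provide.

```python
import json
import time
import urllib.request
from typing import Callable

API_URL = "https://api.example.com/v1/agent"  # hypothetical endpoint


def build_request(api_key: str, payload: dict) -> urllib.request.Request:
    """One endpoint, one bearer token, one JSON body."""
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_over_http(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


def call_with_retries(send: Callable[[], dict], attempts: int = 3,
                      base_delay_s: float = 0.5) -> dict:
    """Retry with exponential backoff; re-raise after the last attempt.

    Catches OSError, which covers urllib's URLError and connection errors.
    A production client would retry only on transient failures.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return send()
        except OSError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay_s * 2 ** attempt)
    raise AssertionError("unreachable")


# Demonstration with a stub that fails twice, so no network is needed.
calls = {"count": 0}


def flaky_send() -> dict:
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("simulated transient failure")
    return {"status": "ok"}


result = call_with_retries(flaky_send, base_delay_s=0.01)
print(result, "after", calls["count"], "attempts")
```

Against a real endpoint the call would look like `call_with_retries(lambda: send_over_http(build_request(key, payload)))`. Nothing here is agent-specific, which is exactly the appeal: the same logging, tracing, and retry tooling a team already runs applies unchanged.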

Where traditional APIs shine

Production systems where predictability, cost, and reliability are non-negotiable

Enterprise environments with existing API governance and security frameworks

Well-defined workflows where the tool set is stable and known in advance

Teams that want minimal infrastructure complexity

Where traditional APIs struggle

Dynamic, open-ended agents that need to discover and adapt to new tools

Scenarios requiring interoperability across multiple AI providers without custom code

Prototyping environments where speed of integration matters more than optimization

Head-to-Head: MCP vs CLI vs Traditional API

| Criteria | MCP (Model Context Protocol) | CLI Tool Calling | Traditional API Calling |
|---|---|---|---|
| Token Efficiency | Low - loads all schemas upfront (can use up to 72% of context window) | High - around 80 tokens vs thousands | High - no schema overhead |
| Setup Complexity | Medium - requires MCP server and per-tool auth configuration | Low - just scripts or binaries you already have | Low - standard HTTP calls, well-documented |
| Interoperability | High - universal standard with 5,800+ servers and growing | Low - custom glue code needed per workflow | Medium - each API has its own conventions |
| Production Readiness | Evolving - auth and scaling patterns still maturing | Proven - battle-tested shell scripting | Proven - decades of reliable tooling |
| Security / Auth | Complex - multiple auth boundaries to manage | Direct - leverages OS-level permissions | Clean - single auth flow per endpoint |
| Observability | Limited - often requires custom logging implementation | Native - OS logs, process traces, stderr/stdout | Standard - HTTP metrics, logs, APM-ready |
| Best For | Dev tools, IDE integrations, open/plugin ecosystems | Automation scripts, local workflows, fast prototypes | Production APIs, enterprise systems, scale |
| Worst For | Cost-sensitive, high-scale production workloads | Cross-system orchestration, dynamic tool discovery | Rapidly changing toolsets, plug-and-play discovery |

So, is MCP Dead?

No. MCP is certainly not dead, and the whole 'MCP is dead' idea does not capture the actual story: MCP is growing and maturing. It has institutional support from the Linux Foundation, OpenAI, Google, Microsoft, and AWS. Claude Code launched Tool Search for lazy loading in v2.1.7, which can reduce context waste by as much as 98.7%. Cloudflare has also deployed remote MCP servers at scale. AWS launched stateful MCP features in 14 regions on March 10, 2026, a day before Yarats' announcement.

What is changing is the naive assumption that MCP is the default answer to everything. This was always a false assumption, and the ecosystem is now honest enough to admit it.

The real takeaway: the industry is converging on a pragmatic hybrid. MCP for desktop and IDE tools where discoverable pluggability matters. Traditional APIs and CLIs for production agents where token economics and security are paramount.

Perplexity's decision is an optimization decision, not a philosophical one. They still serve the developer community through an MCP server. However, for their own systems, where cost, scalability, and security are the main variables, traditional APIs and CLIs are simply the better fit for now.

The tension is not "MCP vs APIs"; it is about understanding which one solves which problem. MCP's tool discovery mechanism is actually a good one. MCP's default 'load everything upfront' implementation pattern is not a good one... That's an engineering discipline issue, and solutions exist for it.

How to Choose: A Decision Framework

Choose MCP if…

• You are building tools for IDEs, desktop apps, or developer environments

• Cross-AI-provider interoperability is a real need

• You have a dynamic tool set that needs to be discoverable

• You are prototyping or building in an open ecosystem

• You implement lazy loading – never use naive upfront schema loading

Choose CLI Tool Calling if...

• Token efficiency is your primary constraint

• You have a fixed, known set of tools

• You need maximum reliability and speed

• You have strong shell/script infrastructure in place

Choose Traditional API Calling if...

• You are building production systems

• You need predictable authentication, visibility, and operational tooling

• You have a stable tool set – no need for dynamic discovery

• You are building for enterprise clients that need strong security and compliance

Consider Hybrid

This is where most production teams will end up: MCP for the developer-facing layer (IDE integrations, local tooling), and traditional APIs or CLIs for the production agent infrastructure. This is also reflected in our own architecture: MCP on the outside for devs, clean APIs on the inside for scale.

Conclusion

Perplexity’s announcement is a healthy sign for the ecosystem as a whole. The peak of the hype cycle, where a single technology would solve all integration problems for all use cases at all scales, is behind us.

MCP addressed a genuine problem: the chaos of bespoke tool integrations for AI. However, it created new ones at scale: context overhead, auth complexity, and operational gaps that aren't fully addressed in its current spec. CLIs address token efficiency but sacrifice interoperability. Traditional APIs address scale and reliability but force you to build your own orchestration.

The answer to "which approach should I use?" has always been "the one that fits your context." What's new in 2026 is that we've gained enough production experience to know what that actually means, and that there are teams brave enough to say it out loud.

Resources & Further Reading

MCP 2026 Roadmap (Official) → blog.modelcontextprotocol.io/posts/2026-mcp-roadmap/

Scalekit: MCP vs CLI Benchmarks (10-32× cheaper, 100% reliability) → scalekit.com/blog/mcp-vs-cli-use

Apideck: The 72% Context Window Problem → apideck.com/blog/mcp-server-eating-context-window-cli-alternative

Repello: Perplexity Ask 2026 Analysis → repello.ai/blog/mcp-vs-cli

Anthropic: Claude Code Tool Search (Lazy Loading) → anthropic.com/engineering/code-execution-with-mcp

Teleport: MCP Enterprise Security Gaps → goteleport.com/blog/complicating-mcp-enterprise/

Thanks for reading.

— Rakesh's Newsletter